Google hits pause on Gemini’s people pictures

After a diversity initiative proved disastrous, it’s time to loosen chatbot guardrails

Today let’s talk about how Google’s effort to bring diversity to AI-generated images backfired, and consider how tech platforms’ efforts to avoid public-relations crises over chatbot outputs are undermining trust in their products.

This week, after a series of posts on the subject drew attention on X, Stratechery noted that Google’s Gemini chatbot seemed to refuse all attempts to generate images of white men. The refusals, which came as a result of the bot rewriting user prompts in an effort to bring more diversity into the images it generates, led to some deeply ahistorical howlers: racially diverse Nazis, for example.

The story has proven to be catnip for right-leaning culture warriors, who at last had concrete evidence to support the idea that Big Tech was out to get them. (Or at least, would not generate a picture of them.) “History messin’,” offered the conservative New York Post, in a cover story.

“Joe Biden’s America,” was the caption on House Republicans’ quote-post of the Post. It seems likely that the subject will eventually come up at a hearing in Congress.

With tensions running high, on Thursday morning Google said it had paused Gemini’s ability to generate images. “We're already working to address recent issues with Gemini's image generation feature,” a spokesman told me today over email. “While we do this, we're going to pause the image generation of people and will re-release an improved version soon.”

Gemini is not the first chatbot to rewrite user prompts in an effort to make them more diverse. When OpenAI released DALL-E 3 as part of ChatGPT, I noted that the bot was editing my prompts to add gender and racial diversity.

There are legitimate reasons to edit prompts in this way. Gemini is designed to serve a global audience, and many users may prefer a more inclusive set of images. Given that bots often don’t know who is using them, or what genders or races users expect to see in the images that are being requested, tweaking the prompt to offer several different choices increases the chances that the user will be satisfied the first time.

As Google’s communications team put it Wednesday on X: “Gemini’s AI image generation does generate a wide range of people. And that’s generally a good thing because people around the world use it. But it’s missing the mark here.” (The response strongly suggests to me that Gemini’s refusal to depict white people was a bug rather than a policy choice; hopefully Google will say this explicitly in the days to come.)

But there’s another reason chatbots are rewriting prompts this way: platforms are attempting to compensate for the lack of diversity in their training data, which generally reflects the biases of the culture that produced it.

As John Herrman wrote today in New York magazine:

This sort of issue is a major problem for all LLM-based tools and one that image generators exemplify in a visceral way: They’re basically interfaces for summoning stereotypes to create approximate visual responses to text prompts. Midjourney, an early AI image generator, was initially trained on a great deal of digital art (including fan art and hobbyist illustrations) scraped from the web, which meant that generic requests would produce extremely specific results: If you asked it for an image of a “woman” without further description, it would tend to produce a pouting, slightly sexualized, fairly consistent portrait of a white person. Similarly, requests to portray people who weren’t as widely represented in the training data would often result in wildly stereotypical and cartoonish outputs. As tools for producing images of or about the world, in other words, these generators were obviously deficient in a bunch of different ways that were hard to solve and awkward to acknowledge in full, though OpenAI and Google have occasionally tried. To attempt to mitigate this in the meantime — and to avoid bad PR — Google decided to nudge its models around at the prompt level.

The platforms’ solution to an interface that summons stereotypes, then, is to edit their outputs to be less stereotypical.

But as Google learned the hard way this week, race is not always a question of stereotypes. Sometimes it’s a question of history. A chatbot that is asked for images of German soldiers from the 1930s and generates not a single white one is making an obvious factual error.

And so, in trying to dodge one set of PR problems, Google promptly (!) found itself dealing with another.

I asked Dave Willner, an expert in content moderation who most recently served as head of trust and safety at OpenAI, what he made of Gemini's diversity efforts.

“This intervention wasn’t exactly elegant, and much more nuance will be needed to both accurately depict history (with all of its warts) and to accurately depict the present (in which all CEOs are not white men and all airline stewards are not Asian women),” he told me. “It is solvable but it will be a lot of work, which the folks doing this at Google probably weren’t given resources to do correctly in the time available."

What kind of work might address the underlying issue?

One strategy would be to train large language models on more diverse data sets. Given platforms’ general aversion to paying for content, this seems unlikely. It is not, however, impossible: last fall, in an effort to seed its Firefly text-to-image generator with high-quality images, Adobe paid photographers to shoot thousands of photos that would form the basis for its model.

A second strategy, and one that undoubtedly will prove quite controversial inside tech platforms, is to loosen some of the guardrails on some of their LLMs. I’m on record as saying that I think chatbots are generally too censorious, at least for their adult users. Last year ChatGPT refused to show me an image it had made of a teddy bear. Chatbots also shun many questions about basic sexual health.

I understand platforms’ fear that they will be held responsible, and even legally liable, for any questionable output from their models. But just as we don’t blame Photoshop when someone uses the app to draw an offensive picture, we shouldn’t always hold chatbots responsible when a user prompt generates an image that some people take exception to. Platforms could build more trust by being upfront about their models’ biases and limitations, starting with those of their training data, and by reminding people to use them responsibly.

If people can’t use these bots the way they want to, they will seek out worse alternatives. To use the worst example imaginable, here’s a story about the right-wing platform Gab launching 91 chatbots over the past month with explicit instructions to deny the Holocaust and promote the lie that climate change is a scam. The wide availability of open-source, “unsecured” AI models means that people will soon be able to generate almost any kind of media on their laptops. And while there are legitimate legal reasons to maintain strict content policies (the traditional platform immunity shield, Section 230, probably doesn’t apply to chatbots), the current hyper-restrictive policies seem likely to trigger significant backlash from congressional Republicans, among others.

A final, longer-term strategy is to personalize chatbots more to the user. Today, chatbots know almost nothing about you. Even if Google has some information about your location, gender, or other demographic characteristics, that data does not inform the answers that Gemini gives you. If you only want Gemini to generate pictures of a particular race, there’s no way to tell it that.

Soon there will be, though. Earlier this month OpenAI introduced a memory feature for ChatGPT, effectively carving out a portion of the chatbot’s context window to collect information about you that can inform its answers. ChatGPT currently knows my profession, the city I live in, my birthday, and other details that could help it tailor its answers to me over time. As features like this are adopted by other chatbots, and as their memories improve, I suspect they will gradually show users more of what they want to see and less that they don’t. (For better and for worse!)

By now it’s a hack trope to quote a chatbot in a column like this, and yet I couldn’t resist asking Gemini itself why chatbots modify their prompts to introduce diversity into generated images. It gave a perfectly reasonable answer, reflecting many of the points above, and ended by offering a handful of “important considerations.”

“Sometimes specificity is important,” the bot noted, wisely. “It's okay for a prompt to be specific if it's relevant to the image's purpose.”

It also noted that the bot’s output should reflect the wishes of the person using it.

“The user should ultimately have the choice of whether to accept the chatbot's suggestions or not,” it said. And for once, a chatbot was giving advice worth listening to.


On the podcast this week: Google DeepMind CEO Demis Hassabis joins us to discuss all those new AI models, his P(doom), and — yes — why Gemini rewrites your prompts to show you more diversity.

Apple | Spotify | Stitcher | Amazon | Google | YouTube

Governing

- In another maddening instance of non-consensual deepfake porn on X, AI-generated nude videos of comedian Bobbi Althoff were circulated for at least a day before the platform removed them. They generated millions of views. (Drew Harwell / Washington Post)

- X said it took down accounts and posts linked to farmer protests in India, following an order by the Indian government. The company used to fight such orders in court, before Musk bought it and jettisoned most of the legal team. (Reuters)

- The FTC found no evidence that X violated a consent order that protects user data, thanks to insubordinate Twitter employees who ignored Elon Musk's instructions. Amazing. (Cat Zakrzewski / Washington Post)

- The US Supreme Court will hear challenges next week to Florida and Texas social media laws brought by NetChoice, a small right-leaning lobby knee-deep in the free speech debate. "NetChoice’s total revenue jumped from just over $3 million in 2020 to $34 million in 2022." (Isaiah Poritz, Tonya Riley and Emily Birnbaum / Bloomberg)

- Google DeepMind is forming a new organization called AI Safety and Alignment out of its existing AI safety teams and will bring on new generative AI researchers and engineers. (Kyle Wiggers / TechCrunch)

- Meta’s Oversight Board widened its scope to include Threads, the first time the board has expanded to cover a new app. (Oversight Board)

- AI experts and industry executives, including “AI godfather” Yoshua Bengio, signed an open letter urging more regulation around the creation of deepfakes. (Anna Tong / Reuters)

- ElevenLabs’ management reportedly says it has no desire to see its product being used for scams, but with a number of audio deepfakes and the Biden robocall stemming from its AI tools, its guardrails are failing. (Margi Murphy / Bloomberg)

- Meta and Microsoft are lobbying EU regulators against Apple’s proposals for complying with the Digital Markets Act, arguing that Apple should make more concessions on iOS. (Michael Acton / Financial Times)

- As startups in China race to dominate AI, companies are finding themselves having to rely on underlying open-source systems built by companies in the US. Look on the bright side — Facebook finally got into China! (Via Llama.) (Paul Mozur, John Liu and Cade Metz / The New York Times)

- Microsoft’s Copilot AI search made up quotes attributed to Vladimir Putin at a press conference, even though Putin had made no such statement. (Rani Molla / Sherwood)

- Meta says a new Indonesia law does not require it to pay news publishers for content they voluntarily post on its platforms, despite the law’s requirement that platforms pay media outlets for content. (Reuters)

Industry

- Reddit reportedly plans to sell a large portion of its IPO shares to 75,000 of its most loyal users, an unusual move that could cost it favor with banks. (Corrie Driebusch / The Wall Street Journal)

- Reddit will allocate shares using a tiered system, starting with certain users and moderators identified by the company, followed by a system ranked by karma scores. (Emma Roth, Elizabeth Lopatto and Alex Heath / The Verge)

- The company plans to trade on the New York Stock Exchange under “RDDT” following its IPO. (Jonathan Vanian / CNBC)

- Its IPO filing revealed that Sam Altman is Reddit’s third-largest shareholder, controlling almost nine percent of the company’s stock. (Alex Weprin / The Hollywood Reporter)

- Buyer revealed – Reddit reportedly struck a $60 million deal with Google to make its content available for the tech giant’s AI model training. Reddit's announcement makes no mention of the AI training aspect, of course. (Anna Tong, Echo Wang and Martin Coulter / Reuters)

- Google is also developing ways to make Reddit content and communities easier to find across its products. Does this mean we can stop appending "reddit" to all of our searches now? (Abner Li / 9to5Google)

- Match Group is partnering with OpenAI to allow its employees to use AI tools for work-related tasks, according to an overly enthusiastic press release written by ChatGPT. (Sarah Perez / TechCrunch)

- Google released Gemma 2B and 7B, two open-source AI models that let developers use the research that went into building Gemini. (Emilia David / The Verge)

- Chrome is getting a new Gemini-powered “Help me write” feature that provides writing suggestions for short-form content and helps draft product descriptions. (Jess Weatherbed / The Verge)

- Some TikTok managers are reportedly concerned about how video-editing app CapCut could put more scrutiny on TikTok now that it’s being run by ByteDance executives in China. (Juro Osawa / The Information)

- TikTok influencers are earning big on live streams. Some users say they feel addicted to the gamified feature; one said he spent almost $200,000 on his favorite streamers in a month. (Julian Fell, Teresa Tan and Ashley Kyd / ABC)

- WhatsApp added four new text formatting options, including bullet and numbered lists, block quotes, and inline code, which help highlight and organize messages. WhatsApp is really turning into a full CMS, huh? (Jess Weatherbed / The Verge)

- Instagram is expanding its marketplace tool to Canada, Australia, New Zealand, the UK, Japan, India and Brazil, to connect brands with creators. (Ivan Mehta / TechCrunch)

- Bluesky launched federation, allowing anyone to run their own server connected to Bluesky’s network. (Sarah Perez / TechCrunch)

- Roblox paid out a record $740.8 million to game developers in 2023, according to a company filing. (Cecilia D’Anastasio / Bloomberg)

- Stability AI offered an early preview of its Stable Diffusion 3.0 image generator, promising improved performance. (Sean Michael Kerner / VentureBeat)

- Magic, the startup in which former GitHub CEO Nat Friedman and investment partner Daniel Gross invested $100 million, says it has created a new LLM that can process 3.5 million words’ worth of text, five times as much as Gemini. It may have developed new reasoning techniques as well. (Stephanie Palazzolo and Amir Efrati / The Information)

- By the end of last year, 48 percent of the most widely used news sites were blocking OpenAI’s crawlers and 24 percent were blocking Google’s, with legacy print publications more likely to block them than broadcasters or digital outlets, a report found. (Richard Fletcher / Reuters Institute)

- AT&T said it restored wireless service for affected US customers after a cell phone outage. (Aditya Soni and David Shepardson / Reuters)

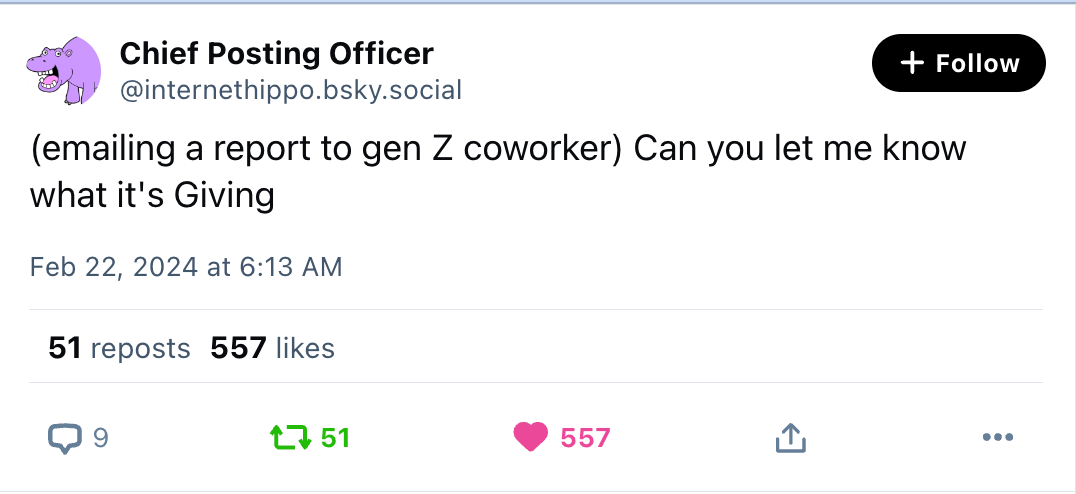

Those good posts

For more good posts every day, follow Casey’s Instagram stories.


Talk to us

Send us tips, comments, questions, and forbidden white men: casey@platformer.news and zoe@platformer.news.