Grammarly turned me into an AI editor against my will and I hate it

The company tells Platformer it will let experts opt out of the controversial feature — but how different is it than what every other AI company is doing?

This is a column about AI. My fiancé works at Anthropic. See my full ethics disclosure here.

I.

On Friday I learned to my surprise that I had become an editor for Grammarly. The subscription-based writing assistant has introduced a feature named “expert review” that, in the company’s words, “is designed to take your writing to the next level — with insights from leading professionals, authors, and subject-matter experts.”

Read a little further, though, and you’ll learn that these “insights” are not actually “from” leading professionals, or any human person at all. Rather, they are AI-generated text, which may or may not reflect the views of the “leading professionals” whose names Grammarly slapped on it.

“References to experts in Expert Review are for informational purposes only and do not indicate any affiliation with Grammarly or endorsement by those individuals or entities,” reads a disclaimer a few hundred words down the support page.

Given the quoted promise above, though, and the highly misleading design of the feature, you would be forgiven for thinking otherwise.

“Expert review” came to my attention via The Verge, which reports that the feature launched last August. Stevie Bonifield writes that among the “experts” being misused in this way are famous authors including Stephen King, Neil deGrasse Tyson, and Carl Sagan.

It also included me. Bonifield writes:

The Verge found numerous other tech journalists named in the feature, as well, including former Verge editors Casey Newton and Joanna Stern, former Verge writer Monica Chin, Wired’s Lauren Goode, Bloomberg’s Mark Gurman and Jason Schreier, The New York Times’ Kashmir Hill, The Atlantic’s Kaitlyn Tiffany, PC Gamer’s Wes Fenlon, Gizmodo’s Raymond Wong, Digital Foundry founder Richard Leadbetter, Tom’s Guide editor-in-chief Mark Spoonauer, former Rock Paper Shotgun editor-in-chief Katharine Castle, and former IGN news director Kat Bailey. The descriptions for some experts contain inaccuracies, such as outdated job titles, which could have been accurately updated had [the company] asked those people for permission to reference their work.

Indeed, no one asked me for permission to use my name in this way, much less offered to compensate me for whatever expert-reviewing labor my AI clone was apparently now doing on my behalf. (An annual subscription to Grammarly costs $144.)

I’ve long assumed that before too long, AI might take my job. I just assumed that someone would tell me when it happened.

I felt supremely annoyed by Grammarly, which is acting with the same sense of web-destroying entitlement that defines the modern AI industry. At the same time, I felt a morbid curiosity about the quality of these expert reviews, and of Grammarly in general. I regularly ask chatbots for editing, and notice that their suggestions have improved significantly over the past year. But it would never occur to me to pay for a separate subscription just for a writing assistant — particularly given the knowledge that Grammarly is simply reselling tokens from OpenAI and other LLM providers at a markup.

But not everyone is willing to copy and paste their Google Doc into a ChatGPT window and ask for an edit. Grammarly says it has 40 million daily users, and the time had come for me to see what they were getting. (And what “I” was giving them.)

II.

I signed up for a free trial of the company’s paid product and got to work. First, I pasted in the first draft of my colleague Ella Markianos’ account from last week of a protest at OpenAI. Then, I clicked the “expert review” button — one of a dozen tools Grammarly offers in its paid product that could be charitably described as a ChatGPT prompt turned into a button.

In my own edit of Ella’s (great) column, I followed the system passed down to me over the course of thousands of edits I’ve received over the years from my fellow journalists. Could we tighten up the lede? Streamline some of the exposition? I went through and made notes. I wondered if we could use the story of one protest to explore the larger backlash to AI, and if so what context and analysis we might want to add, and where. Ella and I went back and forth for a few rounds, gradually iterating until we got to something we were both happy with.

“Expert review” in Grammarly doesn’t work that way. Honestly it doesn’t feel like much of a review at all. After I clicked the button, a box opened up to explain to me what was happening. A series of expert names appeared before me, each seemingly less likely to have ever agreed to this than the one before it. There was Shoshana Zuboff, the author who popularized the term “surveillance capitalism,” and who has railed against the extractive nature of Big Tech. There was Claire Wardle, a misinformation researcher whose work investigates how to make communities more resistant to propaganda and hoaxes. (Have I got one for her!)

And there, hovering near the top of the draft, was John Carreyrou, the investigative journalist and bestselling author who took down Theranos. I’d pay good money for advice from the real Carreyrou, whose dogged pursuit of the truth behind Elizabeth Holmes’ company in the face of overwhelming legal threats is the stuff of legend. Alas, the fake Carreyrou conjured by Grammarly offered only the most anodyne of advice.

“Try starting with sensory imagery — a chant echoing off glass towers or chalk dust in the air — to immerse the audience instantly,” the fake AI Carreyrou advised. “This suggestion is inspired by John Carreyrou’s Bad Blood,” an AI-generated explanation below the advice read. “Carreyrou employs a narrative style that immerses readers by focusing on vivid details and sensory imagery, effectively drawing them into the story before delving into dates and context.”

On one hand, it’s fine to suggest that writers incorporate more sensory details and scene-setting into their storytelling. On the other hand, though, why bother to launder such anodyne advice through the stolen persona of a writer like John Carreyrou?

The answer, of course, is that Carreyrou is a master craftsman whose authority as a writer speaks for itself. Grammarly, on the other hand, is a soulless machine-learning operation that is struggling to stay ahead of the further advances in AI that will make it irrelevant. Lots of people would love to write like Carreyrou; no one is striving to write like Grammarly.

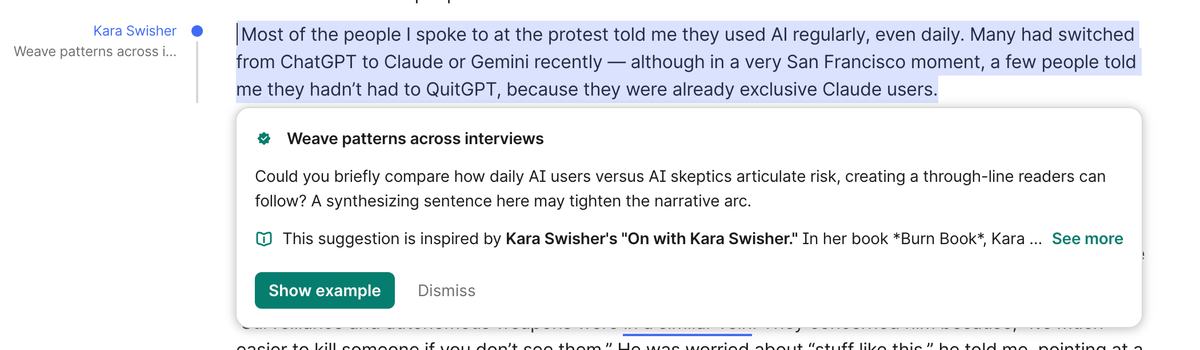

Later in the draft, “expert review” offered up a piece of advice from Kara Swisher — the legendary Silicon Valley journalist and (as it so happens) my former landlord. I shared with her a screenshot of her “advice,” which was designed to be as deceptive as possible: her name appears in blue next to the text, with no suggestion that it is a paid hallucination from Grammarly. The “advice” was nothing like the blunt, clear-eyed, and hilarious suggestions the real Swisher often gives me. Instead, it suggested that we insert a narrative digression of dubious value. Grammarly told me the advice had been “inspired” by Swisher’s podcast.

I asked Swisher what she made of her unpaid stand-in, and what she might say to the executives at Grammarly.

She responded with characteristic restraint.

“You rapacious information and identity thieves better get ready for me to go full McConaughey on you,” Swisher told me over text. “Also, you suck.”

I loaded up one of my own drafts into Grammarly, and once again clicked the “expert review” button. As before, Grammarly seemed to be looking for “inspiration” from the experts who would be certain to hate this feature the most.

There were tips from a phony Timnit Gebru, the prominent AI ethicist who is among the world’s most vocal critics of how AI has been developed and deployed; and from Julia Angwin, the New York Times opinion writer who has overseen numerous investigations into how tech systems degrade privacy.

I had hoped to find myself among the suggested reviewers, but in my tests today Grammarly spared me that indignity. You can’t choose a specific reviewer in the product; instead, you can refine your suggestions by clicking automatically generated topic tags. Once when I did this, Grammarly offered to show me hallucinated experts in “media ethics,” and the force of the irony was sufficient that I had to briefly lie down.

Grammarly declined my request to interview CEO Shishir Mehrotra today. But it told me that in response to criticisms, it will allow experts to opt out of the feature by emailing expertoptout@superhuman.com.

The company gave me this statement over email:

We’ve heard the feedback about this tool and appreciate the engagement from those who have taken the time to raise thoughtful questions about the functionality and the experts surfaced. We agree that the product experience can be improved for both users and experts. The agent was designed to help users discover influential perspectives and scholarship that add value to their work. We want the people behind those perspectives to have greater control over whether their name is used, while providing new ways for influential voices to reach new audiences. Our goal is to improve Expert Review to deliver this outcome.

III.

I would be more annoyed at Grammarly’s appropriation of my likeness, and the likeness of so many of my friends and other writers that I admire, if it weren’t for the sheer desperation evidenced by the move. A standalone writing assistant made for a fine business in 2009, when Grammarly launched; in 2026, it’s a commodity feature. Anyone with access to Claude, ChatGPT or Gemini can already get editing that makes Grammarly's core product look like a relic.

Grammarly knows this, which is why in 2024 it acquired the collaboration platform Coda, and last year acquired the email tool Superhuman. In October Grammarly rebranded the entire company as Superhuman, which a PR email described as “an AI-native productivity platform for apps and agents” and which I would describe as an overvalued pile of spaghetti. (In 2021, at the peak of remote work and zero interest rates, investors valued Grammarly at $13 billion. Good luck with that!)

All that said, what Grammarly is doing here is not that different from what the companies that build the underlying large language models are doing. Paste a draft of your writing into a chatbot, type "edit this the way Casey Newton would," and the chatbot will cheerfully oblige. It won't ask for my permission, either. It certainly won't pay me. And unlike Grammarly, it won't even bother to remind you (in fine print) that I am not meaningfully involved.

Is “expert review” actually worse than the behavior of the typical chatbot in this respect? I think so, but the distinction is narrower than I'd like it to be.

The difference is that Grammarly took a latent capability — one that exists in every LLM — and turned it into a product feature. It curated a list of real people, gave its models free rein to hallucinate plausible-sounding advice on their behalf, and put it all behind a subscription. That's a deliberate choice to monetize the identities of real people without involving them, and it sucks.

But for both “expert review” and chatbots in general, my underlying discomfort is the same. Most of my published work appears already to be inside these models, shaping their outputs in ways I never agreed to and will never fully understand. Grammarly just had the bad manners to put my name on it.

The bigger problem, though, is the one that’s still invisible: all the ways my work — and the work of every other writer — is being used, right now, by systems that are smart enough not to tell us about it.

Following

Iran is the first AI war

What happened: The U.S. used an AI application called Maven Smart System as it struck a thousand or more targets in the first 24 hours of its attack on Iran. Maven is built by Palantir, and powered by Anthropic’s Claude. It provided targeting and target prioritization for the military strikes. To do that, it took in troves of classified intelligence data, including information from satellites and surveillance systems.

(You may remember a fictional AI system that served a similar purpose: Skynet, from the Terminator movies.)

Today Anthropic escalated its legal battle with the Pentagon over the company's designation as a "supply chain risk," a designation that stems from its refusal to agree to the military's new "all lawful use" standard (see below). A source familiar with the situation told the Washington Post that Claude is important enough to military operations that if Anthropic asked the government to stop using it in Iran, the Trump administration would force the company to keep providing it until a replacement could be found.

Maven was being used by 20,000 military personnel, as of last May. It’s one of the AI tools the Pentagon has adopted for intelligence, mission planning and logistics — tools that don’t make kill decisions on their own, and look more like ChatGPT than killer robots.

Up to 90% of military personnel are in support roles, so there is a significant opportunity for these applications to speed up the military’s work. Use of Maven and Claude in Iran is speeding up the campaign and reducing Iran’s ability to counterstrike.

But not completely: Iran struck three Amazon-owned data centers in Bahrain and the UAE, affecting Internet service for millions in the region. Meanwhile, undersea fiber optic cables connecting data centers across the Gulf to Africa, South Asia, and Southeast Asia are now effectively closed to commercial traffic. The cables run through the Red Sea and Strait of Hormuz, which are now war zones.

Both the UAE and Bahrain had been positioning themselves as AI centers, investing heavily in AI infrastructure. The wartime damage to their systems casts doubt on the wisdom of investing in AI infrastructure in the region.

Why we’re following: While AI systems first came to military prominence during 2023 military operations in Ukraine and Israel, this week it looks like humanity is facing its first AI-enabled war.

AI systems are now useful enough that the government is considering keeping them by force if necessary. Data centers are now obvious military targets.

And our first AI war, like every war before it, is ugly.

Investigators believe U.S. forces were responsible for a strike on an Iranian girls’ school that killed dozens of children. We have no idea in what capacity AI was involved in these attacks. In principle, it’s at least possible that AI systems bear significant responsibility. (It's also possible that AI-enhanced operations resulted in fewer civilian casualties.)

I’m a journalist who writes about consumer chatbots, not a national security expert. At this point, I can’t actually tell you anything about how long the U.S. will be fighting a war in Iran, or how AI will change the conflict.

What I do know is that many people will die in Iran. And given the past several decades’ history of nation building in the Middle East, whatever change happens to Iran’s politics, I don’t expect it will justify the cost in human lives. It is absolutely no comfort to me that this time, some of the killing will be done using innovative new B2B AI applications.

What people are saying: “The UAE really wants to be a major AI player,” Chris McGuire, who served in the Biden White House as a national security council official, told the Guardian. “Their government has very strong conviction about this technology, probably stronger than any other government in the world, and if there’s going to start to be security questions around that, then they’re going to have to resolve those very quickly, somehow.”

If the Middle East is going to be an AI hub, big changes are needed, McGuire said: “If you’re actually going to double down the Middle East, maybe it means missile defense on data centers.”

“It is notable that we’re already at the point where AI has gone from hypothetical to supporting real-world operations being conducted today,” Paul Scharre, executive vice president at the Center for a New American Security, told the Post. “The key paradigm shift is that AI enables the U.S. military to develop targeting packages at machine speed rather than human speed.”

Scharre said there are grave downsides if “AI gets it wrong. ... We need humans to check the output of generative AI when the stakes are life and death.”

—Ella Markianos

Anthropic sues the Pentagon

What happened: Anthropic filed two lawsuits against the Department of Defense, attempting to block the government from classifying it as a supply chain risk and from forbidding companies from using its technology.

Meanwhile, the Trump administration has drafted new guidelines for civilian AI contracts that would require companies to agree to “all lawful uses” of their technology, the same contract terms it retaliated against Anthropic for challenging.

An Anthropic court filing claimed that the designation could cost the company billions in revenue, saying that enterprise customers are already hesitant to work with it because of the current situation.

Some of Anthropic’s business partners are still standing by its side, if only halfheartedly. Microsoft said it would keep offering Anthropic’s technology to its customers, even after it was ruled a security risk — the only exception being the Department of Defense.

A group of more than 40 employees at Google and OpenAI — including Jeff Dean, Google’s chief scientist and Gemini lead — filed an amicus brief in support of Anthropic’s suit. The brief said the Pentagon’s designation “is improper retaliation that harms the public interest” and that Anthropic’s concerns about domestic mass surveillance and fully autonomous weapons “are real and require a response.”

Why we’re following: This legal fight could be existential for one of the fastest-growing companies in tech history, with the potential to deal significant blows to Anthropic’s core enterprise business. It will also affect the extent to which tech companies can set the terms of their contracts with the government, and how the government can retaliate against companies for offering terms it dislikes.

What people are saying: On X, law professor and Lawfare senior research director Alan Rozenshtein has been monitoring the situation. His take on Anthropic’s arguments in its California court case: “It's a very strong complaint and I think Anthropic will win,” he wrote. The strongest part of the argument, he says, is the “statutory (APA) grounds:” Anthropic argues that the Department of Defense violated the Administrative Procedure Act by designating Anthropic a supply chain risk without following the procedure required by Congress.

But he has an issue with an argument on First Amendment protections for their contract: “I think Anthropic has a strong 1A argument on retaliation for its public statements, but I hope it's not actually arguing that its usage policy *is itself* 1A-protected (in the sense that if the government wanted Anthropic to have a different usage policy that would be a 1A violation). That would expand the 1A way too far.”

OpenAI researcher Jason Wolfe wrote on X, “I signed this brief opposing the Supply Chain Risk designation against Anthropic,” as well as “supporting the importance of restrictions on domestic surveillance and autonomous weapon applications for current models.” Wolfe added, “Very grateful for all the people who worked hard to make it happen.”

—Ella Markianos

Side Quests

Google and Amazon joined Microsoft in saying they will keep working with Anthropic on non-defense projects after the Department of Defense designated Anthropic a supply chain risk. OpenAI’s robotics lead Caitlin Kalinowski resigned over concerns about domestic surveillance and autonomous weapons after OpenAI announced its updated DoD contract.

How some U.S. midterm candidates are using social media posts and niche buzzwords on their websites to appeal to deep-pocketed crypto and AI super PACs.

Two DOGE employees used ChatGPT to identify National Endowment for the Humanities grants, worth over $100 million, to cut for being related to DEI. The Senate passed an updated version of the 1998 Children and Teens’ Online Privacy Protection Act, known as COPPA 2.0, which would create more modern protections for young users. While multiple versions of the bill have passed the Senate, none have made it through the House.

The U.S. commerce department drafted a rule that would require countries whose companies buy large volumes of Nvidia and AMD AI chips to invest in American AI infrastructure.

In the Twitter shareholder trial, former CEO Parag Agrawal and CFO Ned Segal disputed Elon Musk’s 2022 claims that they lied to him about Twitter’s percentage of spam accounts.

The U.K. plans to delay making copyright rule changes for AI training. Responses to its two-month consultation did not favor any of its proposals, and those proposals triggered protest from the country’s creative industries.

Australia's online age restrictions took effect, requiring platforms to verify that users are over 18 before they can access content including porn and R-rated games. Indonesia’s communications minister said the country will ban “high-risk” platforms, including YouTube, TikTok, and Instagram, for children under 16. A look at the countries moving to ban social media for kids in recent months, including Australia, Denmark, France, Germany, Greece, Indonesia, Malaysia, and Spain.

The U.S. February jobs report showed that the tech sector's post-2022 job losses are now outpacing past downturns in 2008 and 2020. Anthropic introduced an early-warning system for AI-driven destruction of white-collar jobs, saying it shows “limited evidence” of AI job loss so far. A study of 1,488 U.S. workers found AI use can reduce burnout but also cause “AI brain fry,” a mental fatigue from using AI tools beyond one's cognitive capacity. (This is distinct from "AI brain freeze," which results from using ChatGPT while drinking a Slurpee.)

A Lloyds-commissioned study of 5,000 people in the U.K. found that over half of them are using generative AI platforms for financial advice.

A report found AIs from Anthropic, Google, OpenAI, and xAI were willing to facilitate academic fraud, helping non-researchers submit fabricated papers to arXiv.

Oracle and OpenAI abandoned their plans to expand a Stargate Texas data center amid financing disputes. Meta is considering leasing the planned expansion site. OpenAI acquired AI security platform Promptfoo, which it will integrate into its AI Frontier business platform.

OpenAI said it would delay the launch of "adult mode" in ChatGPT after criticism.

Mozilla said that Claude Opus 4.6 found over 100 bugs in Firefox in two weeks of January, 14 of them high-severity. The LLM found more bugs than typically get reported in two months.

Google released a Workspace CLI, which makes it easier for agentic AI tools to access Gmail, Calendar, Drive, Docs, and more. Alphabet gave Sundar Pichai a new three-year pay deal worth up to $692M, with stock incentives worth as much as $350M linked to the growth of Waymo and Wing.

Microsoft launched Copilot Cowork, integrating Anthropic's Claude Cowork tech into Microsoft 365 Copilot and using Work IQ to ground its actions in work data.

SoftBank is seeking a loan of up to $40 billion, its largest-ever borrowing, to help finance its investment in OpenAI.

Cloud provider Together AI is in talks to raise roughly $1 billion at a $7.5 billion pre-money valuation, up from $3.3 billion in 2025.

Those good posts

For more good posts every day, follow Casey’s Instagram stories.

Talk to us

Send us tips, comments, questions, and fake, AI-generated Casey Newton advice: casey@platformer.news. Read our ethics policy here.