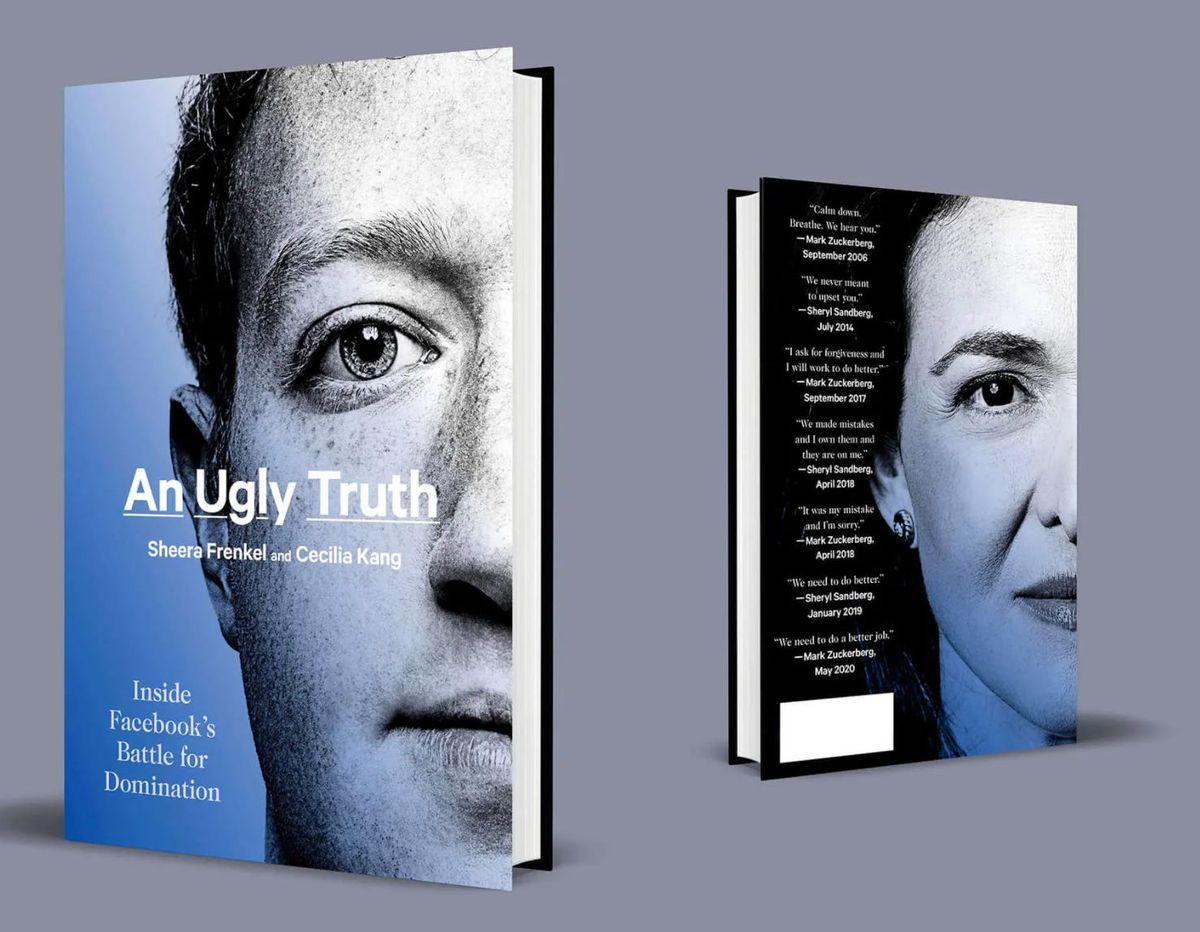

An Ugly Truth: Inside Facebook's Battle for Domination, reviewed

Three ways of looking at a Facebook scandal

I. The CSO and The Wire

As the second season of the great 2000s crime drama The Wire begins, Baltimore police detective Jimmy McNulty finds himself on a boat. After spending the previous season sounding the alarm about local drug kingpin Avon Barksdale, to the indifference and annoyance of his various superiors, McNulty is exiled to the city’s marine unit. (His bosses have learned he gets seasick, and punish him accordingly.) The special investigation team he was part of is forcibly disbanded; drug dealing in Baltimore continues unchecked.

Something similar happens to Alex Stamos, the non-fictional former chief security officer of Facebook, in the wake of his 2016-17 investigation into Russian interference on the platform. In An Ugly Truth: Inside Facebook's Battle for Domination, authors Sheera Frenkel and Cecilia Kang detail the company’s dawning awareness of the foreign adversaries in its midst. By the time it’s all over, Donald Trump will be president, and Facebook’s halting response will have triggered an international reckoning over the size and power of technology platforms.

The broad outlines of this story are well known, and have been told on a regular basis ever since, including in this column. (The aftermath of the 2016 election, and Facebook’s role in it, were the original inspiration for this newsletter.) The value in An Ugly Truth comes from the detail it brings to the Russia investigation as it was experienced by some of its participants at the time. And while I ultimately come to some different conclusions than the authors do, the book is worth reading for everyone interested in social networks, trust and safety, and cybersecurity. (And, of course, for anyone else like me who is fascinated by Facebook history.)

First, some disclosures: Frenkel and Kang have been colleagues of mine on the Facebook beat for years, and I’ve long been a fan of their work. I’m also friendly with Stamos. No reporter can resist a loudmouth who always gets in trouble with his bosses for telling them things they don’t want to hear, since this is secretly how we all think of ourselves.

Stamos arrived at Facebook in September 2015 following a high-profile stint at Yahoo, where he discovered a vulnerability in Yahoo Mail that allowed the US government to monitor the messages of its users. The vulnerability had been approved by then-CEO Marissa Mayer; Stamos quit a few weeks later on principle.

There were actually two separate Russian campaigns on Facebook during the 2016 election. The first came from Russia’s military intelligence agency, the GRU. Facebook first discovered GRU activity in March 2016, according to a postmortem circulated internally the following year. GRU agents made fake Facebook accounts and pages, and used them to spread disinformation and false news. Stamos’ team shared reports on what it found with the FBI — it heard nothing back from the agency — and also with his direct supervisors.

Facebook was slow to remove some of what it found, in part because at the time it had no rule against foreign groups setting up groups and pages to manipulate American opinion. After Facebook became aware that a Russian-operated page known as DCLeaks was distributing stolen emails from the Clinton campaign to journalists on the platform, the company initially took no action. Only after a security analyst found that the documents contained personal information — a clear violation of Facebook rules — was DCLeaks banned.

But even as the company investigated, it said nothing publicly. The authors write:

Within the threat intel group, there was debate over what should be done. Facebook was a private company, some argued, not an intelligence agency; the platform was not duty-bound to report its findings. For all Facebook knew, the National Security Agency was tracking the same Russian accounts the company was seeing, and possibly planning arrests. It might be irresponsible for Facebook to say anything. Others argued that Facebook’s silence was facilitating Russian efforts to spread the stolen information. They said the company needed to make public that Russia-linked accounts were spreading hacked documents through Facebook. To them, the situation felt like a potential national emergency. “It was crazy. They didn’t have a protocol in place, and so they didn’t want us to take action. It made no sense,” one security team member said. “It felt like this was maybe a time to break precedent.”

While Facebook considered what to do about the Russians’ hack-and-leak campaign, a separate influence operation was unfolding under its nose. The Internet Research Agency, a “troll farm” that the New York Times had first profiled in 2015, was running its own attack. It was discovered only after the election, in 2017, when a senator alerted Facebook officials to its existence. A subsequent Facebook investigation found that the IRA had published 80,000 posts, spent $100,000 on 3,300 advertisements, and reached as many as 126 million Americans.

That investigation had been undertaken by Stamos’ team without the knowledge of Facebook CEO Mark Zuckerberg or COO Sheryl Sandberg — this is the moment in the book that I began to see him as Jimmy McNulty — and Frenkel and Kang report that this put him in a tenuous position. Tensions spilled out during a presentation of the team’s findings to Zuckerberg and his leadership team in December 2016, a month after the election:

His investigation could expose the company to legal liability or congressional oversight, and Sandberg, as the liaison between Facebook and Washington, would eventually be called to DC to explain Facebook’s findings to Congress. “No one said the words, but there was this feeling that you can’t disclose what you don’t know,” according to one executive who attended the meeting. Stamos’s team had uncovered information that no one, including the U.S. government, had previously known. But at Facebook, being proactive was not always appreciated. “By investigating what Russia was doing, Alex had forced us to make decisions about what we were going to publicly say. People weren’t happy about that,” recalled the executive. “He had taken it upon himself to uncover a problem. That’s never a good look,” observed another meeting participant.

Stamos would spend the next year managing the fallout of the revelations, and putting together various plans to restructure Facebook’s security operations. One of his ideas was to embed security people throughout the organization, rather than silo them from the rest of Facebook. The company accepted this idea, but it wound up leaving him like McNulty on the boat, a man without a country:

When he returned to work in January, the security team of over 120 people that he had built was largely disbanded. As he had suggested, they were moved across to various parts of the company, but he had no role, or visibility, into their work. Instead, he was left in charge of a whittled-down team of roughly five people.

Stamos left Facebook in the summer of 2018 to create the Stanford Internet Observatory, where he built a team that analyzes influence operations on social platforms around the world.

II. Imagination vs. bureaucracy

What lessons have platforms learned, if any, since 2016? Facebook massively expanded its team devoted to “platform integrity,” which monitors for Russia-style campaigns, and publishes monthly reports on the networks of adversaries that it disrupts around the world. (These reports have become so routine that they now typically get little coverage beyond Facebook’s own blog posts on the subject.)

“Since 2017, we have removed over 150 covert influence operations originating in more than 50 countries,” Facebook told me today, “and a dedicated investigative team continues to vigilantly protect democracy on our platform both here and abroad.”

Meanwhile, disinformation in US elections has become a primarily domestic concern, driven by politicians like former President Donald Trump, who spread lies with impunity and was often rewarded for it by his voters.

The Wire is a show about how people are shaped by the systems that they work within, and An Ugly Truth offers a story like that, too. Facebook’s police found plenty of suspicious activity while out on patrol, but they struggled to make an airtight case, to say definitively what they were looking at. Amid that uncertainty, the almighty inertia of bureaucracy took over: Who do we tell? What do they do with what we tell them? Who is ultimately responsible? And in the confusion, Russia’s campaign succeeded.

At the same time, the story of the 2016 election is much bigger than Russia and Facebook. It’s a story about the accelerating polarization of our country; white anxiety over demographic changes; the decline of local journalism; and the fracturing of our broader information ecosystem. Even the Russia story was not simply about Facebook: the country pioneered the strategy of “hack and leak” — stealing documents and sharing them with mainstream news outlets while concealing their origin, giving those documents greater legitimacy when they were published.

Facebook had many failures during 2016, but the greatest one was of imagination: an inability to see that a platform that had gathered together billions of people would create a powerful point of leverage for foreign adversaries seeking to reshape public opinion — and to benefit from the platform’s own viral sharing mechanics.

Facebook has since learned that lesson; it has never been harder to set up a network of fake accounts on the platform and keep it operational. And yet just because Facebook is less vulnerable to attack from state actors doesn’t mean its current information environment is good or healthy. High-quality news is too often relegated to a secondary tab, while depending on your friend and follow graph, your News Feed could be as dumb, partisan, and polarizing as ever.

What will be the result of people consuming years of feed posts that misinform them, or lead them to outrage? Who is the McNulty inside Facebook empowered to run that investigation?

It feels like there are some failures of imagination there, too.

III. Scandals

While the Russia story is at the heart of An Ugly Truth, the book also serves as a kind of compendium of Facebook’s highest-profile scandals over the years. Searching for a connecting thread, the authors attribute the company’s history of public relations crises to its capitalist origins: “the platform is built upon a fundamental, possibly irreconcilable dichotomy: its purported mission to advance society by connecting people while also profiting off them.”

Certainly, Facebook triggered several crises as a direct result of pursuing growth and profits above all else: the initial launch of the News Feed; the Beacon advertising disaster; setting posts to public by default in 2009; and most damningly, the tragic, heedless expansion into a genocidal Myanmar, where Facebook initially had only a single moderator who spoke Burmese.

Other topics covered here, though, don’t fit quite as squarely into that narrative. The company’s use of human moderators to select “trending topics” for the feed, which is given much space in the book, actually improved Facebook by keeping misinformation out of sight. To the extent it was a scandal, it was only because selecting for high-quality news disadvantaged Republican outrage bloggers.

In other cases, the company faces blowback because it exercises its right to moderate content as it sees fit: leaving up a manipulated video of Nancy Pelosi, say, or defending a decade-old policy permitting Holocaust deniers on the platform. (That policy was reversed last year.) Whatever you think of those decisions, they don’t strike me as capitalist growth hacks.

To me, the most alarming Facebook scandals have always been the ones that reflect the company’s ability to affect human psychology, typically without our knowledge. Like the time in 2012 that the company experimented with altering users’ moods by showing them happy or sad posts in the News Feed. Or the revelation that Cambridge Analytica used Facebook data to create “psychographic profiles” of users in hope of better targeting them with ads to benefit the Trump campaign. (This effort seems to have been a total failure, but the fact that this even seemed possible fueled much of the outrage toward that company and Facebook.)

You can look at the story of Russian interference on Facebook as a growth-and-profits scandal, I guess, if you want to suggest it happened only because the company under-invested in security and moderation, which everyone at Facebook now acknowledges it did. But to me this one falls in that last camp: the human psychology one. I’m ultimately less worried that it took Facebook so long to find the Russians than I am about what it was possible for them to do using Facebook. After all, the same viral machinery they used so successfully in 2016 is still being used every day, by Americans against other Americans and by various foreign governments against their own citizens.

To me, that’s not a story about the business model being “broken,” as is so often said about the company. If Facebook had switched off ads in 2015 and become a nonprofit organization, the vast majority of the 2016 Russian influence operation still would have been possible. To me, this is a story about a social network that is massive, powerful, mostly unregulated, and by virtue of its data being privately owned, still poorly understood. In 2021, Facebook now has the tools for dealing with foreign adversaries. But Americans still don’t have many tools for dealing with Facebook.

So by all means, let’s keep debating those bills now before Congress. Let’s pass a national privacy law. Let’s scrutinize future mergers and keep an eye on Facebook’s VR ambitions. And let’s invest in public social media — and public media in general — to see what kinds of good we can do online when we remove the profit motive.

But let’s also not allow ourselves the comforting delusion that the problems in our information environment begin and end in Menlo Park. All year now, cyberattacks have been ravaging our country, many of them appearing to originate in Russia. That’s a different story from the one told in An Ugly Truth, but it is also part of a single story, which is that for more than five years now, various parts of our critical internet infrastructure have been under attack. Until very recently, thanks to the president they helped elect, Russia has suffered very few consequences for its actions. And among all the ugly truths on parade here, that might be the one that baffles me most of all.

The Ratio

Today in news that could affect public perception of the big tech companies.

⬇️ Trending down: Twitter accidentally verified six bot accounts. “The accounts shared nearly all the same followers and had not made a single tweet.” (Mikael Thalen / Daily Dot)

Governing

⭐ Google was fined $593 million for allegedly failing to negotiate “in good faith” with French publishers over the inclusion of their articles on the platform. It’s the latest international conflict between Google and the journalism industry; the company can appeal. Here’s Gaspard Sebag at Bloomberg:

The Alphabet unit ignored a 2020 decision to negotiate in good faith for displaying snippets of articles on its Google News service, the Autorité de la concurrence said Tuesday. The fine is the second-biggest antitrust penalty in France for a single company. […]

Google is “very disappointed” with the decision and considers it “acted in good faith throughout the entire process,” a spokesperson said. Google added that it’s about to reach an agreement with Agence France-Presse that included a global licensing agreement.

Related: Google intends to fight a $5.15 billion antitrust fine from the European Union at a September hearing. (Foo Yun Chee / Reuters)

Access to social networks including Facebook and WhatsApp has been restricted in Cuba amid growing protests against the government. “NetBlocks metrics show that communications platforms WhatsApp, Facebook, Instagram and as well as some Telegram servers are disrupted on government-owned ETECSA.” (NetBlocks)

Individual vloggers who may be affiliated with the Chinese Communist Party are promoting CCP narratives on YouTube. “Many of these YouTubers have hundreds of thousands of subscribers, and their videos are fiercely promoted and commented on by nationalist users.” (Kerry Allen & Sophie Williams / BBC)

A look at the shrinking influence of the Internet Association, which for years served as a primary trade association for Google, Facebook, Amazon and others. The decline of the 9-year-old group reflects both internal dysfunction and the emergence of factions within the platforms, observers say. (Emily Birnbaum / Politico)

Industry

Google slashed its revenue share for Stadia games to 15 percent on the first $3 million in revenue. Google’s video-game streaming service has struggled to gain traction among developers or users to date; we’ll see if this changes anything. (Kyle Orland / Ars Technica)

Google will now enforce a 60-minute time limit on Meet calls for free accounts. Like anyone wants to videoconference for more than an hour at a time anyway. (Abner Li / 9to5Google)

Google adopted the BIMI standard for Gmail, allowing authenticated companies to display verified logos inside their messages. Would love it if I could use this feature to improve deliverability of Platformer! (Catalin Cimpanu / The Record)

Instagram is testing a feature that shows users recently viewed stories and invites them to re-share them to their feed along with a sticker. “The new reshare Sticker adds a new way for people to contextualize content they’re resharing and makes those posts feel a bit less static (think retweets with comment rather than straight up retweeting a stranger into your feed).” (Taylor Hatmaker / TechCrunch)

Discord bought Sentropy, a firm that works to prevent online harassment. The company’s existing products will wind down, and it will be integrated with Discord’s trust and safety team. (Sentropy)

Amazon’s Ring surveillance network is rolling out end-to-end encryption. Using this (opt-in) feature will prevent recorded footage from being used by law enforcement. (Jay Peters / The Verge)

Facebook has a growing interest in the creator economy, but the platform’s mechanics have historically made it difficult for individuals to grow their brands. With a new focus on Reels and Bulletin, though, the company hopes to change that perception. (Mike Isaac and Taylor Lorenz / New York Times)

Search Atlas is a tool that shows you Google results from three different countries at once. The results can sometimes display dramatic differences revealing local norms and preferences. (Tom Simonite / Wired)

Apple’s weather app refuses to display 69 degrees. “Apple may be sourcing data for its iOS Weather app in Celsius and then converting it to Fahrenheit. For example, 20 degrees Celsius converts to 68 degrees Fahrenheit, while 21 degrees Celsius converts to 69.8 degrees Fahrenheit — which rounds up to 70 degrees Fahrenheit.” (Chaim Gartenberg / The Verge)
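For the curious, the arithmetic in that quote is easy to check. The sketch below assumes, as the article speculates, that the source data arrives in whole degrees Celsius and is rounded to the nearest whole degree after conversion; the function names are made up for illustration, and none of this is Apple's actual code.

```python
def reachable_fahrenheit(c_min, c_max):
    """Whole-degree Fahrenheit readings you can get by converting
    whole-degree Celsius values and rounding to the nearest degree.

    Assumption: rounding to nearest, as the quoted explanation implies.
    """
    return {round(c * 9 / 5 + 32) for c in range(c_min, c_max + 1)}


def skipped_fahrenheit(c_min, c_max):
    """Fahrenheit readings inside the converted range that never show up."""
    shown = reachable_fahrenheit(c_min, c_max)
    return [f for f in range(min(shown), max(shown) + 1) if f not in shown]


if __name__ == "__main__":
    # 15 to 25 degrees Celsius covers 59 to 77 degrees Fahrenheit.
    print(skipped_fahrenheit(15, 25))
```

Under that assumption, 69 is one of several readings that can never appear: each Celsius step is 1.8 Fahrenheit degrees wide, so some whole numbers get jumped over.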

Those good tweets

you ok babe? you haven’t built your personal brand all day

— taimur (@taimurabdaal) 4:11 PM ∙ Jul 10, 2021

Guys I found it

— Eric von Otter 💉x1 (@ericvonotter) 2:45 PM ∙ Jul 11, 2021

— Deleted Tweets (@TweetNotLoading) 6:31 PM ∙ Jul 9, 2021

literally congrats everybody

— character actress lana schwartz (@_lanabelle) 10:29 PM ∙ Jul 9, 2021

Talk to me

Send me tips, comments, questions, and Russian interference: casey@platformer.news.