Before Uvalde, a platform fails to answer kids' alarms

Tech companies keep building systems to detect violent threats. Why didn't Yubo's work?

A week ago today, an 18-year-old man walked into an elementary school in Uvalde, Texas, and committed the latest in our nation’s never-ending series of senseless murders. And in the aftermath of that horror — 19 children dead, two teachers dead, 18 more injured — attention once again turned to what role platforms might have played in enabling the violence.

This question can feel both urgently necessary and also somehow beside the point. Necessary because people (often teenagers) are constantly being arrested after making threats on social media, and the Uvalde case shows once again why those threats must be taken more seriously.

And yet it’s also clear that America’s gun violence problem will not be solved at the level of platform policy or enforcement. It can be solved only by making it harder for people to acquire and use guns, particularly the assault weapons that figure in every single story like this one.

But around here we focus on platforms. And with that in mind, let’s take a look at what we’ve learned about the shooter’s online behavior in the week since the shooting. It speaks to issues around child safety and platforms that I’ve reported on here before — and points to some clear steps that platforms (and, if necessary, regulators) should take next.

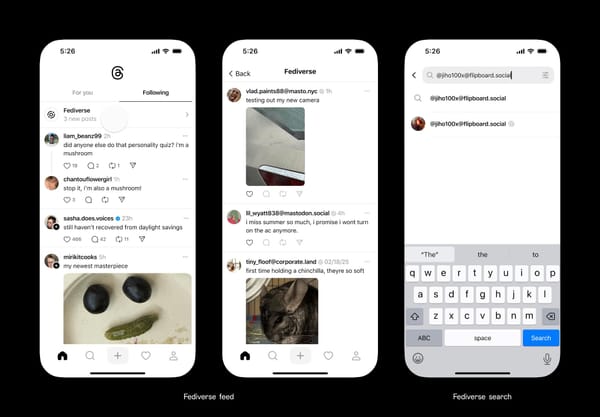

Aside from a handful of private messages, the Uvalde shooter appears not to have used Facebook much. Facebook and Instagram were once the default platforms for making threats like these, but new platforms are growing in popularity with young people. The Uvalde shooter liked one called Yubo, created by a French company called Twelve App. It’s a “live chilling” app similar to Houseparty, the app that Meerkat became after helping to launch the live-streaming craze in the United States in 2015.

It’s also apparently quite popular, with more than 18 million downloads in the United States alone, according to the market research firm Sensor Tower.

Like Houseparty, Yubo lets users broadcast themselves live to a small group of friends. The twist is that Yubo focuses on making new friends: finding people with similar interests and letting them chat. Particularly young people. “Yubo is a social live-streaming platform that celebrates the true essence of being young,” the company says. (Perhaps for that reason, it seems to have attracted more than its share of older men and their unwanted sexual advances.)

In the days after the massacre, reporters discovered that Yubo appears to have been the shooter’s primary social app. He used it, among other things, to threaten rape — and school shootings. Here are Daniel A. Medina, Isabelle Chapman, Jeff Winter and Casey Tolan at CNN:

Three users said they witnessed Ramos threaten to commit sexual violence or carry out school shootings on Yubo, an app that is used by tens of millions of young people around the world.

The users all said they reported Ramos' account to Yubo over the threats. But it appeared, they said, that Ramos was able to maintain a presence on the platform. CNN reviewed one Yubo direct message in which Ramos allegedly sent a user the $2,000 receipt for his online gun purchase from a Georgia-based firearm manufacturer.

At the Washington Post, Silvia Foster-Frau, Cat Zakrzewski, Naomi Nix and Drew Harwell found a similar pattern of behavior:

A 16-year-old boy in Austin who said he saw Ramos frequently in Yubo panels, told the Post that Ramos frequently made aggressive, sexual comments to young women on the app and sent him a death threat during one panel in January.

“I witnessed him harass girls and threaten them with sexual assault, like rape and kidnapping,” said the teen. “It was not like a single occurrence. It was frequent.”

He and his friends reported Ramos’s account to Yubo for bullying and other infractions dozens of times. He never heard back, he said, and the account remained active.

Yubo told CNN that it is cooperating with the investigation, but declined to offer any details on why the shooter was able to remain on the platform despite having been reported for making threats over and over again.

It can seem shocking that a person who repeatedly makes violent threats, and is reported for doing so to the platform, fails to see any consequences. And yet for years now, children have been telling us that this is a regular occurrence for them.

In May of last year, I wrote about a report based on a survey of minors by Thorn, a nonprofit organization that builds technology to defend children from sexual abuse. Here are two findings from that survey that are relevant to the Uvalde case, from my column about it:

- Children are more than twice as likely to use platform blocking and reporting tools as they are to tell parents and other caregivers about what happened: 83 percent of 9- to 17-year-olds who reported having an online sexual interaction reacted with reporting, blocking, or muting the offender, while only 37 percent said they told a parent, trusted adult, or peer.

- The majority of children who block or report other users say those same users quickly find them again online: More than half of children who blocked someone said they were contacted again by the same person, either through a new account or a different platform. This was true both for people children knew in real life (54 percent) and people they had only met online (51 percent).

In short: most kids use platform reporting tools instead of telling parents or other caregivers about threats online, but in most cases those reporting tools aren’t effective. In our interview last year, Julie Cordua, Thorn’s CEO, likened platform reporting tools to fire alarms that have had their wires cut. In the Uvalde case, we see what happens when those alarms aren’t connected to effective enforcement mechanisms.

If there’s any room for optimism here, it’s in the fact that criminals really do seem to be moving away from better-defended platforms to ones that are less established — and, in some cases, have fewer policy and enforcement tools. Surely part of that is simply evidence of changing tastes — Discord and Twitch are much more popular with the average teenager today than Facebook or perhaps even Instagram is.

But part of it is also that Meta, YouTube, and Twitter in particular have invested heavily in content moderation, making it harder for bad actors to make threats with impunity and evade bans. That speaks to the value of content moderation, to both companies and the world at large.

Peruse Yubo’s website and history and you will see a company that appears to be committed to good stewardship. The app has clearly posted community guidelines, albeit ones that have not been updated since 2020. It has a policy on ban evasion. And it uses facial-recognition technology in an effort to prevent users younger than 13 from signing up.

The company also says that it uses machine learning to scan live streams in an effort to find bad behavior, and scans text messages to look for private information that users might be about to share unwittingly, such as phone numbers. These are good, useful, and expensive tools that many other platforms do not offer.

At the same time, these are voluntary measures in a world where regulators still have not established minimum standards for content policy, moderation, enforcement, or reporting what they find — aka “transparency.” We know that Yubo had a policy against basically everything the Uvalde shooter did. We know that kids saw what he was doing online, grew concerned, and used the app’s reporting tools to try to prevent it from happening in the future.

And, as is usually the case in these situations, we know nothing about what happened next. Were the reports reviewed? By humans or machines? What did they find?

Platforms that allow users to create accounts should be required to let people report those accounts for bad behavior. (Did you know you still can’t report an account on iMessage, one of the world’s biggest communications services?) Platforms should also be required to let us know what they do with those reports, both individually (to the person who reported it) and in the aggregate (so we can understand bad behavior on platforms overall).

Doing so will sadly do nothing to stop the epidemic of gun violence in this country. But it will make good on the promise that apps like Yubo are making to their users when they let them report bad behavior: that they will take action when they receive those reports, and work to prevent further harm.

Nobody forced Yubo to build the systems that Thorn’s Cordua rightly called “fire alarms.” But it did. The least that Yubo and other platforms can do now is offer us some evidence that those alarms are actually plugged in.

Elsewhere in bad vibes: How the right-wing misinformation machine is exploiting the shooting to promote false conspiracy theories. And here’s some dead silence from the firm hired by the Uvalde school district to monitor students for making threats on social media.

Governing

⭐ In a chillingly close 5-4 decision, the Supreme Court voted to stay the Texas social media law that would force platforms to carry hate speech and other harms. “Justice Alito said he was skeptical of the argument that the social media companies have editorial discretion protected by the First Amendment like that enjoyed by newspapers and other traditional publishers.” Fun! (Adam Liptak / New York Times)

- Anti-abortion activists are already collecting data they’ll need to prosecute people after the Supreme Court overturns Roe vs. Wade. (Abby Ohlheiser / MIT Technology Review)

- The Securities and Exchange Commission said it is investigating Elon Musk’s late disclosure of his purchase of Twitter shares in April. You would hope so! (Kate Conger / New York Times)

- Russia’s foreign minister said journalists from Western countries will be forced to leave the country if YouTube blocks access to the weekly briefings of its spokeswoman, Maria Zakharova. (Reuters)

- Apple lost in an effort to dismiss an antitrust lawsuit from rival app store maker Cydia, which is seeking access to the iPhone. (Mike Scarcella / Reuters)

- Connecticut is the first state to hire someone to combat election misinformation as part of a $2 million campaign to promote facts about voting. The successful candidate will be “expected to comb fringe sites like 4chan, far-right social networks like Gettr and Rumble and mainstream social media sites to root out early misinformation narratives about voting before they go viral, and then urge the companies to remove or flag the posts that contain false information.” (Cecilia Kang / New York Times)

- A look at how the defamation trial between Johnny Depp and Amber Heard took over social media feeds, with the most popular content mocking and disparaging Heard. Depp has “attracted the support of men’s rights activists, right-wing media figures, #BoycottDisney campaigners eager to capitalize off Depp’s status as a fallen Disney franchise star, sex abuse conspiracists, armchair true-crime detectives, anyone wary of ‘the mainstream media’ and plenty of opportunists eager to draft off the trial traffic.” (Amanda Hess / New York Times)

- How “do your own research” transformed from a warning against groupthink into a movement dedicated to rejecting institutions and expertise, particularly in crypto circles. (John Herrman / New York Times)

- Federal Trade Commission staffers can once again speak at most conferences and events after Lina Khan relaxed a ban that was crushing morale. (Josh Sisco / The Information)

Industry

- India’s social networking company ShareChat is valued at $5 billion after raising $300 million in fresh capital from Google and others. ShareChat’s short-form video app Moj has grown quickly since India banned TikTok. (Munsif Vengattil / Reuters)

- A fired Google AI researcher reportedly spent two years attempting to undermine the findings of two fellow researchers who helped improve the company’s chips using artificial intelligence. (Tom Simonite / Wired)

- A look at the growing role Discord plays in the lives of musicians, who use it to build community and interact with fans. (Cat Zhang / Pitchfork)

- Foursquare founder Dennis Crowley announced LivingCities, which plans to build “a ‘social layer’ for consumers based around interacting with virtual spaces that capture the ‘spirit’ of real-life geographies and cities.” It has raised $4 million; co-founder Matt Miesnieks recently sold his previous startup to Niantic. (Lucas Matney / TechCrunch)

- A look at the rise of surveillance software in classrooms, which often falsely accuses students of cheating based on opaque algorithms. (Kashmir Hill / New York Times)

- Amid a glut of messaging apps and notifications, it’s time to bring back the universal away message. (Lauren Goode / Wired)

- An always-on lock screen is coming to the iPhone. Finally, a way to spend more time with my notifications. (Mark Gurman / Bloomberg)

- Finally, I went on Eric Newcomer and Tom Dotan and Katie Benner’s podcast to talk about Substack’s fundraising travails. (Dead Cat Show)

Those good tweets

I just know Amazon drivers be like.. THIS HOUSE AGAIN ???

— bandit 👼🏿 (@kinkyybandit) 5:16 PM ∙ May 26, 2022

Kid at the park just told me it's her birthday today. I asked her how old she is and she said five and a half. Story absolutely crumbling

— ben flores redemption arc (@limitlessjest) 6:34 PM ∙ May 27, 2022

I asked my mom how her first date went with a guy she met on eharmony and she said “let’s just say we were physically compatible” and I said “let’s just say fine next time”

— gianmarco (@GianmarcoSoresi) 2:41 AM ∙ May 24, 2022

This is by far the funniest law book out there

— Nicole A. Rizza (JD Era) 🏳️⚧️ (@NicoleARizza) 4:39 PM ∙ May 25, 2022

Talk to me

Send me tips, comments, questions, and Yubo reports: casey@platformer.news.