Biden seeks to rein in AI

An executive order gives AI companies the guardrails they asked for. Will the US go further?

I.

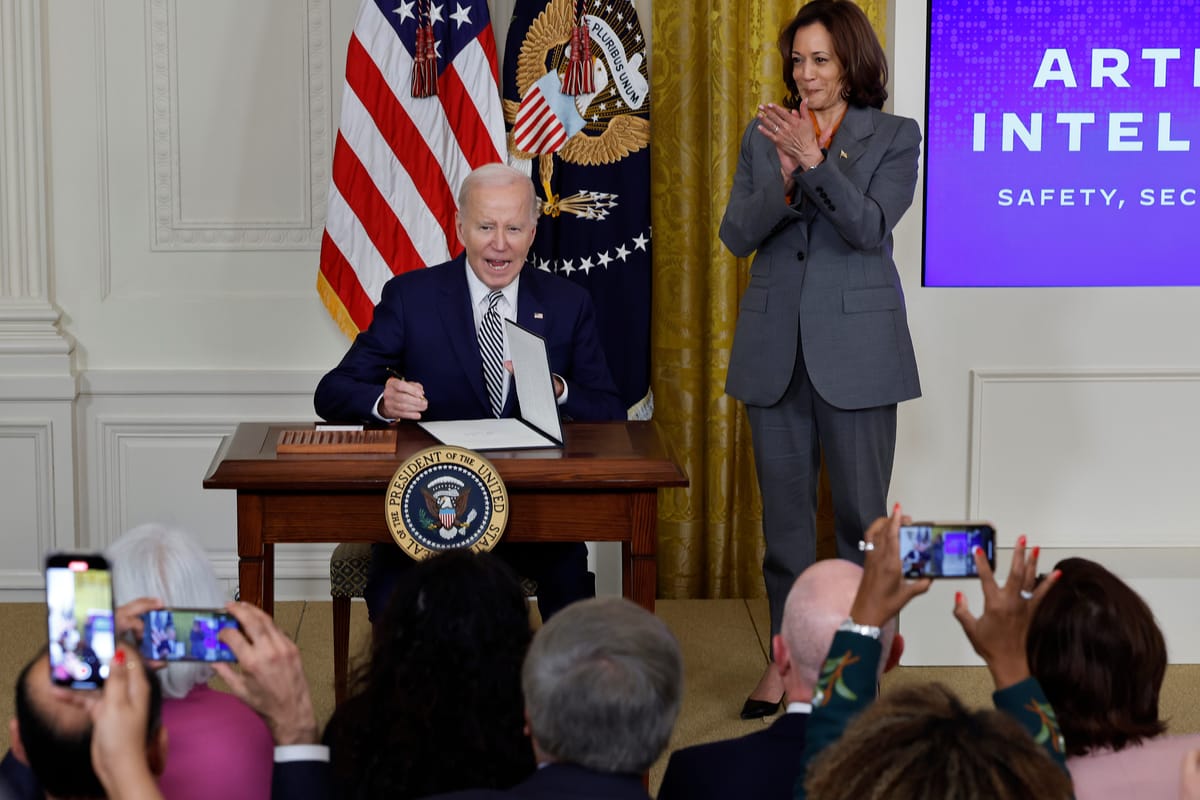

WASHINGTON, DC — Moments before signing a sweeping executive order on artificial intelligence on Monday at the White House, President Biden deviated from his prepared remarks to talk about his own experience of being deepfaked.

Reflecting on the times he had seen synthetic media simulating his voice and image, Biden admitted he had sometimes been fooled by the hoax. “When the hell did I say that?” he said, to laughter.

The text of the remarks stated that even a three-second recording of a person’s voice could fool their family. Biden amended it to say that such a recording could fool the victim, too.

“I swear to God — take a look at it,” he said, as the crowd laughed again. “It’s mind-blowing.”

The crowd’s laughter reflected both the seeming improbability of 80-year-old Biden browsing synthetic media of himself, and the nervous anticipation that more and more of us will find ourselves the subject of fakes over time.

The AI moment had fully arrived in Washington. And, as I listened in the audience in the East Room, Biden offered a plan to address it.

Over the course of more than 100 pages, Biden’s executive order on AI lays the groundwork for how the federal government will attempt to regulate the field as more powerful and potentially dangerous models arrive. It makes an effort to address present-day harms like algorithmic discrimination while also planning for worst-case future AI tools, such as those that would aid in the creation of novel bioweapons.

As an executive order, it doesn’t carry quite the force that a comprehensive package of new legislation on the subject would have. The next president can simply reverse it, if they like. At the same time, Biden has invoked the Defense Production Act, meaning that so long as it is in effect, the executive order carries the force of law — and companies that shirk their new responsibilities could find themselves in legal jeopardy.

Biden’s announcement came ahead of a global AI summit in the United Kingdom on Wednesday and Thursday. The timing reflects a desire on the part of US officials to be seen as leading AI safety initiatives at the same time US tech companies lead the world in AI development.

The executive order “is the most significant action any government anywhere in the world has ever taken on AI safety, security, and trust,” Biden said. For once, the president's remarks implied, the United States would compete with its allies in regulation as well as in innovation.

II.

So what does the order do?

The first and arguably most important thing it does is to impose new safety requirements on models based on the computing power used to train them. Models trained using more than 10 to the 26th power floating-point operations, or flops, are subject to the rules. That’s beyond the computing power required to train the current frontier models, including GPT-4, but should pertain to the next-generation models from OpenAI, Google, Anthropic, and others.

Companies whose models fall under this rubric must perform safety tests on them and share the results with the federal government before releasing the models to the public.

This mandate, which builds on voluntary commitments that 15 big tech companies signed on to over the past few months, creates baseline transparency and safety requirements that should limit the ability of a rogue (and extremely rich) company to build an extremely powerful model in secret. And it sets a norm that as models grow more powerful over time, the companies that build them will have to be in dialogue with the government around their potential for abuse.
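To give a rough sense of what the compute threshold means, here is a minimal, hypothetical sketch in Python. It assumes the widely used rule of thumb that training a dense transformer takes about 6 floating-point operations per parameter per training token; the order itself does not prescribe this (or any) estimation method, and the model sizes below are made up for illustration rather than describing any real system.

```python
# Hypothetical sketch: estimate training compute with the common
# "6 * parameters * tokens" rule of thumb (an assumption, not a method
# prescribed by the executive order) and compare it to the 10^26 threshold.

THRESHOLD_FLOPS = 1e26  # the order covers models trained with more than this


def estimated_training_flops(num_parameters: float, num_tokens: float) -> float:
    """Approximate total training FLOPs: ~6 FLOPs per parameter per token."""
    return 6 * num_parameters * num_tokens


def exceeds_threshold(num_parameters: float, num_tokens: float) -> bool:
    """Return True if the estimated compute is above the 10^26 threshold."""
    return estimated_training_flops(num_parameters, num_tokens) > THRESHOLD_FLOPS


if __name__ == "__main__":
    # Both configurations are invented illustrations, not real models.
    examples = {
        "hypothetical 70B-parameter model, 2T tokens": (7e10, 2e12),
        "hypothetical 1T-parameter model, 20T tokens": (1e12, 2e13),
    }
    for name, (params, tokens) in examples.items():
        flops = estimated_training_flops(params, tokens)
        print(f"{name}: ~{flops:.1e} FLOPs, covered: {exceeds_threshold(params, tokens)}")
```

Under this rough heuristic, a model has to be very large and trained on an enormous amount of data before it crosses the line, which is why the rules are aimed at next-generation systems rather than today’s.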

Second, the order identifies some obvious potential harms and instructs the federal government to get ahead of them. Responding to the deepfake issue, for example, the order puts the Commerce Department in charge of developing standards for digital watermarks and other means to establish content authenticity. It also forces makers of advanced models to screen them for their ability to aid in the development of bioweapons, and orders agencies to perform a risk assessment for dangers posed by AI related to chemical, biological, radiological, and nuclear weapons.

And third, the order acknowledges various ways AI could be put to good use, including building AI tools to defend against cyberattacks, developing cheaper life-saving drugs, and exploring the potential for personalized AI tutors in education.

Ben Buchanan, special adviser for AI at the White House, told me that AI’s potential for good is reflected in technologies like AlphaFold, the system developed by Google DeepMind to predict a protein's three-dimensional structure from its amino acid sequence. The technology is now used to discover new drugs. More advances are on the near horizon, he said.

“I look at something like micro-climate forecasting for weather prediction, reducing the waste in the electricity grid, or accelerating renewable energy development,” said Buchanan, who is on leave from Georgetown University, where he teaches about cybersecurity and AI. “There’s a lot of potential here, and we want to unlock that as much as we can.”

III.

For all that the executive order touches, there are still some subjects that it avoids.

For one, it does almost nothing to require new transparency in AI development. “There is a glaring absence of transparency requirements in the EO — whether pre-training data, fine-tuning data, labor involved in annotation, model evaluation, usage, or downstream impacts,” note Arvind Narayanan, Sayash Kapoor, and Rishi Bommasani at AI Snake Oil, in their excellent review of the order.

For another, it sidesteps questions around open-source AI development, which some critics worry could lead to more misuse. Whether it is preferable to build open-source models, as Meta and Stability AI are doing, or to build closed ones, as OpenAI and Google are doing, has become one of the most contentious issues in AI.

Andrew Ng, who founded Google’s deep learning research division in 2011 and now teaches at Stanford, raised eyebrows this week when he accused closed companies like OpenAI (and presumably Google) of pushing for gatekeeping regulation in the cynical hope of eliminating their open-source competitors.

“There are definitely large tech companies that would rather not have to try to compete with open source [AI], so they’re creating fear of AI leading to human extinction,” Ng told the Australian Financial Review. “It’s been a weapon for lobbyists to argue for legislation that would be very damaging to the open-source community.”

Ng’s sentiments were echoed over the weekend in X posts from Yann LeCun, Meta’s pugnacious chief scientist and an outspoken open-source advocate. LeCun accused OpenAI, Anthropic and DeepMind of “attempting to perform a regulatory capture of the AI industry.”

“If your fear-mongering campaigns succeed, they will *inevitably* result in what you and I would identify as a catastrophe: a small number of companies will control AI,” LeCun wrote, addressing a critic on X. “The vast majority of our academic colleagues are massively in favor of open AI R&D. Very few believe in the doomsday scenarios you have promoted.”

DeepMind chief Demis Hassabis responded on Tuesday by politely disagreeing with LeCun and saying that at least some regulation was needed today. “I don’t think we want to be doing this on the eve of some of these dangerous things happening,” Hassabis told CNBC. “I think we want to get ahead of that.”

At the White House on Monday, I met with Arati Prabhakar, director of the Office of Science and Technology Policy. I asked her if the federal government had taken a view yet on the open-versus-closed debate.

“We’re definitely watching to see what happens,” Prabhakar told me. “What is clear to all of us is how powerful open-source is — what an engine of innovation and business it has been. And at the same time, if a bedrock issue here is that these technologies have to be safe and responsible, we know that that is hard for any AI model because they're just so richly complex to begin with. And we know that the ability of this technology to spread through open source is phenomenal.”

Prabhakar said that views of open-source AI can vary depending on your job.

“If I were still in venture capital, I'd say the technology is democratizing,” said Prabhakar, whose long history in tech and government includes five years running the Defense Advanced Research Projects Agency (DARPA). “If I were still at the Defense Department, I would say it's proliferating. I mean, this is just the story of AI over and over — the light side and dark side. … And the open source issue is one that we definitely continue to work on and hear from people in the community about, and sort of figure out the path ahead.”

IV.

I left Washington this morning with two main conclusions.

One is that AI is being regulated at the right time. The government has learned its lesson from the social media era, when its near-total inattention to hyper-scaling global platforms made it vulnerable to being blindsided by the inevitable harms once they arrived.

AI industry players deserve at least some credit for this. Unlike some of their predecessors, executives like OpenAI’s Sam Altman and Anthropic’s Dario Amodei pushed early and loudly for regulation. They wanted to avoid the position of social media executives sitting before Congress, asking for forgiveness rather than permission.

And to Biden’s credit, the administration took them seriously, and developed enough expertise at the federal level that it could write a wide-ranging but nuanced executive order that should mitigate at least some harms while still leaving room for exploration and entrepreneurship. In a country that still hasn’t managed to regulate social platforms after eight years of debate, that counts for something.

That leads me to my second conclusion: for better and for worse, the AI industry is being regulated largely on its own terms.

The executive order creates more paperwork for AI developers. But it doesn’t really create any hurdles. For a set of regulations, it comes across as intending neither to accelerate nor to decelerate the industry. It allows the industry to keep building more or less as it already is, while still calling it (in Biden’s words) “the most significant action any government anywhere in the world has ever taken on AI safety, security, and trust.”

If that is true, I suspect it may not be for long. The European Union’s AI Act, which among other things calls for ongoing monitoring of large language models, could take effect next year. When it does, AI developers may find that the United States is once again their most permissive market.

Still, what White House officials told me this week feels true: AI’s potential to do good appears roughly equal to its potential to do harm. AI companies now have the opportunity to demonstrate that the trust the administration has granted them is well placed. And if they don’t, Biden’s order may ensure that they feel the consequences.

Sponsored

Give your startup an advantage with Mercury Raise.

Mercury lays the groundwork to make your startup ambitions real with banking* and credit cards designed for your journey. But we don’t stop there. Mercury goes beyond banking to give startups the resources, network, and knowledge needed to succeed.

Mercury Raise is a comprehensive founder success platform built to remove roadblocks that often slow startups down.

Eager to fundraise? Get personalized intros to active investors. Craving the company and knowledge of fellow founders? Join a community to exchange advice and support. Struggling to take your company to the next stage? Tune in to unfiltered discussions with industry experts for tactical insights.

With Mercury Raise, you have one platform to fundraise, network, and get answers, so you never have to go it alone.

*Mercury is a financial technology company, not a bank. Banking services provided by Choice Financial Group and Evolve Bank & Trust®; Members FDIC.

Platformer has been a Mercury customer since 2020. This sponsorship gets us 5% closer to our goal of hiring a reporter in 2024.

Governing

- At the Google antitrust trial: testimony revealed that the company paid $26.3 billion in 2021 to be the default search engine across multiple browsers, phones, and platforms, with most of the money going to Apple. (David Pierce / The Verge)

- CEO Sundar Pichai testified that he once “asked Google executives to alert him any time an employee working on the search engine went to work for Apple.” But no one followed up on his request. (Miles Kruppa and Jan Wolfe / The Wall Street Journal)

- In late 2018, Pichai pitched the idea of pre-installing a Google Search app on every iOS device to Tim Cook. And eventually Cook was like, what if you paid us more than $20 billion a year instead?? (David Pierce / The Verge)

- The Justice Department argued that Google was once far ahead of competitors in generative AI, but chose not to release the technology publicly for fear of harming its monopoly in search. (Davey Alba and Leah Nylen / Bloomberg)

- The US Supreme Court is considering whether and when government officials can block followers on social media, a question at the intersection of the internet and the First Amendment. (Ariane de Vogue / CNN)

- The lawsuit against Meta alleging harms to children’s mental health has reignited the battle over online age verification, with privacy groups protesting that such measures will bring harms of their own. (Tonya Riley / Bloomberg Law)

- A school district in Florida barred students from using cell phones during school days, and while some teachers say it makes school more engaging, students say it’s unfair and isolating. (Natasha Singer / The New York Times)

- Some artists are alleging that there’s no functional way to opt out of Meta’s generative AI training, arguing that the data deletion request form doesn’t do anything. Meta says the form is meant merely for requests; it’s not an opt-out tool. (Kate Knibbs / WIRED)

- A US district judge dismissed copyright infringement claims against Midjourney and DeviantArt, saying it’s unclear whether any copyrighted images were used to train the AI image generators. (Winston Cho / Hollywood Reporter)

- Elon Musk said that posts on X with Community Notes attached will be ineligible for revenue share, in an effort to curb misinformation. On the other hand, he’s barely sharing any revenue even with the people he says he’s sharing revenue with. (Rebecca Bellan / TechCrunch)

- Canada banned the use of WeChat and the Russian antivirus program Kaspersky on government-issued devices, citing privacy and security risks. (Reuters)

- The Guardian is accusing Microsoft of damaging its reputation after Microsoft’s news aggregation service published an AI-generated poll speculating about the cause of a woman’s death next to a Guardian article about the incident. (Dan Milmo / The Guardian)

- UK prime minister Rishi Sunak is planning to launch an OpenAI-powered chatbot, “Gov.uk Chat”, to help people pay taxes and access their pensions. (James Titcomb and Matthew Field / The Telegraph)

- Over 100 individuals and groups, including trade unions and workers’ organizations, are accusing the UK government and Rishi Sunak of sidelining them in favor of Big Tech in the development of new AI regulations to be discussed this week. (Cristina Criddle / The Financial Times)

- Some TikTok creators are streaming “live matches,” where one creator plays the role of the Israelis and another creator plays the role of the Palestinians, while urging their followers to donate monetary gifts. TikTok takes 50 percent of the earnings. (David Gilbert / Wired)

- Researchers have found relatively little synthetic media related to the Israel-Hamas conflict, but the possibility of its existence is leading people to dismiss authentic images and videos. The liar’s dividend strikes again. (Tiffany Hsu and Stuart A. Thompson / The New York Times)

- Instagram users are reporting that comments containing the Palestinian flag emoji have been hidden and flagged as “potentially offensive”. (Sam Biddle / The Intercept)

- Hackers are increasingly using AI to engineer phishing attacks, experts warn, making them harder to prevent and detect. (Eric Geller / The Messenger)

- Users in China are surprised to find that leading digital maps from Baidu and Alibaba do not identify Israel by name, underscoring China’s ambiguous diplomacy in the region. (James T. Areddy and Liyan Qi / The Wall Street Journal)

- China’s largest social media platforms, including WeChat and Douyin, are asking creators with over half a million followers to display their real names and identities. (Zheping Huang and Evelyn Yu / Bloomberg)

- Apple warned a number of Indian opposition leaders of state-sponsored attacks on their iPhones, just months ahead of general elections. It didn’t attribute the attacks to any particular group. But I can guess! (Manish Singh / TechCrunch)

Industry

- X is reportedly valued at $19 billion, less than half what it was worth a year ago, according to new employee equity compensation plans. (Kylie Robison / Fortune)

- X introduced its $16 per month Premium+ subscription, boasting an even larger boost to user replies and no ads. It also introduced a $3 per month Basic plan that gives a small boost to replies but no verification or ad reduction. (Emma Roth / The Verge)

- Elon Musk and Linda Yaccarino reportedly told X employees that YouTube and LinkedIn are future competitors for the platform. They also have ambitions to create a newswire service named XWire. (Edward Ludlow / Bloomberg)

- While many users are fleeing X, sports fans appear to be remaining loyal to the platform, accounting for 42 percent of X’s audience. (Jesus Jiménez / The New York Times)

- Instagram head Adam Mosseri’s goal for Threads? For it to become the “de facto platform for public conversations online”. (Jay Peters / The Verge)

- Mosseri also said that a Threads API is in the works, but that he is concerned publisher content might overshadow creator content. I accidentally broke this story when Mosseri kindly replied to me after I replied to another user looking for an equivalent to Tweetdeck. (Ivan Mehta / TechCrunch)

- Meta is offering European users ad-free subscriptions on Facebook and Instagram after courts cracked down on ad targeting. Europe has forced the company’s hand here, but I’m interested to see how big the demand is. (Jillian Deutsch / Bloomberg)

- It’s also temporarily pausing ads on the platforms for European teens under 18. (Sam Schechner / The Wall Street Journal)

- Apple’s “Scary Fast” showcase announced a more powerful line of M3 chips, now in a new iMac and MacBook Pros, alongside a cheaper MacBook Pro with the base M3 chip. Also, the Touch Bar has officially been retired. (Emma Roth / The Verge)

- AI deepfakes of Chinese influencers are flooding China’s e-commerce streaming platforms, allowing brands to livestream 24/7 to generate sales with lower costs. (Zeyi Yang / MIT Tech Review)

- OpenAI is testing an updated version of ChatGPT that will integrate access to all GPT-4 tools, including DALL-E 3 and browsing, into a single input field. It also appears to be adding native PDF uploading. (Kristi Hines / Search Engine Journal)

- Google is reportedly investing up to $2 billion in AI startup Anthropic, with $500 million paid up front and an additional $1.5 billion over time. (Berber Jin and Miles Kruppa / The Wall Street Journal)

- Research by the News Media Alliance shows that AI chatbots rely on news articles more than generic online content. The alliance argues this results in copyright infringement. (Katie Robertson / The New York Times)

- Discord head of trust and safety John Redgrave talked about how AI is making content moderation both harder and easier when it comes to child sexual abuse material. (Reed Albergotti / Semafor)

- Pinterest posted better-than-expected results for Q3, with a revenue increase of 11 percent. (Jonathan Vanian / CNBC)

- “Granfluencers” are gaining popularity on social media, with seniors finding success by sharing their expertise on specific subjects like cooking, auto repair, or fashion. (Lori Ioannou / The Wall Street Journal)

- Another group gaining stardom on social media: blue-collar workers sharing what they do in a day, like farming, fishing for lobsters, and trucking. (Steven Kurutz / The New York Times)

- News is a growth area for creators on TikTok and Instagram, a study found, as more people turn to social media over traditional news outlets. (Taylor Lorenz / Washington Post)

Those good posts

For more good posts every day, follow Casey’s Instagram stories.

Talk to us

Send us tips, comments, questions, and AI regulations: casey@platformer.news and zoe@platformer.news.