Spotify's misinformation mistake

Don't promote a policy you're not willing to enforce

Programming note: Platformer is off Monday for Martin Luther King Jr. Day.

Does Spotify have a policy about misinformation related to COVID-19, or doesn’t it?

Last year, amid a spike in songs and podcasts promoting falsehoods, the company said such a policy indeed is in effect. In a statement issued in January 2021, Spotify said:

“Spotify prohibits content on the platform which promotes dangerous false, deceptive, or misleading content about COVID-19 that may cause offline harm and/or pose a direct threat to public health. When content that violates this standard is identified it is removed from the platform.”

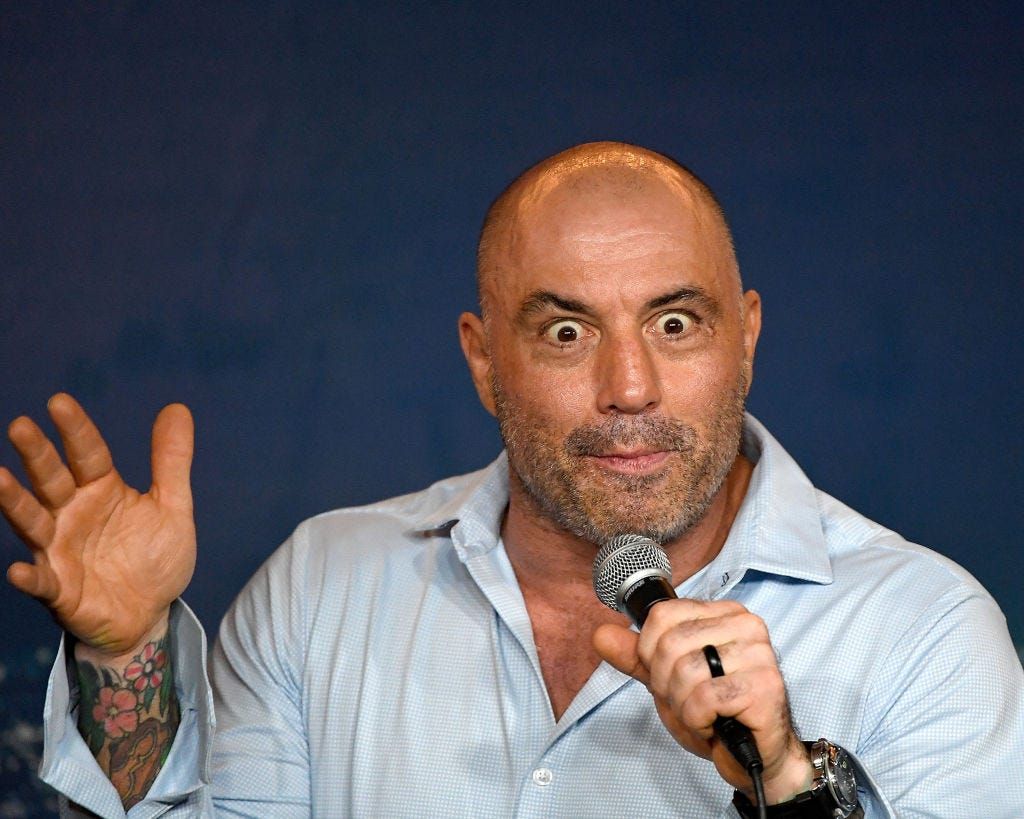

That statement was in the news again a few months later, after Joe Rogan — the most popular podcaster in the world, whose show is exclusive to Spotify — suggested that healthy young people do not need to be vaccinated against the disease. Sources told The Verge’s Ashley Carman that Spotify reviewed the episode before it was posted, and decided to leave it up because “he doesn’t come off as outwardly anti-vaccine” and “doesn’t make a call to action.”

Left unsaid was the fact that censoring Rogan would trigger a firestorm, and potentially put the company’s $100 million investment in the host in jeopardy.

In the months since, Spotify has continued to toe that line. None of Rogan’s podcasts have been removed, despite frequent controversies over his choice of interview subjects and their views on COVID in particular. Anna Merlan described some of these discussions, including those with notorious Sandy Hook parent tormentor Alex Jones, in a piece for Vice in November:

During the pandemic, Rogan has played a major role in spreading COVID misinformation of all kinds to his enormous audience, including telling young viewers they didn’t need to get vaccinated before backpedaling in the face of enormous criticism. He helped spread the false gospel of ivermectin by hosting Dr. Pierre Kory, who co-founded a fringe medical group, the Frontline COVID-19 Critical Care Alliance (FLCCC), which exists mostly to suggest the drug is a treatment or preventative for COVID. (The best available evidence shows that it’s not. A paper written by members of the FLCCC making claims about ivermectin’s effectiveness was removed in March by the journal that had provisionally accepted it. Kory also promotes another COVID treatment protocol he calls MATH+; a paper he said showed its efficacy was recently retracted.)

Still, Spotify took no action, likely for the same reasons it took no action in April: Rogan hadn’t explicitly told his followers to avoid the vaccine. Instead, he had become that figure so familiar to anyone enforcing platform policy today: the high-profile edgelord who is forever just asking questions.

Then, a few weeks ago, Rogan rolled out his latest provocation: an interview with Robert Malone, a doctor who had previously been banned from Twitter for saying the vaccines are dangerous. In that interview, reports EJ Dickson at Rolling Stone:

[Malone] espoused various conspiratorial and baseless beliefs, from the idea that “mass formation psychosis” is responsible for people believing in the efficacy of vaccines; to the claim popular among anti-vaxxers that hospitals are financially incentivized to falsely diagnose COVID-19 deaths. The episode featuring Malone went viral, and was shared widely in right-wing media circles as well as on Facebook, where the link on Spotify has been shared nearly 25,000 times, according to CrowdTangle data.

If I were running an audio platform, and had a stated policy against false, deceptive, and misleading content about COVID-19 that may threaten public health, views like Malone’s would be pretty much what I had in mind when I wrote it. Spotify has 381 million users to whom it regularly promotes its podcasts; Rogan’s, which sits at the top of its download charts, reportedly gets 11 million downloads per episode. If even 1 percent of those people heard the podcast, decided that belief in the efficacy of vaccines was a product of “mass psychosis,” and declined to get jabbed or boosted, it could quite conceivably threaten public health.

That’s why this week, 270 doctors, physicians, and science educators published an open letter to Spotify calling on it to take action. They write in part:

The average age of [Joe Rogan Experience] listeners is 24 years old and according to data from Washington State, unvaccinated 12-34 year olds are 12 times more likely to be hospitalized with COVID than those who are fully vaccinated. Dr. Malone’s interview has reached many tens of millions of listeners vulnerable to predatory medical misinformation. Mass-misinformation events of this scale have extraordinarily dangerous ramifications.

Now, at this point it’s worth mentioning that this entire pandemic has been fraught with misinformation, some of it coming from the medical establishment. That can make it extraordinarily difficult for tech platforms, which typically have no expertise in the subject matter, to develop effective policies around “misinformation.” What’s considered true and false can change quickly, and in some cases it can leave platforms likely wishing they had never intervened at all.

Facebook, for example, once banned claims that COVID had been made in a lab. Then last May it reversed itself, and it’s not totally clear why it ever felt the need to take action in the first place. (No significant policy question turns on how COVID originated.)

For their part, signatories of the Spotify letter aren’t calling on the company to remove Rogan — or even the podcast episode in question. Instead, they asked for Spotify “to immediately establish a clear and public policy to moderate misinformation on its platform.”

If we are to believe what the company said last January, it already has such a policy in place. But the true policy is the one that is enforced, and Spotify’s reluctance to challenge its star host over the past year, however predictable, casts doubt on that claim. (The company didn’t respond to requests for comment on Thursday.)

Speaking with Hot Pod’s Nick Quah in November 2020, when questions about Rogan and Spotify first bubbled up, I argued that the company should develop a comprehensive set of community standards and decide how aggressively it wanted to police hosts’ speech:

That should be a process that involves a lot of stakeholders and a lot of thought. They should be bringing in people who have worked on this issue for other platforms. They need to be gaming out scenarios. Of course, they could land in a bunch of places in that process — and, by the way, one of those places could be, “We don’t care if our podcast hosts bring on people who have been deplatformed elsewhere, we’re going to enable more speech than any other platform, and here’s why.”

Spotify could still run that process, and it could still land in that place. What it can’t do is have it both ways — issue statements to the press about removing COVID misinformation, while turning a blind eye to its most prominent examples. If Spotify is determined to own the podcast market, it’s going to have to own its responsibilities there, too.

Platformer Jobs

Today’s featured jobs on the Platformer Jobs board include:

- AI and Media Integrity Program Lead, Partnership on AI.

- Outreach & Engagement Manager, Digital Trust & Safety Partnership.

- Project Lead, Global Coalition For Digital Safety, World Economic Forum.

Some posts here are paid. For more great jobs in tech policy and trust and safety, or to create a listing, visit here. Nonprofits and academic institutions can post for free using the code NONPROFIT.

The Ratio

Today in news that could change public perception of the big tech companies.

⬆️ Trending up: Twitter said its share of Black workers jumped to 9.4 percent in 2021 from 6.9 percent a year earlier, aided by the decision to let employees work from anywhere. It appears to be one of the most substantial diversity improvements since companies began reporting these statistics. (Jeff Green and Kurt Wagner / Bloomberg)

Governing

⭐ The January 6 committee subpoenaed Google, Facebook, Twitter, and Reddit, demanding records related to the attack. The committee found that the companies did not respond adequately to previous, voluntary requests. Here’s Kevin Breuninger at CNBC:

The committee is once again demanding that Google parent company Alphabet, Twitter, Reddit and Meta — formerly known as Facebook — hand over a slew of records relating to the domestic terrorism, the spread of misinformation and efforts to influence or overturn the 2020 election.

“Two key questions for the Select Committee are how the spread of misinformation and violent extremism contributed to the violent attack on our democracy, and what steps — if any — social media companies took to prevent their platforms from being breeding grounds for radicalizing people to violence,” Chairman Bennie Thompson, D-Miss., said in the press release.

Lawmakers introduced the “TLDR Act” to force tech companies to post shorter summaries of their terms of service. The act “would require sites to display a ‘summary statement’ that not only makes their terms ‘easy to understand,’ but also discloses whether they have been hit by recent data breaches and what sensitive personal data they collect.” (Cristiano Lima / Washington Post)

Antitrust enforcers are drowning in mergers. “In 2021, companies reported 4,130 mergers to the two agencies — more than double the number from the previous year.” (Rebecca Kern / Politico)

An investigation in El Salvador found that at least 35 journalists and members of civil society had their phones successfully infected with NSO Group’s Pegasus spyware. The hacking appears to be tied to the country’s increasingly authoritarian president. (John Scott-Railton, Bill Marczak, Paolo Nigro Herrero, Bahr Abdul Razzak, Noura Al-Jizawi, Salvatore Solimano, and Ron Deibert / Citizen Lab)

Gettr banned a right-wing pundit for using the N-word in his profile. Gettr “defends free speech, but there is no room for racial slurs on our platform,” the company said. (Sinéad Baker / Insider)

Austria’s data protection commissioner ruled that Google Analytics violates GDPR. If the ruling holds up, it could complicate life for US companies that transfer data in and out of Europe. (Natasha Lomas / TechCrunch)

China plans to build an NFT ecosystem on its state-controlled blockchain. The move comes as “digital collectibles” are gaining popularity in the country, even though public cryptocurrencies are banned. (Coco Feng / South China Morning Post)

German police used a contact-tracing app meant to help stop the spread of COVID-19 to track down potential witnesses to a crime. “The incident is also likely to provide fodder for vaccine doubters, some of whom have taken on a broader anti-government stance, and those who believe coronavirus-related conspiracy theories.” (Rachel Pannett / Washington Post)

Nigeria lifted its ban on Twitter. The cost: “Twitter will open an office in the capital Abuja, register as a broadcaster, pay taxes, and commit to being sensitive to national security and cohesion.” (Abubakar Idris and Peter Guest / Rest of World)

A look at “crypto colonizers” in Puerto Rico, where tax breaks have attracted a flood of newcomers — along with fears of rising inequality. While crypto utopia has yet to arrive on the island, it has captivated many younger residents. (Nitasha Tiku / Washington Post)

Industry

⭐ Instagram was the most-downloaded app in the fourth quarter of 2021. It’s performing particularly well in India, where TikTok is banned; another takeaway is that Reels is working. (Sarah Perez / TechCrunch)

The maker of battle royale shooter game PlayerUnknown’s Battlegrounds sued Apple and Google in an effort to force them to stop selling a game that copied some of its features. Even if you think app stores need to crack down on clones, this lawsuit would seemingly put the entire industry at risk — games copy features from each other all the time. (Blake Brittain / Reuters)

Related: A new analysis of the App Store finds a variety of knockoff apps for controlling Samsung devices, some of which come with pricey subscriptions. (Jason Cross / MacWorld)

The founder of Second Life returned to the company as a strategic adviser. Naturally, Philip Rosedale plans to “shepherd its expansion as the metaverse gains wider traction.” (Meghan Bobrowsky / Wall Street Journal)

A look at telecom industry backlash to Apple’s iCloud Private Relay feature, which enables VPN-like private browsing for subscribers. Telecoms are worried they will eventually lose understanding of what customers are doing on their networks. (Matt Burgess / Wired)

Coinbase acquired the derivatives exchange FairX. It’s a sign that Coinbase will move forward with plans to sell derivatives. (Michael McSweeney / The Block)

Google’s vice president of trust and safety returned to government service. Kristie Canegallo joined DHS as chief of staff; she previously worked in the George W. Bush and Obama administrations. (Garrett Ross and Eli Okun / Politico)

A profile of creators who have made millions making digital stickers for popular Asian messaging app Line. “There are now 4 million designers on the platform, from hobbyists and part-timers to professional studios.” (Andrew Deck / Rest of World)

“Baby Shark” became the first YouTube video to surpass 10 billion views. Also it’s stuck in your head now. Sorry! (Jay Peters / The Verge)

Those good tweets

This month I’m doing something called January, where I try to make it through every day of January

— Ron Amaya (@juan_amayah) 9:15 PM ∙ Jan 11, 2022

Oh, you didn’t have any taste before Covid either, honey

— Sandy Danto (@SandyDanto) 4:39 PM ∙ Jan 11, 2022

[tinder first date]

her: oh. I saw your profile picture holding the fish. I just assumed…

fish: yeah this happens a lot

— Andrew Nadeau (@TheAndrewNadeau) 4:59 PM ∙ Jan 12, 2022

And this is why we were asked to leave.

— Glenn Farrington (@HaHaScribe) 8:11 AM ∙ Jan 11, 2022

Talk to me

Send me tips, comments, questions, and Joe Rogan quotes: casey@platformer.news.