Meta seeks to hide harms from teens

But to change the conversation, the company will have to do more than tweak its settings

Today, for a change of pace, let’s talk about something other than the fate of Substack and all those who dwell upon it.

Instead, let’s look at the mounting pressure on social networks to make their apps safer for young people, and the degree to which platforms and regulators continue to talk past each other.

Let’s start with the news. Today, Meta said it would take additional steps to prevent users under the age of 18 from seeing content involving self-harm, graphic violence and eating disorders, among other harms.

Here’s Julie Jargon at the Wall Street Journal:

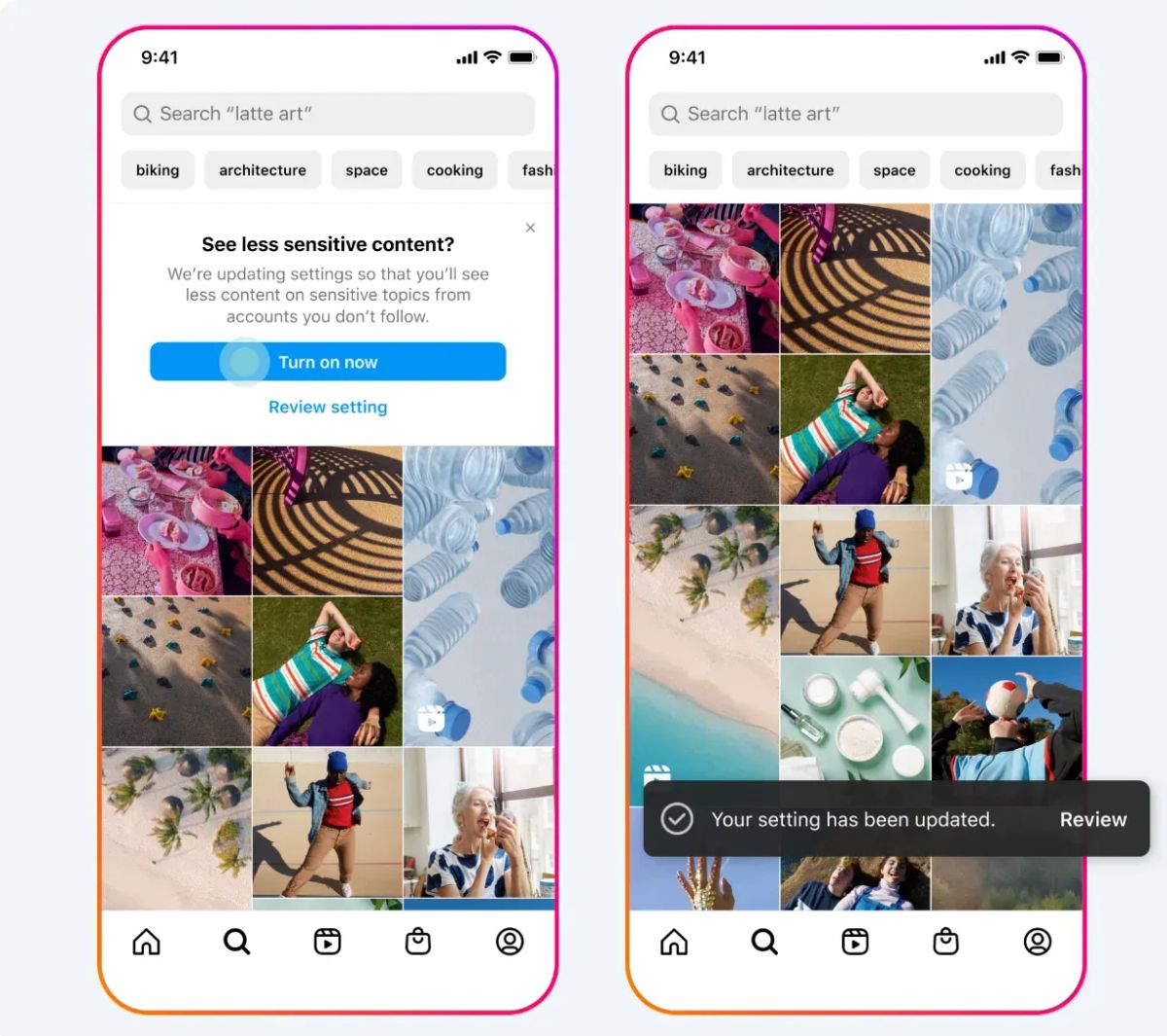

Teen accounts — that is, accounts of under-18 users, based on the birth date entered during sign-up — will automatically be placed into the most restrictive content settings. Teens under 16 won’t be shown sexually explicit content. On Instagram, this is called Sensitive Content Control, while on Facebook, it is known as Reduce. Previously, teens could choose less stringent settings. Teen users can’t opt out of these new settings.

The new restricted status of teen accounts means teens won’t be able to see or search for harmful content, even if it is shared by a friend or someone they follow. For example, if a teen’s friend had been posting about dieting, those posts will no longer be visible to the teen. However, teens might still see content related to a friend’s recovery from an eating disorder.

The changes announced today fall broadly into a category of platform tweaks that could be labeled “They weren’t doing that already?” But the fact that Meta made these changes, which the Journal calls “the biggest change the tech giant has made to ensure younger users have a more age-appropriate experience on its social-media sites,” underscores the degree to which child safety has become the most important dimension along which social networks are being judged in 2024.

How did that become the case?

The most consequential shift in attempts to regulate social media over the past year has been in the theory of harm. In the period after the 2016 US presidential election, regulators focused heavily on issues related to speech. Democrats focused on the way platforms can amplify lawful but harmful material, including hate speech and misinformation. Republicans took the opposite position, protesting against platforms’ right to moderate content and calling for an end to account removals and other restrictions in most cases.

The result was an enervating stalemate in efforts to regulate US tech companies; Congress has not passed a single meaningful new tech regulation since Donald Trump was elected president. But after the better part of a decade waiting for lawmakers to act, states took matters into their own hands. And in some cases, the focus remained on speech, as in the laws passed by Florida and Texas aimed at making content moderation illegal.

But over the past year, states have adopted a new approach to reducing the growth and power of social networks: making it increasingly difficult for children to use them. Utah and Arkansas have both passed laws seeking to ban minors from using some social networks without their parents’ permission. Montana sought to ban TikTok entirely.

Laws like these have struggled so far in courts. A lawsuit is seeking to block the Utah legislation from being implemented, and federal judges blocked both the Arkansas law and the Montana law from taking effect.

At the same time, pressure for lawmakers to act in these cases has only grown. Last year, the US surgeon general issued an advisory warning that social networks can be harmful to teens. Some critics dismissed the report as political posturing, but on the whole I thought it offered a fairly balanced portrait of both the potential benefits and harms that can come with extended use of social networks.

Meanwhile, a series of investigations over the past year has shone a light on the ways that social networks remain unsafe for young people. The Wall Street Journal documented how Instagram is used to connect buyers and sellers of child sexual abuse material, and the Stanford Internet Observatory found that similar issues related to CSAM affect X and Telegram, as well as Mastodon and other decentralized networks.

More recently, the Journal reported that Meta’s systems are unwittingly connecting pedophiles with one another. And on Snapchat, a scam targeting teenage boys has resulted in more than a dozen children taking their lives after being blackmailed over their nudes.

Frustration with platforms culminated in the lawsuit filed against Meta in October by 41 states and the District of Columbia, alleging that the company misled young people about the potential harms they face on the company’s apps. An unredacted version of the suit that came out later documented the extent to which Meta was aware of huge numbers of under-13 users.

All of that feels like necessary context for the changes Meta announced today. It also helps explain why, despite the changes it has made over the past year, Meta remains on the defensive.

Last July, after he signed the Utah legislation, Gov. Spencer Cox described his rationale in an interview with the New York Times’ Jane Coaston. “If you look at the increased rates of depression, anxiety, self-harm since about 2012, across the board but especially with young women, we have just seen exponential increases in those mental health concerns,” Cox told her. “Again, the research is telling us over and over and over again that it is not just correlated, but it’s being caused, at least in part, by the social media platforms.”

That view is much disputed by the social networks, which argue that the data is more muddled and that any causal effects are small to nonexistent. But Cox’s belief is widely shared — and not just among Republican governors, but among millions of families who have complicated relationships with Facebook, Instagram, YouTube, TikTok, Snapchat, and other apps.

If you believe that at least some teens experience harm on social media, it seems unlikely that a parental permission slip will solve it. Neither, I’m afraid, will a few tweaks to where content about self-harm can be shared.

Not for the first time, lawmakers and social networks are talking past each other. To make real progress on teen mental health in 2024, both sides are going to have to find some common ground.

Governing

- TikTok quietly restricted a data analysis tool intended to support advertisers after researchers used it to study content related to the Israel-Hamas war, among other topics. (Sapna Maheshwari / The New York Times)

- X banned a number of high-profile accounts, including those of journalists, podcasters and writers who criticized the Israeli government. The bans were quickly overturned, with Elon Musk blaming the move on unruly spam algorithms. (Thomas Germain / Gizmodo)

- The European Commission is examining Microsoft and OpenAI’s relationship to consider whether it needs to be vetted under merger rules. (Samuel Stolton / Bloomberg)

- A profile of Anna Makanju, OpenAI’s vice president of global affairs, who helped position the company as a trusted partner for lawmakers. (Cat Zakrzewski / Washington Post)

- Large language models from Meta and OpenAI have spawned a wave of digital sex bots, some of which advertise themselves as children. (Ben Weiss and Alexandra Sternlicht / Fortune)

- Apple is pushing back against European rules that designate its five App Stores as one core platform service subject to regulation, saying that each store is specific to its devices. Uh huh. (Foo Yun Chee / Reuters)

- A Chinese state-backed institute says it has developed a technique to identify people who use Apple AirDrop to send messages. A potentially worrisome blow to protesters. (Bloomberg)

- Elections around the world are facing a new spread of disinformation amid rising extremism, rapid AI advancements, and a dangerous lack of content moderation. (Tiffany Hsu, Stuart A. Thompson and Steven Lee Myers / The New York Times)

- UK media regulator Ofcom has a new online safety team of almost 350 people, including new senior hires from Meta, Microsoft, and Google. (Cristina Criddle / Financial Times)

- The Screen Actors Guild signed a deal requiring consent and minimum payments when actors’ voices are digitally replicated. (Thomas Buckley / Bloomberg)

Industry

- ByteDance is in talks with multiple companies, including gaming giant Tencent, to sell off its gaming assets. (Josh Ye / Reuters)

- Volkswagen is planning on installing ChatGPT in cars, rolling them out first in Europe then in the US. ??? (Andrew J. Hawkins / The Verge)

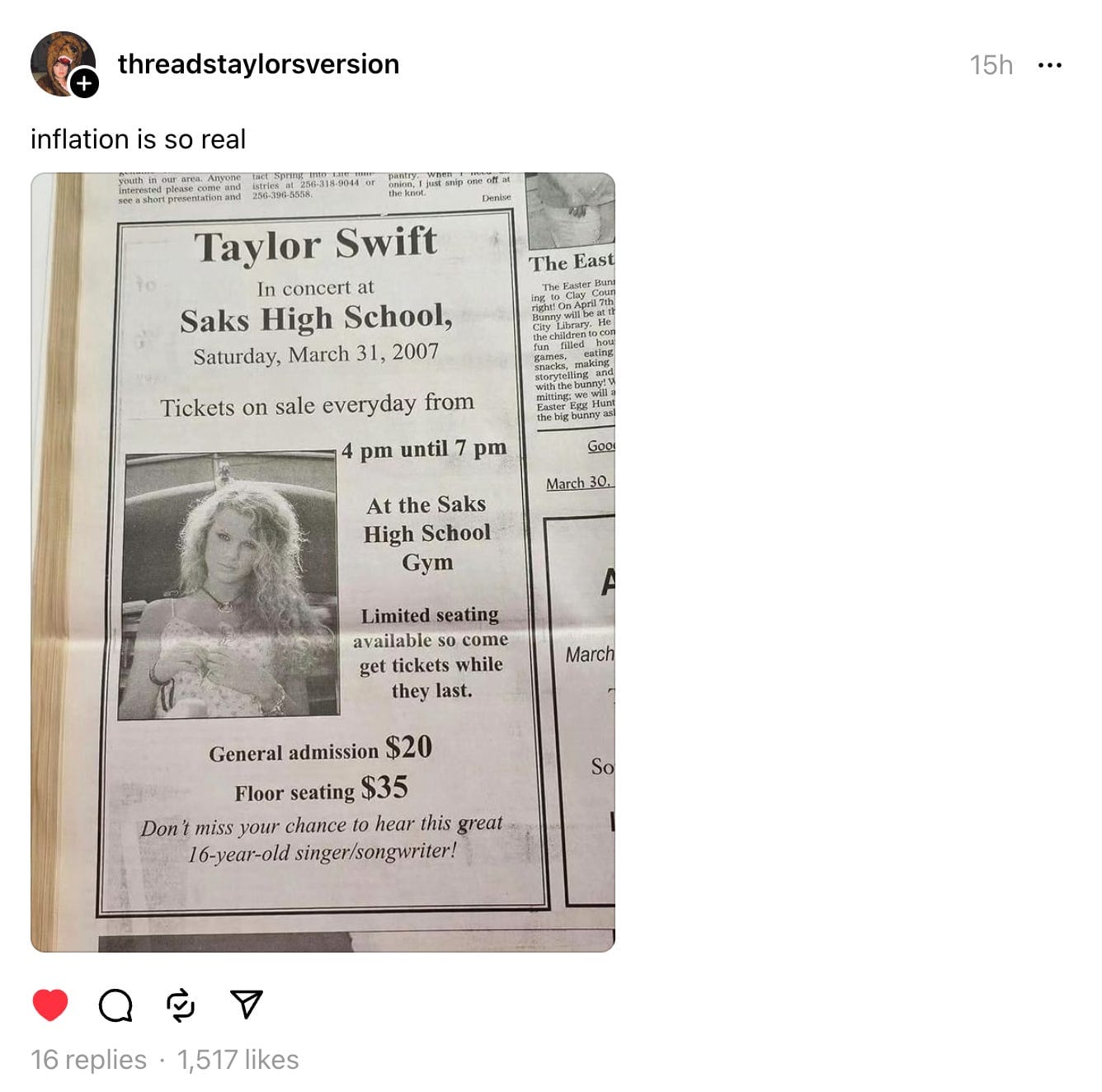

- A number of AI-generated ads are impersonating celebrities to endorse products in a scarily accurate way. So no, the Taylor Swift Le Creuset giveaway ad is not real. (Tiffany Hsu and Yiwen Lu / The New York Times)

- Startup founders are worried that they might get “Altmaned” — ousted by their own company — and are looking at ways to protect themselves. (Corrie Driebusch / The Wall Street Journal)

- Activist investor Elliott Investment Management invested about $1 billion in Match, the parent company of Tinder. Remember when Elliott did this with Jack Dorsey’s Twitter? I forget how that turned out. (Lauren Thomas / The Wall Street Journal)

- Dating apps like Tinder and Hinge are raising prices and introducing new subscription tiers, testing how much users will pay to find love. (Euan Healy / Financial Times)

- X’s former head of trust and safety, Ella Irwin, joined Stability AI as its senior vice president of integrity. (Ben Goggin / NBC News)

- X announced a number of content deals for streaming, including shows hosted by former CNN anchor Don Lemon and former Fox Sports host Jim Rome. (Alex Weprin / The Hollywood Reporter)

- Demos for the Apple Vision Pro will begin in Apple Stores at the start of February. (Zac Hall / 9to5Mac)

- Apple is asking developers not to refer to visionOS apps as “AR” or “VR”, under its new guidelines. Not sure even Apple can make “spatial computing” the standard parlance. (Filipe Espósito / 9to5Mac)

- Carta is closing its liquidity services business, following allegations that a salesperson from the department improperly used customer data. (Dan Primack and Kia Kokalitcheva / Axios)

Those good posts

For more good posts every day, follow Casey’s Instagram stories.

Talk to us

Send us tips, comments, questions, and teen mental health solutions: casey@platformer.news and zoe@platformer.news.