The states sue Meta over child safety

Everyone agrees there's a teen mental health crisis. Is this how you fix it?

I.

On Tuesday, 41 states and the District of Columbia sued Meta, alleging that the company hurts children by violating their privacy and misleading them about the potential harms they may experience from using its products. While a handful of states have pursued aggressive action against Meta and other platforms in an effort to prevent harm to minors, today's lawsuit represents the largest collective action against a social network on child safety grounds that we have seen to date.

Here are Cristiano Lima and Naomi Nix at the Washington Post:

While the scope of the legal claims varies, they paint a picture of a company that has hooked children on its platforms using harmful and manipulative tactics.

A 233-page federal complaint alleges that the company engaged in a “scheme to exploit young users for profit” by misleading them about safety features and the prevalence of harmful content, harvesting their data and violating federal laws on children’s privacy. State officials claim that the company knowingly deployed changes to keep children on the site to the detriment of their well-being, violating consumer protection laws.

We’ll get to the complaint itself. But first, some relevant context.

II.

The AGs’ lawsuit arrives nearly two years after the attorneys general began investigating Meta over child safety concerns. The investigation was prompted in large part by revelations from documents leaked by whistleblower Frances Haugen, particularly some internal research showing that a minority of teenage girls reported a negative effect on their mental health after using Instagram.

After Congress failed to pass a single piece of internet legislation during the post-2016 backlash to Big Tech, efforts shifted to the state level, where in 39 states a single party controls both legislative chambers and the governor’s office. That makes it much easier to pass legislation, for better and for worse. So does framing regulation as an effort to protect children.

The lawsuit filed Tuesday represents the culmination of efforts to re-focus the attention of lawmakers intent on regulating tech toward child safety. It is an issue in need of urgent attention at all levels of government. The Centers for Disease Control reported this year that 57 percent of US teen girls “felt persistently sad or hopeless” in 2021, the highest reported level in a decade.

The relationship between social networks and teenage mental health remains controversial. In May, US Surgeon General Vivek Murthy issued an advisory opinion arguing that social media can put some teens at risk of serious harm. (The advisory noted that social media can have a positive impact on teens as well.) And in August, I wrote here about conflicting research on teens and social media, observing both that the effects on teenage mental health in these studies are typically small and that studies are often designed in ways that obscure the larger impact of social media.

(I think regularly about the observation made by Prof. Sonia Livingstone of the London School of Economics to the BBC: “This reminds me of a conference I went to that asked, ‘what difference did half a century of television make?’ How can there be one answer?”)

Over time, I have become more persuaded that social networks can be harmful to young people: in particular, certain groups of young people (those with existing mental health issues, victims of bullying) and in particular circumstances (those who are using social networks for more than three hours per day).

But my views are also formed by my experiences growing up as a gay man online, where I used the then-nascent social web to find connections and community that I struggled to locate elsewhere. That history makes me skeptical of regulations that would make it harder for LGBT teens and other minority groups to find and speak to one another online, which is the express point of some (bipartisan!) legislation proposed this year.

III.

There are two key questions to ask about the lawsuit against Meta today. One of those questions — and the one that will be explored in court — is whether Meta broke the law. The other is whether you can address the harms alleged in the lawsuit in a way that makes young people meaningfully safer.

The complaint is 233 pages and somewhat heavily redacted. For that reason, I haven’t read the full thing, and we may learn more about the evidence uncovered by the AGs as the case moves forward.

On the first question, the plaintiffs put Meta’s alleged crimes into two buckets. The first and biggest bucket concerns the company’s “scheme to exploit young users for profit,” which basically amounts to Meta making apps and then building features designed to get people to use those apps a lot. It “designs and deploys features to capture young users’ attention and prolong their time on its Social Media Platforms”; its “Recommendation Algorithms encourage compulsive use, which Meta does not disclose”; its “use of disruptive audiovisual and haptic notifications interfere with young users’ education and sleep.”

There are certainly some worthwhile conversations to be had here. Section B-7 alleges that “Meta promotes Platform features such as visual filters known to promote eating disorders and body dysmorphia in youth.” Perhaps those ought to be disabled for younger users?

Section B-3 argues that “Meta’s Recommendation Algorithms encourage compulsive use, which Meta does not disclose.” Do we want to set China-style limits on the amount of time that young people can spend using certain kinds of apps each day?

Still, many of the complaints here boil down to Meta sending out a lot of push notifications. This can be annoying, and in fact I have shut off most of the notifications the company wants to send me. But it’s not clear that it’s illegal, even if some young people find themselves hooked on these notifications and experiencing harm as a result.

The second bucket of crimes seems potentially more persuasive since it alleges violations of an actual law: the Children’s Online Privacy Protection Act, or COPPA, which requires platforms to obtain parental consent before collecting personal information from users younger than 13.

The Supreme Court has consistently struck down laws that require age verification of users on free speech grounds. And so in practice platforms “verify” users’ ages by showing them a box that says something like, “hey, you’re 13 or older, right?” And then the 11-year-old clicks “yes,” and as far as platforms are concerned, they are then COPPA-compliant.

“Meta does not obtain — or even attempt to obtain — verifiable parental consent before collecting the personal information of children on Instagram and Facebook,” the lawsuit alleges. Instead, the AGs say that Meta collects personal information about children, including names, addresses, email addresses, location data and photographs. Because under-13s are technically banned from Facebook and Instagram, Meta argues it is in compliance. It doesn’t need parental consent because kids shouldn’t be on the platform at all. (It also does take steps to identify under-13 users and delete their accounts, though the AGs argue it isn’t trying hard enough.)

“We share the attorneys general’s commitment to providing teens with safe, positive experiences online, and have already introduced over 30 tools to support teens and their families,” Meta told me in an email. “We’re disappointed that instead of working productively with companies across the industry to create clear, age-appropriate standards for the many apps teens use, the attorneys general have chosen this path.”

Of course, kids are on Facebook and Instagram. In part because Meta has openly courted them, through products like Messenger Kids, and in part because using internet services without your parents’ permission has become a cherished hallmark of American childhood.

Due to redactions in the complaint, it’s difficult to assess how strong the AGs’ evidence against Meta is here. But every platform of even modest size has at least some under-13 users, and most of those platforms do very little to identify those users, because for legal liability reasons you might be better off not knowing they are there.

That’s why it’s relatively easy for me to imagine that among 40 state attorneys general, they will be able to muster some evidence that among the billions of people who have signed up for Meta’s services, there was some substantial number of children, some significant percentage of whom did not run it by mom and dad first, and that this will result in some sort of settlement large enough for AGs not to feel embarrassed about it when they announce it in a news conference, and small enough that Meta continues to operate more or less as it did before this lawsuit was ever filed.

And that leads us to the second and ultimately much more important question: can this lawsuit make young people safer?

I suppose there’s a world where a judge agrees with everything in the complaint and forces Meta to design its services from scratch, at least for young people. (Particularly if all the redacted sections of the complaint contain compelling evidence of harm to teens.) But it’s hard to see how a complaint about ranking algorithms, likes, and augmented reality filters can withstand a First Amendment challenge. And it’s even harder to see how the government can address a society-wide mental health crisis at the level of app design.

This isn’t to say Meta — or any of the other large platforms that teenagers use for hours every day, which go conspicuously unmentioned here — should be let off the hook. Platforms should conduct and release more research on how social media use can lead to mental health harms, and take steps to acknowledge and address it.

That won’t solve the teen mental health crisis, either. But I imagine it would be more effective than this lawsuit.

Musk says

Over the weekend, Elon Musk took aim at Wikipedia, a service he’s been bad-mouthing since at least 2019. Previously, Musk’s major gripe seemed to be that Tesla’s founding CEO, Martin Eberhard, had a Wikipedia page that lists him as a co-founder of that company. Now, Musk is asking why the company keeps asking people for donations. He also offered it $1 billion to change its name to Dickipedia.

Well, since he asked: the crowdsourced encyclopedia is run by a nonprofit called the Wikimedia Foundation, and its finances are public. The foundation’s 2022 annual report says that revenue was $154,686,521, and total expenses were $145,970,915. Its financials are audited by KPMG. Remember third-party auditors? X’s resigned this summer with $500,000 in outstanding invoices.

You can read the financial report for a full breakdown of these expenses, which include $2,704,842 for internet hosting. Jaime Crespo, a senior database administrator at the site, posted a thorough breakdown of this figure on Bluesky:

“First, the numbers: We serve around 25 billion page views and around 50 million page edits each month. That translates to around 100K-160K http requests/s, 24/7 grafana.wikimedia.org In terms of public data size, that is ~180 TB after compression for text and half a PB for media,” Crespo wrote, later adding that, “For user security, privacy and cost, rather than using a 3rd party cloud provider, we host the ~2000 servers ourselves! That's where the internet hosting cost goes to: replacing and upgrading servers, bandwidth & network, datacenter space.”
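For readers who want to check the arithmetic, here is a minimal sketch in Python. It uses only the figures quoted above. The 30-day month is an assumption made for the estimate, and the variable names are purely illustrative.

```python
# Figures quoted above from the Wikimedia Foundation's 2022 annual report.
REVENUE = 154_686_521
EXPENSES = 145_970_915
HOSTING = 2_704_842

# Traffic figure from Crespo's post; the 30-day month is an assumption.
PAGE_VIEWS_PER_MONTH = 25_000_000_000
SECONDS_PER_MONTH = 30 * 24 * 60 * 60

# Simple derived quantities.
surplus = REVENUE - EXPENSES
hosting_share = HOSTING / EXPENSES
page_views_per_second = PAGE_VIEWS_PER_MONTH / SECONDS_PER_MONTH

print(f"Revenue minus expenses: ${surplus:,}")
print(f"Hosting share of expenses: {hosting_share:.2%}")
print(f"Average page views per second: {page_views_per_second:,.0f}")
```

By this rough math, hosting comes to a bit under 2 percent of total expenses, and the site averages somewhere around 9,600 page views per second. That is well below the request rate Crespo cites, presumably because a single page view can involve many HTTP requests.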

Crespo politely declined to be interviewed. But a Wikipedia spokesperson had this comment:

“We are grateful that generous individuals from all over the world give every year to keep Wikipedia freely available and accessible. The majority of our funding comes from donations ($11 is the average) from people who read Wikipedia. We are not funded by advertising, we don’t charge a subscription fee, and we don’t sell user data. This model is core to our values and our projects, including Wikipedia. It preserves our independence by reducing the ability of any one organization or person to influence the content on Wikipedia.”

In summary, the Wikimedia Foundation asks for money to keep Wikipedia up and running. Imagine if Twitter had asked for $11 donations, rather than relying on a billionaire, as Musk proposed. It might still be alive!

— Zoe Schiffer

Governing

- FTC commissioner Alvaro Bedoya said the Commission is planning to hire child psychologists to help understand the impact of social media on children and inform enforcement decisions. (Suzanne Smalley / The Record)

- ICE reportedly used a system called Giant Oak Search Technology to analyze social media posts and determine whether they are “derogatory” to the United States. They’ve used this information as part of immigration enforcement. (Joseph Cox / 404 Media)

- Google disabled live traffic data for Maps and Waze in Israel and the Gaza Strip at the request of the Israeli military. The data could be used to track Israeli troop movements ahead of a potential ground invasion into Gaza. (Marissa Newman / Bloomberg)

- Some people are losing their jobs or getting job offers rescinded after publicly posting about the Israel-Hamas conflict and being critical of Israel. Twitter may be dead, but “never tweet” remains undefeated life advice. (Timothy Bella / Washington Post)

- A group of experts authored 23 international policy proposals for AI, saying that allowing AI systems to develop before understanding the risks threatens society at large. The group included some of the “godfathers” of AI, who argue that AI developers need to set aside a substantial part of their budget for safety research and enforcement. (Dan Milmo / The Guardian)

- UK ministers are set to reject demands from Big Tech companies to be able to appeal decisions made by the new Digital Markets Unit in the Competition and Markets Authority. (Kitty Donaldson and Thomas Seal / Bloomberg)

- PimEyes, a Clearview AI-style search engine for faces, is banning searches for minors over concerns that it could be used for malicious purposes. Ya think?? (Kashmir Hill / The New York Times)

- A new tool, Nightshade, is letting artists fight back against AI companies using their work to train AI without permission by “poisoning” the data. I am very skeptical that this will work! (Melissa Heikkilä / MIT Tech Review)

Industry

- Q3 earnings: YouTube earned $7.95 billion in ad revenue as Alphabet beat revenue expectations. (Todd Spangler / Variety)

- But Alphabet’s cloud unit revenue fell short of expectations. (Julia Love and Davey Alba / Bloomberg)

- Snap shares jumped about 20% after beating expectations for sales and net income, but declined after the company said some advertisers paused spending amid the war in the Middle East. (Jonathan Vanian / CNBC)

- Microsoft shares jumped about 6% after the company beat analyst expectations, with Azure cloud revenue growth accelerating. (Jordan Novet / CNBC)

- Most of X’s biggest advertising spenders have reportedly stopped advertising on the platform, contradicting what both Musk and CEO Linda Yaccarino have been saying. (Lara O’Reilly / Insider)

- Top creators are already emerging on Threads as users flock to the app in search of an alternative to X. These power users say they’re seeing more engagement despite having a lower follower count. (Salvador Rodriguez and Meghan Bobrowsky / The Wall Street Journal)

- In an effort to boost growth, Meta is promoting Threads posts on Facebook and Instagram, with no way to opt out. (Karissa Bell / Engadget)

- Automattic is acquiring universal messaging app Texts for $50 million, saying that messaging is the company’s third focus after publishing and commerce. (David Pierce / The Verge)

- YouTube Music is letting users customize their own playlist cover art with AI. (Emma Roth / The Verge)

- Twitch and YouTube are phasing out massive deals with gaming creators, with Twitch CEO Dan Clancy saying that bidding wars aren’t sustainable for its business. (Cecilia D'Anastasio / Bloomberg)

- A decade-old idea to organize the internet, POSSE: Publish (on your) Own Site, Syndicate Everywhere, could help content creators navigate an era where social networks are part of one ecosystem. (David Pierce / The Verge)

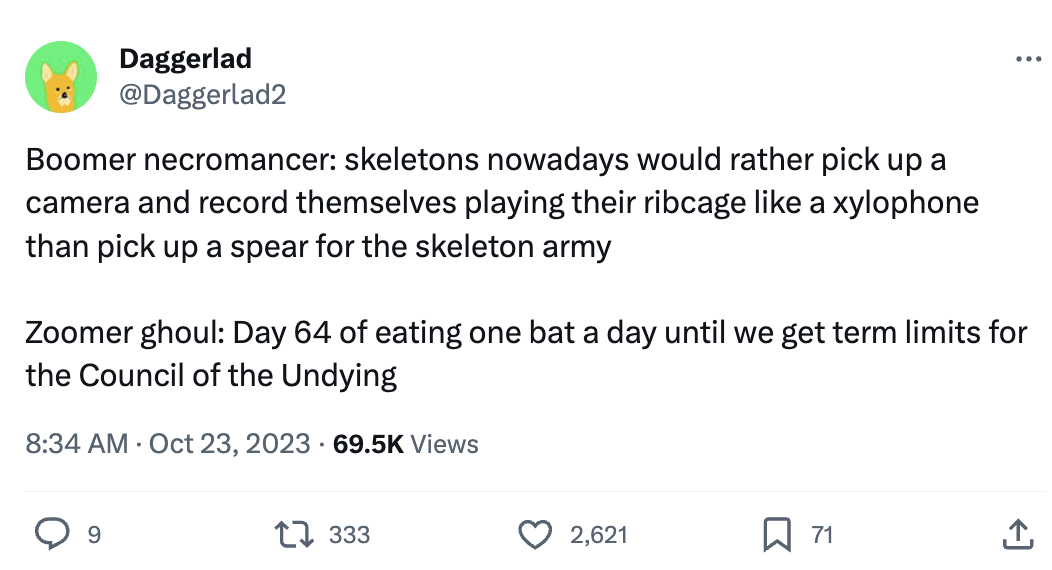

Those good posts

For more good posts every day, follow Casey’s Instagram stories.

Talk to us

Send us tips, comments, questions, and platform safety ideas: casey@platformer.news and zoe@platformer.news.