Sam Altman’s second thoughts

OpenAI’s CEO is asking the public to lower the temperature on AI. But who turned it up in the first place?

Platformer is off Tuesday. This is a column about AI. My fiancé works at Anthropic. See my full ethics disclosure here.

I.

Early Friday morning, according to a criminal complaint, a 20-year-old man threw a Molotov cocktail at Sam Altman’s house before driving to OpenAI headquarters and threatening to kill everyone inside.

The incident came a few days after someone fired 13 rounds at the home of an Indianapolis city councilor who had expressed support for a data center project; a note left at the scene read “no data centers.”

The escalating political violence over AI is terrifying, morally wrong, and completely ineffectual. The spread of AI systems, despite their growing unpopularity, will not be stopped by a few stray bullets. And among the many reasons to be alarmed by incidents like the ones we have seen over the past week is that the perpetrators seem too disturbed to understand that.

On Friday afternoon, in between the firebombing and a shooting near his property that the company said was unrelated, Altman reflected on the attacks, last week’s New Yorker investigation into his tenure as CEO, the state of AI, and growing public unease about the technology. After talking up AI’s potential to create great benefits, he also sought to validate the fears of those afraid of what the future might bring. “The fear and anxiety about AI is justified,” Altman wrote, underneath a photo of his husband and baby. “We are in the process of witnessing the largest change to society in a long time, and perhaps ever.”

Altman excels at reassuring you that he is on your side — this is one of the themes of the New Yorker profile — and he takes pains here to find common ground. He says he does not want to see AI power become too concentrated, and that it should be governed democratically. “It is important that the democratic process remains more powerful than companies,” he writes, in one of many lines in the piece that I wholeheartedly agree with.

Altman concludes by saying he sympathizes with anti-tech sentiment and “welcome[s] good-faith criticism and debate.” “While we have that debate,” he writes, “we should de-escalate the rhetoric and tactics and try to have fewer explosions in fewer homes, figuratively and literally.”

II.

Altman was writing in the aftermath of a traumatic event, and I’m tempted to leave it there. There’s nothing wrong with calling on cooler heads to prevail, or to hope that the past week’s violence was an aberration. I hope it was, too.

And yet I keep coming back to Altman’s phrase “de-escalate the rhetoric.” After all, it was Altman and his fellow AI CEOs who have spent the past decade speaking about AI in existential terms; some of that language can be found in Altman’s very blog post calling for calm.

He’s been writing that way for a long time.

“Development of superhuman machine intelligence is probably the greatest threat to the continued existence of humanity,” Altman wrote in a 2015 blog post. Speculating on the arrival of superintelligence, he added: “Evolution will continue forward, and if humans are no longer the most-fit species, we may go away.”

In 2023, Altman signed a statement from the nonprofit Center for AI Safety that “Mitigating the risk of extinction from AI should be a global priority alongside other societal-scale risks such as pandemics and nuclear war.” Google DeepMind CEO Demis Hassabis and Anthropic CEO Dario Amodei signed the statement as well.

During a podcast appearance last year, Altman likened the effort to build superhuman AI to the Manhattan Project. “There are these moments in the history of science where you have a group of scientists look at their creation and just say, you know, what have we done?” he said. “Maybe it's great, maybe it's bad, but what have we done?”

Altman’s fellow CEOs have expressed similar levels of alarm. “We are summoning the demon,” Elon Musk said in 2014. In January, in a long essay about risks posed by AI, Amodei wrote: “Humanity needs to wake up.”

And to some degree, humanity has woken up. One recent survey found that AI is rising in importance to voters faster than any other issue. That same survey found that a majority of voters believe AI is advancing too quickly, and that superintelligence would be mostly harmful to people. Meanwhile, a separate survey from Pew last year found that a majority of Americans believe that AI will lead to fewer jobs in the next 20 years.

Look no further than last week’s announcement of Anthropic’s Mythos model, and its unsettling ability to find new vulnerabilities in decades-old open source software, to understand that the CEOs were being honest when they warned that AI would introduce new risks into the world.

Given these facts, I struggle to understand what it would mean to “de-escalate the rhetoric” around AI. The CEOs are more convinced than ever that powerful intelligence will arrive within the next few years. The public increasingly believes them — and is appalled by the implications. It seems strange to suggest that, amidst accelerating breakthroughs in AI model performance, everyone needs to calm down.

If we really might be facing “the greatest threat to the continued existence of humanity,” as Altman once wrote, shouldn’t we expect people at some point to (non-violently) freak out?

III.

Altman’s proposed solution to the upheaval that OpenAI and its peers are planning is democratic governance. “Laws and norms are going to change, but we have to work within the democratic process, even though it will be messy and slower than we’d like,” he wrote in his weekend blog post.

This is a good instinct: one of the virtues of democracy is the way that it gives people a feeling of control over their own lives. People who believe that they can rein in AI companies through votes and laws and regulations will be much less likely to turn to violence.

But when legislatures have tried to regulate AI, OpenAI has fought them at every turn. The company lobbied against California’s SB 1047, which sought to set safety standards for frontier AI companies; the governor then vetoed it. OpenAI sent a sheriff to the home of a nonprofit advocate for California’s SB 53, which creates transparency requirements for AI companies, to deliver a subpoena as part of an inquiry into whether nonprofits were being directed or influenced by Musk. The company lobbied the European Union to weaken the AI Act. Most recently it backed an Illinois bill that would shield OpenAI from liability in cases where its models are used to cause serious harm, so long as the company did not “recklessly or intentionally” cause it and agreed to publish safety reports.

To some extent, yes, this is “working within the democratic process.” But the Illinois case shows what that looks like in practice: a company writing rules to limit its own accountability. And the more that OpenAI seeks to stifle efforts to regulate it, the more infuriated the general public will become.

Altman is right that words have power, and that AI anxiety should not boil over into open violence. To point out that he has consistently talked about the risks of AI systems is in no way to suggest that he deserved to be attacked over it.

But at the same time, the sudden call for calm does ring hollow coming from someone who spent a decade sounding the alarm, whose predictions look increasingly prescient — and who now uses the company’s resources to fight efforts to put his company under democratic oversight.

Ultimately, the public’s disdain for AI was not invented by journalists. It was co-created by the people building the systems, who have consistently told us that powerful AI is imminent and dangerous. That the public has now begun to take them at their word should not surprise them. Isn’t that what they have been asking for all along?

But they should listen to what the public is asking for, too. AI companies are asking us to trust them with a technology that everyone involved believes could end in disaster. The least they could do in return is let the rest of us have a vote.

Following

OpenAI's Anthropic diss

What happened: OpenAI’s Chief Revenue Officer, Denise Dresser, sent staff a pugnacious memo about the company’s strategic direction — and its chief competitor, Anthropic.

“The market is as competitive as I have ever seen it,” Dresser wrote, and “there is no question it can be noisy, volatile and distracting at times.”

Dresser promoted the company's pivot to the enterprise and celebrated that demand for enterprise services via new partner Amazon has “been frankly staggering.”

She also said OpenAI’s “analysis” shows Anthropic is inflating its reported annualized revenue by $8 billion. “They use accounting treatment that makes revenue look bigger than it is, including grossing up rev share with Amazon and Google,” Dresser said. She added that Anthropic made a “strategic misstep to not acquire enough compute.”

Dresser had an opinion on Anthropic’s message, as well as their finances: “Their story is built on fear, restriction, and the idea that a small group of elites should control AI,” she wrote.

“Our positive message will win over time,” Dresser told employees.

Why we’re following: We were delighted by this memo’s pettiness — and curious about whether Anthropic is, in fact, cooking its books. What’s Dresser talking about when she says Anthropic is “grossing up” its revenue share?

Well, Anthropic has some cloud partners to which it pays a cut of revenue. But it reports gross revenue. That means it includes the cut that it later pays to partners AWS, Microsoft, and Google in its financials. OpenAI, on the other hand, doesn’t report gross revenue via its cloud partnership with Microsoft — it reports net revenue, minus Microsoft's share.

Both practices are allowed under standard US accounting principles, depending on who is considered the “principal” in the transaction. Anthropic and OpenAI’s partnerships have different terms, so it’s defensible for them to report the revenue from these partnerships differently.

Where’s the $8 billion coming from? While Dresser didn’t share details of her analysis, an anonymous source gave the same $8 billion figure to Semafor last week — according to Semafor, that number was “based on how much OpenAI would add to its run rate if it counted gross revenue instead of net revenue.”

While it is true that OpenAI is using a more conservative accounting practice than Anthropic, we have no idea whether the delta between its gross and net revenue is the same as Anthropic’s.

So why was Dresser repeating that analysis? Well, in the lead-up to both companies potentially IPOing this year, OpenAI can’t be happy that Anthropic’s latest reported ARR of $30 billion is higher than the $25 billion that OpenAI last reported. And independent analysis shows Anthropic is closing in on OpenAI’s share in the enterprise market. The race is heating up!

What people are saying: On X, Brad Sams, VP at software company Stardock, wrote, “The gloves are coming off 🍿”

Ex-OpenAI policy researcher Miles Brundage wrote, “OpenAI leaders should stop caricaturing Anthropic.” He added, “It encourages tribalism at a time when safety cooperation is urgently needed.” He thinks they have the wrong idea: “Don’t know this person so am assuming she is genuine but Anthropic’s 'story' is not 'built on fear, restriction, and the idea that a small group of elites should control AI.'”

New York Times reporter Mike Isaac wrote, “the openai/anthropic feud is like the mad men elevator meme but both companies are ginsberg.” [If you’ve forgotten, Ginsberg is the first guy (below). Platformer agrees that, if nothing else, the two companies seem to be thinking about each other a lot.]

— Ella Markianos

Side Quests

Reddit was ordered to appear in front of a grand jury as part of President Trump’s effort to unmask anonymous critics of ICE.

Investors in Trump’s family crypto venture World Liberty Financial are accusing the project of secretly letting insiders freeze token holders’ funds.

Emil Michael, the Pentagon’s under secretary for research and engineering, made up to $24 million selling xAI stock after the Pentagon struck deals with the company.

The CIA has started using AI to help analyze intel.

A look at how the escalating AI arms race is reminiscent of the nuclear arms race.

Maine is set to become the first state to successfully impose a temporary ban on data center construction.

The new EU leader in charge of competition policy, Anthony Whelan, has signaled he will probe Big Tech companies despite pressure from Trump.

A judge blocked Arizona’s criminal case against Kalshi at the CFTC’s request.

Three senior OpenAI executives behind the Stargate initiative are leaving and joining Meta, sources said. An internal OpenAI tool downloaded a compromised update from the Axios software. OpenAI opened its first permanent London office.

Anthropic’s donations can’t be used to influence federal elections, the company said. Anthropic hired lobbying firm Ballard Partners, which has strong ties to Trump, following its Pentagon fight.

Vice President JD Vance and Treasury Secretary Scott Bessent reportedly questioned leading tech CEOs, including Dario Amodei, about the security of AI models before Anthropic released Mythos. Trump officials urged Wall Street banks to test the Mythos model internally, sources said. UK financial regulators are reportedly rushing to assess the risks posed by Mythos.

CoreWeave will provide Anthropic with data center capacity as part of a multiyear deal. Anthropic has reportedly asked Christian religious leaders for advice on how to guide Claude’s moral development. Claude for Word is now available in beta.

OpenAI accused Elon Musk of a “legal ambush” by suddenly changing direction in his lawsuit. Musk is experiencing a string of legal losses. A verified @elonmusk account posted on TikTok for the first time, and a verified @elonmusk handle surfaced on Instagram.

xAI sued Colorado to challenge its landmark AI bill aimed at protecting against AI “algorithmic discrimination.” (xAI said the bill would force it to “promote the state’s ideological views on various matters, racial justice in particular.”) X is reducing payments to clickbait and news aggregation accounts.

The majority of Europeans don’t trust American or Chinese tech companies with their data, a new survey showed.

Meta is reportedly building an AI version of Mark Zuckerberg that can talk to employees in his place.

The appearance of Polymarket bets in Google News was an error, Google said. Gmail end-to-end encryption is now available on all Android and iOS devices. YouTube is raising prices on its Premium subscription.

Microsoft is building new Copilot features inspired by OpenClaw.

Snap announced a partnership between its AR glasses subsidiary Specs and chipmaker Qualcomm.

Roblox is implementing an age verification process to ensure users are over the age of nine.

A look at how small AI startup Black Forest Labs is seeing success in AI image generation and its physical AI dreams.

The Wayback Machine is facing setbacks as major news organizations restrict access due to AI copyright concerns.

A look at whether prediction markets can improve weather forecasts.

Clients are increasingly sending lawyers numerous AI-generated questions and driving up fees. AI in the workplace is driving some productivity gains but not fundamental shifts in how work is done, according to a new Gallup poll. Stanford released its 2026 AI Index Report.

A look at how an intense relationship with an AI chatbot turned fatal.

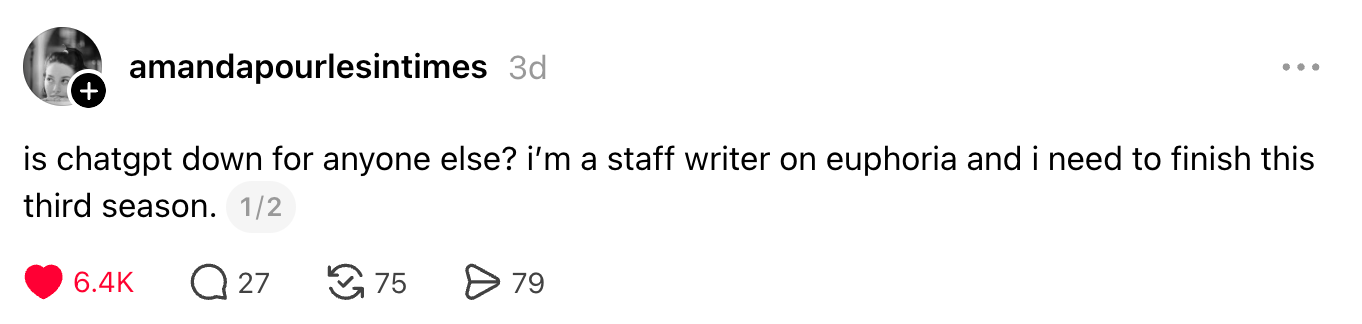

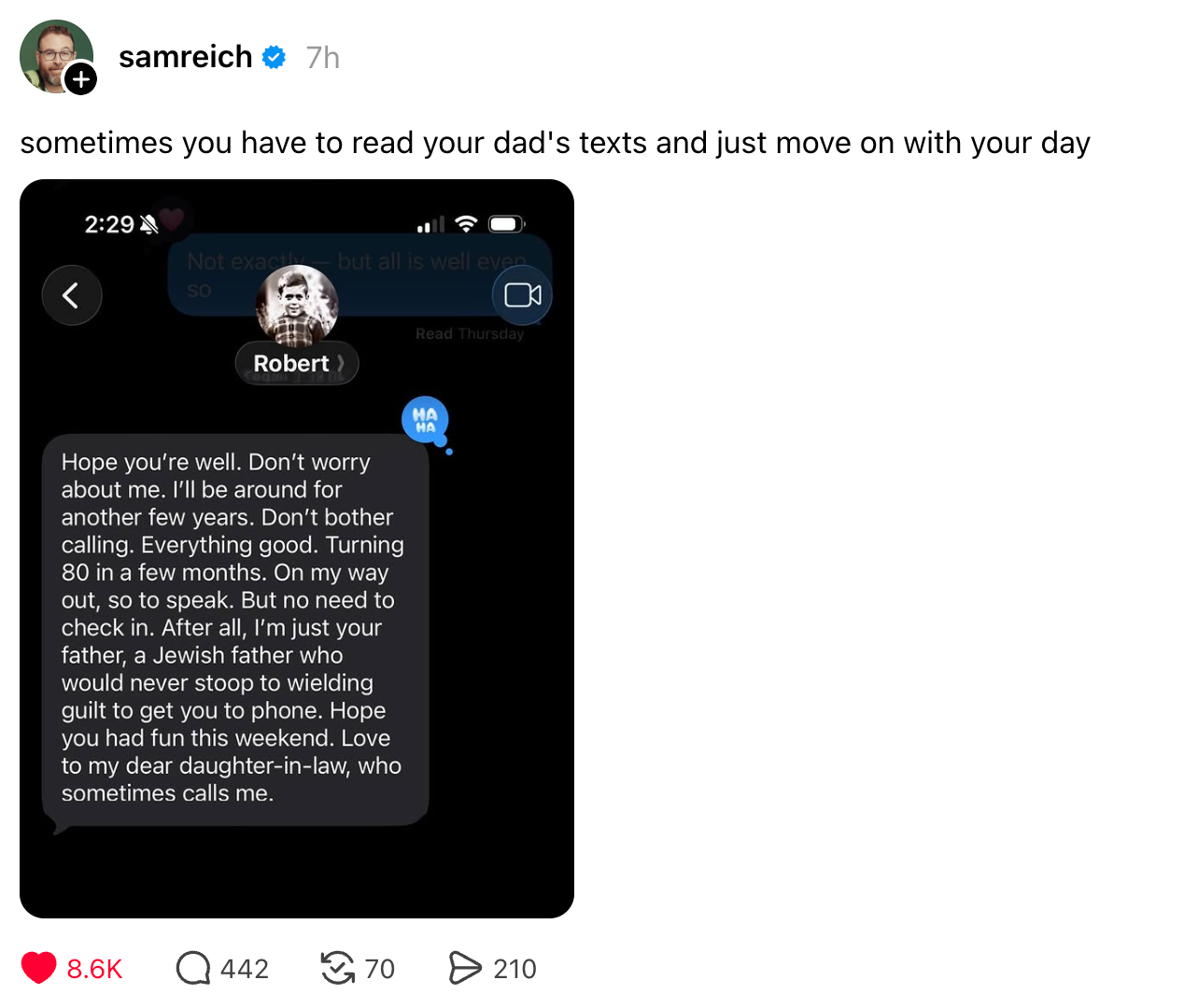

Those good posts

For more good posts every day, follow Casey’s Instagram stories.

Talk to us

Send us tips, comments, questions, and nonviolent protests: casey@platformer.news. Read our ethics policy here.