Can you have child safety and Section 230, too?

The verdicts in last week’s social media trials have alarmed open-internet advocates. But it’s possible to regulate platform design while also protecting speech

I.

In the aftermath of last week’s landmark rulings in lawsuits against Meta and YouTube (in Los Angeles) and Meta alone (in New Mexico), reactions fell into three basic camps.

One camp, represented by the plaintiffs, was euphoric. Finally, this side said, Big Tech would be held accountable for the harms caused to children by their platforms. “The jury’s verdict is a historic victory for every child and family who has paid the price for Meta’s choice to put profits over kids’ safety,” New Mexico Attorney General Raúl Torrez said in a statement after the verdict in that case. “Meta executives knew their products harmed children, disregarded warnings from their own employees, and lied to the public about what they knew. Today the jury joined families, educators, and child safety experts in saying enough is enough.”

A second camp, represented by the defendants, was defiant: both Meta and YouTube said they plan to appeal.

The third camp, and the one that has most interested me, consists of the writers, academics and thinkers who are trying to figure out what these verdicts mean for the wider internet. To this camp, it is self-evident that the tech platforms are villains of one sort or another. But in accepting the framing that their products have design defects for which they can be held liable, this camp argues, juries might have broken the basic compact that holds the internet together.

And they worry that, should these verdicts be upheld on appeal, platforms will begin restricting far more speech than they ever did previously. What once were open forums for robust debate may come to feel increasingly sanitized and even censored.

The basic compact at the center of these cases is, of course, Section 230 of the Communications Decency Act, which prevents companies from being held liable in most cases for what their users post. If I libel you on Facebook, you are free to sue me, but Meta gets a free pass. The case that spurred lawmakers to create Section 230 was a defamation case like this; they worried that if you could sue a platform out of existence just because one user had defamed another, the internet would be at risk of collapse.

For 30 years, Section 230 has shielded platforms from liability for nearly everything their users do — from defamation to drug sales to incitement. It has also, along with the First Amendment, enabled an enormous amount of political speech.

Last week’s verdicts are important because they appear to demonstrate a clear path for people who believe they have been harmed by platforms to get around the shield: they only have to prove that the harms that they experienced resulted from the way the platform is designed.

In these cases, that means features including recommendation algorithms, “beauty” filters, infinite scroll, autoplay video, “streaks,” and barrages of push notifications. More worryingly, in the New Mexico case, encrypted messaging was also implicated.

Jurors were convinced that these features caused problematic use of Instagram and YouTube for the plaintiff in the LA case, and that Meta misled users about the safety of its platform in the New Mexico case. If the verdicts are upheld, platforms will face pressure to dramatically scale these features back or eliminate them altogether, for children and possibly for adults. But some scholars say the changes may have to go further.

“Due to the legal pressure from the jury verdicts and the enacted and pending legislation, the social media industry faces existential legal liability and inevitably will need to reconfigure their core offerings if they can’t get broad-based relief on appeal,” Eric Goldman, a law professor at Santa Clara University and Section 230 scholar, wrote on his blog.

Like many in the third camp, Goldman worried that the ruling would have dire consequences for speech on the internet. “While any reconfiguration of social media offerings may help some victims, the changes will almost certainly harm many other communities that rely upon and derive important benefits from social media today,” he wrote. “Those other communities didn’t have any voice in the trial; and their voices are at risk of being silenced on social media as well.”

TechDirt’s Mike Masnick, another strong supporter of Section 230, was more blunt.

“If you care about free speech online, about small platforms, about privacy, about the ability for anyone other than a handful of tech giants to operate a website where users can post things — these two verdicts should scare the hell out of you,” he wrote. “Because the legal theories that were used to nail Meta this week don’t stay neatly confined to companies you don’t like. They will be weaponized against everyone.”

II.

There are parts of this argument that I agree with. Most alarming is the way that the New Mexico case argued Meta had endangered child safety by enabling end-to-end encryption in Instagram messaging. Earlier this month the company said it would discontinue encryption in Instagram, directing people instead to WhatsApp.

AG Torrez is the latest in a long line of law enforcement officials to realize his job would be easier if he could snoop on anyone’s messages. But we should not have to give up our basic right to privacy so that cops can make fewer phone calls. And encryption was at best tangential in the New Mexico case, which focused more on recommendation algorithms and how Meta failed to police the suspicious cross-platform movements of children across WhatsApp, Venmo, and Telegram.

I also agree that courts should apply strict scrutiny to any effort to limit what kinds of content platforms can recommend. So long as the content is legal, any efforts to regulate recommendations this way would seem like a clear violation of the First Amendment. This is why I remain skeptical of well-intentioned legislation like the Kids Online Safety Act: it may begin by telling platforms that they can’t recommend content about drugs and eating disorders, but could too easily be expanded to include bans on material about dissidents, LGBT people, and other disfavored groups.

Where I disagree with those in the third camp is where they say, in essence, that every design decision in an app like Instagram or YouTube is an editorial decision protected under the First Amendment. Goldman, in an interview with a reporter, put it this way:

“Social media’s offerings consist of third-party content, and the configurations were publishers’ editorial decisions about how to present it. So the line between first-party ‘design’ choices and publication decisions about third-party content seems illusory to me.”

I’m not so sure. To say that an infinitely scrolling feed encourages overuse is to make no comment on its contents; only that the intermittent rewards that such a feed creates turn it into a kind of slot machine, and that teens would be less likely to develop problematic use if it didn’t exist.

Masnick’s response to this is to say that an infinitely scrolling feed is only interesting if the content of the feed is interesting — and so the distinction between content and design, from a legal perspective, really is illusory. He writes:

“Here’s a thought experiment: imagine Instagram, but every single post is a video of paint drying. Same infinite scroll. Same autoplay. Same algorithmic recommendations. Same notification systems. Is anyone addicted? Is anyone harmed? Is anyone suing?

“Of course not. Because infinite scroll is not inherently harmful.”

On its face, it’s a compelling argument. But what if you ran the experiment the other way? What if you kept the content of a problematic Instagram feed identical — fill it up with posts about eating disorders, self-harm, rage bait, and drugs — but served it in an app where you had to tap a button every time you wanted to see the next post? What if this same version of the app sent no push notifications, and blocked video from autoplaying? If that was the default version of Instagram, do you think more people would be harmed, or fewer?

To me it seems obvious that fewer people would be harmed. Add a little friction to the experience, and it becomes much easier for the average person to look away. The content is still the same, but the delivery mechanism has changed, along with the dosage.

The truth is that both content and design are necessary for people to be harmed at scale. And in a country where the Constitution prevents you from regulating the content, you can only regulate the design.

The question, of course, is how.

III.

One reason that the distinction between content and design features looks illusory to some is that they exist on a spectrum. If you changed the Instagram recommendation algorithm to promote only educational material, you would be changing the content and the design of the app at the same time.

On the other hand, I reject the argument of the 230 diehards that every design decision is a content decision. The contents of YouTube go unchanged if the videos in your feed do not play by default. Snapchat messages will read the same even if Snap is prevented from incentivizing teens to go to extreme lengths to preserve their streaks. TikTok will still be TikTok if it is prevented from sending teenagers push notifications after midnight.

By identifying and restricting mechanical design features like these, we can preserve the core of Section 230 while also limiting at least some of the harm that platforms can cause. I’m under no illusion that we can solve the teen mental health crisis simply by disabling push notifications on Instagram. But given the complexity of the problem, the least we can do is to attempt some harm reduction: and that should begin with features like those above, which have close to no value outside boosting the valuations of the world’s richest companies.

I’m sympathetic to 230 defenders who fear that it is load-bearing infrastructure for the internet: that to touch it is to trigger a full-on collapse. When it became law 30 years ago, it fixed a genuine problem. And for a long time, it mostly worked as intended, letting small forums, blogs, and startups set up shop without getting sued into oblivion every time one user called another a bad name.

But a lot has changed since then. In 1996, the role of the platform was essentially custodial. Its job was to host and display the speech, and it didn’t do much beyond that.

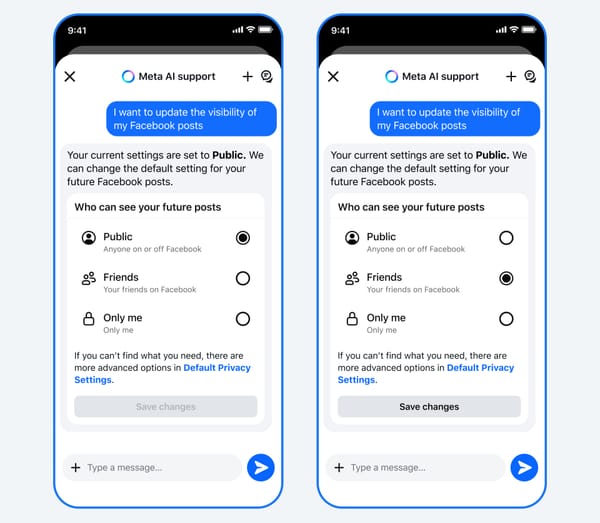

The internet of 2026 is a very different place. Platforms are no longer simple custodians. They build sophisticated systems that study your behavior to discover what will keep you on the app the longest, and then serve you more of it. They employ cognitive scientists who run endless experiments in an effort to defeat your instinct to do something else. And they do it not by making recognizably human editorial decisions about content, but by relentlessly optimizing their platforms’ designs.

That’s why, in the end, I’m glad juries recognized at least some of these design features as defective, and as something distinct from what Section 230 was originally created to protect. The distinction between content and design might be blurry at the margins, but the differences between the CompuServe of 1996 and the Instagram of today are not subtle. The law should be able to tell the difference between them, and to rein in the excesses of our biggest platforms, which have shown us time and again that they have no interest beyond their own survival.

Section 230 continues to do a lot of good, and should be handled with care. But to argue that it must be frozen in amber and preserved at all costs is to risk protecting an abstraction at the expense of actual people. Juries have begun to realize that, and one way or another, platforms are going to have to deal with the consequences.

Sponsored

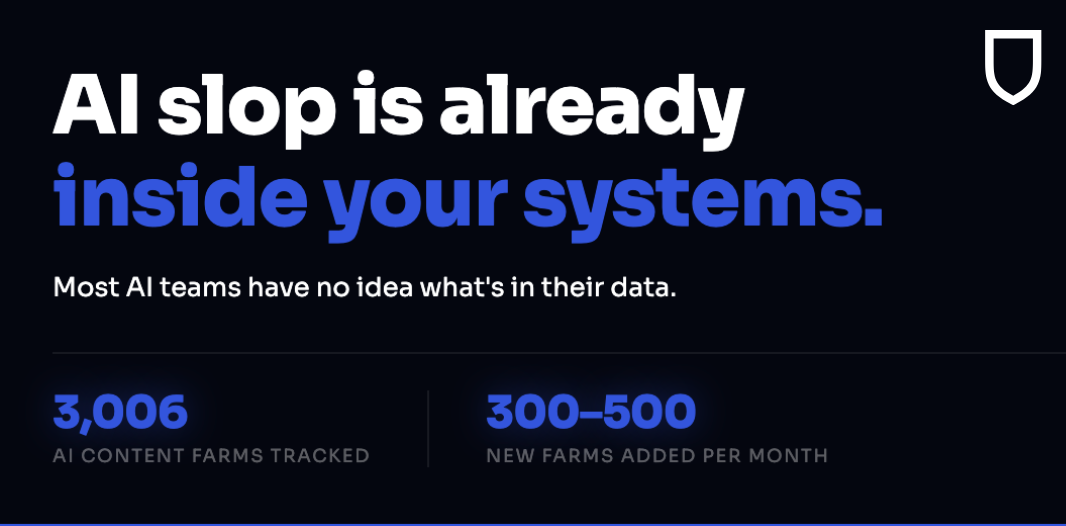

NewsGuard's AI Content Farm Detection Datastream delivers real-time, continuously updated intelligence on every known farm — plug it in as a data feed, exclusion list, or research dataset. Your current tools aren't catching them.

Trusted by leading AI providers including IBM to keep systems clean.

Following

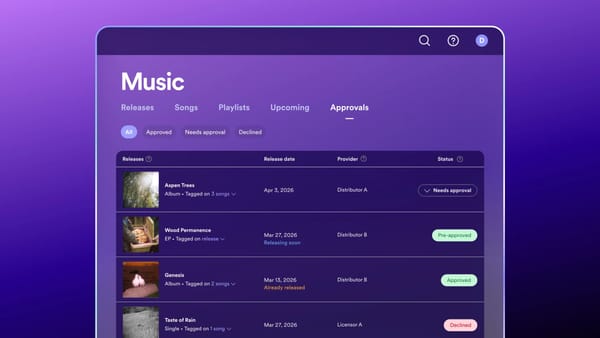

Meta stops pretending Instagram is PG-13

What happened:

Meta is walking back its rather bold decision to use “PG-13” to describe Instagram’s Teen Accounts after reaching an agreement with the Motion Picture Association. Under the new agreement, Meta will “substantially reduce” references to the rating and, when it does use the rating, add a disclaimer that “there are lots of differences between social media and movies.”

The initial decision to use the rating — announced in October — drew swift backlash from the MPA, which Meta had not deigned to consult before trying to hitch its beleaguered brand to something more trusted.

The MPA sent a cease-and-desist letter to Meta in the same month, calling the usage “literally false and highly misleading.”

Why we’re following: As the MPA pointed out in its press release, films rated by CARA, its voluntary film rating system, are “professionally produced and reviewed under a human-centered system, while user-generated posts on platforms like Instagram are not subject to the same rating.”

Meta’s approach was dubious from the start. A parent can generally expect a PG-13 movie to have few instances of swearing. From an implementation perspective, it’s hard to see how Instagram can promise the same sort of experience when social media and movies are fundamentally consumed differently.

Given all that, the surprising thing here is that the MPA is allowing Meta to associate Instagram with its rating system at all.

What people are saying: “We reject Meta’s implication to the public that Instagram’s tools for teens will be as safe and reliable as the MPA’s system,” Charles Rivkin, chairman and CEO of the MPA, wrote in an op-ed for the Washington Post in November.

Rivkin had valid concerns then, especially with the child safety issues that have been swirling around Meta’s platforms: “What happens when inappropriate content slips through Instagram’s purportedly PG-13-inspired filter? Would parents conclude that the problem is an Instagram-specific defect?”

—Lindsey Choo

Bluesky users reject Attie

What happened:

Bluesky users despise the company's new AI app, Attie.

The app, which lets users build a custom Bluesky feed via text prompts, drew dunks from a user base that felt Bluesky had promised to eschew the AI-everywhere ethos of the modern internet. In the days after former CEO Jay Graber announced Attie, more than 100,000 people blocked Attie’s account on Bluesky.

That means Attie is the second-most-blocked Bluesky user behind U.S. Vice President J.D. Vance. At press time, Attie even had more blocks than ICE.

Why we’re following: Attie is the first feature Jay Graber has shipped since her recent transition from CEO to chief innovation officer. Unfortunately, Bluesky’s base doesn't seem very excited about this particular innovation.

In fairness to the CIO, it seems like people are confused about what Attie does. It’s not Bluesky's version of Grok, or even a chatbot per se. Instead, it’s a feature built on top of Bluesky’s AT Protocol and hosted outside its app, which means blocking @attie.ai doesn’t actually do much.

(We at Platformer would be supporters of Bluesky Grok, though. The time is ripe for a woke MechaHitler.)

Platformer was actually pretty excited for custom algorithms, and attempted to test Attie. Unfortunately, our efforts to craft a feed of Bluesky posts about the day's AI news mostly returned tedious arguments.

Our subsequent effort to create a custom feed about vintage interior design also went south when, out of nowhere, the first post showed a beautiful midcentury interior — occupied by a person posing in extremely skimpy lingerie.

The good news is that we only saw one porn post in our testing. And Bluesky’s porn appeared consensual and human-generated, unlike in certain other apps we could mention.

What people are saying: The most-liked reply to Jay Graber’s original announcement was a simple, “no thank you.” Another user wrote, “Cool! How do we block it?”

User @almostordinary.etheric-veil.com captured Bluesky’s mood with this image:

Many users were struck by the irony of a November 2025 post from Bluesky’s official account that read, “every time a software tool adds an AI feature nobody asked for, a human logs off.”

Graber addressed Attie’s haters in a follow-up post: “We hear the concerns about AI. Our goal is to use this technology to give people greater control, not to generate content.” She added: “We’ll look into ways to take into account the preferences expressed by people who’ve blocked @attie.ai.”

—Ella Markianos

Side Quests

A look at Trump AI czar David Sacks’ new role outside the White House, which appears to work a lot like his old role, but without the pesky ethical constraints.

Iran said it plans to target 18 major US tech companies in the Middle East, including Apple, Microsoft, and Google. A look inside Iran’s sophisticated hacking campaign to shape online perception.

California Gov. Gavin Newsom’s new executive order will require safety guardrails for AI companies seeking contracts with the state.

SpaceX lost contact with another Starlink satellite (because it exploded).

A look at the inner workings of Claude Code based on a partial source code leak.

The UK plans to probe Microsoft’s business software unit over concerns it could stem the growth of rivals. The UK’s accountancy regulator told auditors they will remain responsible for audit failures even when they’re caused by AI.

The new Meta Ray-Bans are more customizable, especially for people who need prescription lenses, but are also more expensive.

All US Google users can now change their email addresses.

Amazon struck a deal to provide in-flight WiFi on Delta flights.

Alibaba’s new multimodal model is not open-source, unlike its predecessors.

Future quantum computers could more easily break the cryptography protecting Bitcoin and other digital assets, Google researchers warned.

A disturbing game called Five Nights at Epstein’s is going viral in schools.

A look at how coding agents are dramatically changing vulnerability research.

Those good posts

For more good posts every day, follow Casey’s Instagram stories.

Talk to us

Send us tips, comments, questions, and platform design defects: casey@platformer.news. Read our ethics policy here.