The platforms give up on 2020 lies

For a time, they fought the good fight — but not any more

I.

On tech platforms these days you can get away with just about anything, as long as you’re running for president.

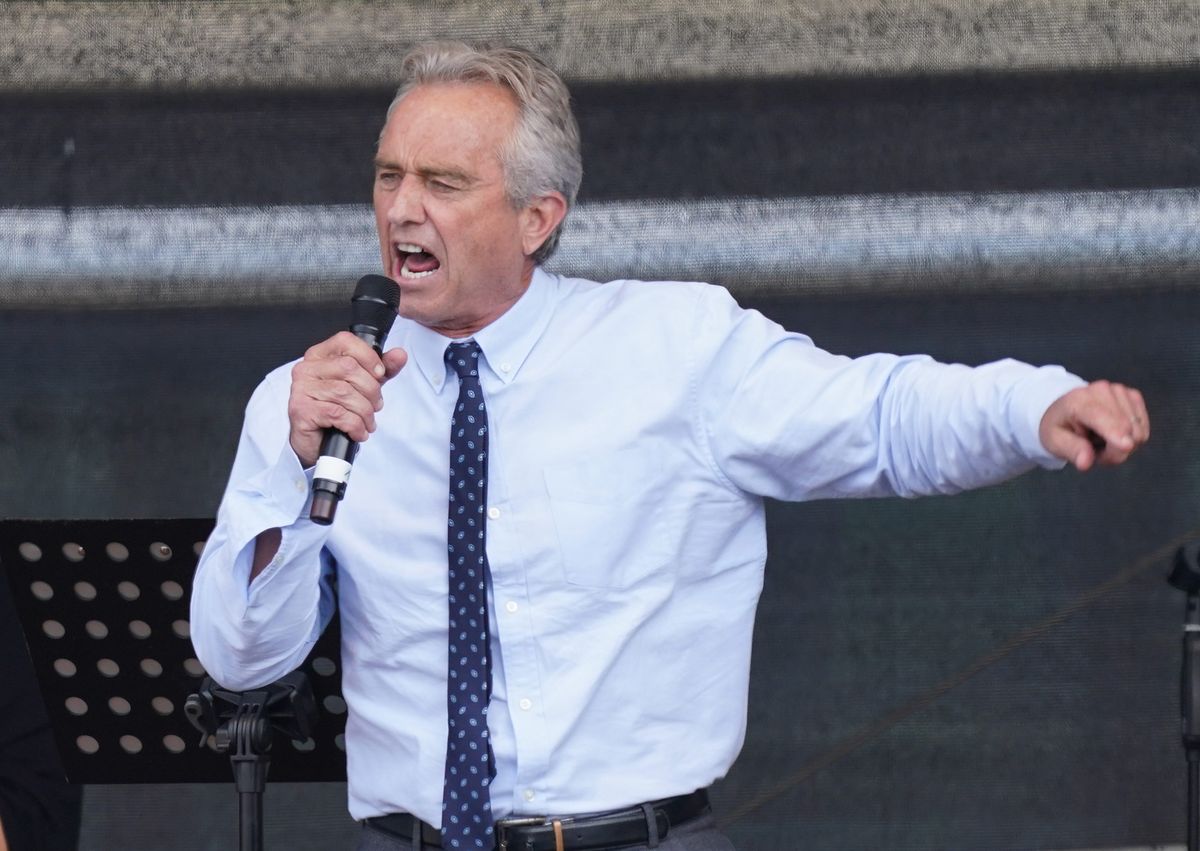

Take Robert F. Kennedy, Jr. A leading anti-vaccine conspiracy theorist, Kennedy lost his Instagram account in January 2021 when he tried to scare people away from getting the COVID-19 vaccine. His nonprofit organization, Children’s Health Defense, lost its account the following year for falsely warning that the COVID vaccine harmed people’s organs and was dangerous to pregnant women.

Platforms establish policies against spreading medical misinformation because social networks can spread information at high speed and with few checks on its accuracy. It’s particularly important to enforce those policies on large accounts like Kennedy’s, which has more than 770,000 followers: platforms tend to recommend such accounts’ posts more often, giving fear-mongers like Kennedy unearned reach.

COVID has caused more than 1.1 million deaths in the United States alone, and 6.1 million hospitalizations; throughout 2021, when Kennedy was posting, the majority of people who died had not been vaccinated.

If you or I went on Instagram and created daily posts saying the COVID vaccines are harmful, we’d likely lose our accounts just like Kennedy did. But now he’s launched a bid for the presidency that looks to have about as much chance as Connor Roy did in Succession. And presto: he has his Instagram account back.

“As he is now an active candidate for president of the United States, we have restored access to Robert F. Kennedy, Jr.’s, Instagram account,” Meta told the Washington Post.

Needless to say, Kennedy is also welcome on Twitter, where Elon Musk hosted him in a Spaces event on Monday that offered lunatic counter-programming to Apple’s announcement of the Vision Pro headset. Seemingly confused about the event’s purpose, Kennedy spent a significant portion of the discussion interviewing Musk. When he did get a chance to offer his own views, Kennedy suggested that anti-depressants cause school shootings and that COVID was a bioweapon.

The traditional defense for offering candidates a platform like this, no matter how noxious their views, is that sunlight is the best disinfectant. Armed with the knowledge that Kennedy is crazier than a soup sandwich, the electorate can go forth and ensure that he does not win the Democratic nomination.

And that’s true, so far as it goes. But I’d be surprised if even Kennedy thinks he can win the nomination. The real point of running for office is to draw more attention to his harmful views — and platforms have now agreed to help with his project, congratulating themselves for being stalwarts of democracy even as candidates like Kennedy play them for fools.

II.

One function Musk now serves in the tech ecosystem is to give cover to other companies seeking to make unpalatable decisions. Across a variety of dimensions, Musk has moved fastest and loudest, and when others have followed, the response has been barely a whimper.

Mass layoffs, stricter job performance requirements, a war on remote work, paid verification for social accounts — all of these served as a kind of aphrodisiac for other Silicon Valley CEOs, who proceeded to implement their own, slightly softer versions of Musk’s cultural reset.

Most recently, Twitter’s decaying policy and enforcement systems have proven to be enticing for other social platforms.

Last month, for example, Musk told an interviewer that users who made false claims about the 2020 election being stolen “would be corrected.” But there was no accompanying effort to make that happen. And so, that same week, the top 10 posts promoting a rigged election narrative racked up a collective 43,000 retweets, the Associated Press reported.

As Musk was surely not aware, his predecessors had sought to unwind the company’s enforcement of 2020 election lies. In January 2022, CNN reported to general surprise that Twitter had abandoned its old policy in March 2021. Enforcement measures were intended to operate only until the next president was inaugurated, a spokeswoman said at the time, and no longer.

In any case, Twitter’s peers took notice of its reversal and chose to follow suit. In February, Meta restored Donald Trump’s accounts, and upon reinstating him said it would no longer prevent users from lying about the 2020 election. And on Friday, YouTube announced that it wouldn’t, either.

Here’s Shannon Bond at NPR:

The Google-owned video platform said in a blog post that it has taken down "tens of thousands" of videos questioning the integrity of past U.S. presidential elections since it created the policy in December 2020.

But two and a half years later, the company said it "will stop removing content that advances false claims that widespread fraud, errors, or glitches occurred in the 2020 and other past U.S. Presidential elections" because things have changed. It said the decision was "carefully deliberated."

"In the current environment, we find that while removing this content does curb some misinformation, it could also have the unintended effect of curtailing political speech without meaningfully reducing the risk of violence or other real-world harm," YouTube said.

YouTube provided no evidence for its assertion that hosting and promoting 2020 election lies would not “meaningfully” increase the risk of harm. It seems curious, given the events of January 6, the ongoing threats to election workers, and the fact that about half of Americans didn’t think votes in the midterm elections would be counted properly.

And sure, tech platforms aren’t the only place you’ll find Trump repeating his old election lies. Fox News was nearly sued out of existence for the whoppers that aired on its network, and more recently CNN hosted a town hall with the former president in which the accompanying corrections for his ravings ran into the thousands of words.

But it’s one thing to host a single ill-considered town hall in the name of ratings, and another to volunteer to serve in perpetuity as a digital library for all the election lies that candidates and their surrogates see fit to upload. YouTube’s decision represents a massive in-kind donation of storage and bandwidth to the same forces that are attempting to ban video platforms and that recently almost eliminated the Section 230 protections that YouTube depends on.

On one hand, the Big Lie was never going to be solved at the level of tech policy. When 147 members of Congress are voting to overturn the results of a free and fair election, it’s clear that the rot goes much deeper than whatever is being posted on Twitter and YouTube.

At the same time, I find it more than a little grim that, little by little, Big Tech has opted out of the fight. Two years after the Capitol attacks, it’s easy to forget how close we came to losing our democracy. In the aftermath of January 6, platforms came together to promote a shared sense of reality and reduce the risk of further violence. Trumpists and right-wingers responded with a sustained DDoS attack on the truth until, one by one, platforms got tired of fighting it.

And so here we are.

On January 9, 2021, the historian Timothy Snyder wrote this about the ongoing danger of the Big Lie:

Trump’s coup attempt of 2020-21, like other failed coup attempts, is a warning for those who care about the rule of law and a lesson for those who do not. His pre-fascism revealed a possibility for American politics. For a coup to work in 2024, the breakers will require something that Trump never quite had: an angry minority, organized for nationwide violence, ready to add intimidation to an election. Four years of amplifying a big lie just might get them this. To claim that the other side stole an election is to promise to steal one yourself. It is also to claim that the other side deserves to be punished.

We are now two years into the amplification of that Big Lie. And one of the last checks on its viral promotion — the willingness of tech platforms to deny their services to those who endorsed it — has now fallen.

Among a certain kind of libertarian-leaning tech executive, criticism of disinformation on social networks has almost always been cause to roll the eyes: magical thinking from bed-wetting liberals who believe dangerous ideas can be snuffed out through censorship alone.

My fear is that the next two years will reveal that this attitude reflects a magical thinking of its own — that offering the most powerful communications tools ever devised to the enemies of democracy, allowing them to nibble away at the fabric of reality without consequence, will somehow fail to affect the society that they seek to unmake.

We are in for an ugly time. And should the worst happen, I hope we remember this: the moment when tech platforms, having briefly banded together to do the right thing, looked each other in the eye and one by one all gave up.

Governing

- The SEC expanded its legal assault on crypto, accusing Coinbase of operating an illegal securities exchange just one day after suing Binance. (Austin Weinstein, Allyson Versprille and Muyao Shen / Bloomberg)

- A former ByteDance executive claimed that a committee of Chinese Communist Party members accessed the TikTok data of civil rights activists in Hong Kong during the city’s widespread protests in 2018. (Georgia Wells / WSJ)

- Internal company documents show the extent to which 4chan’s loose content moderation approach has made the platform a breeding ground for violent ideologies. (Justin Ling / Wired)

- Rep. Jim Jordan is requesting documents and demanding meetings with disinformation experts after alleging collusion with government officials to suppress conservative speech. Ridiculous harassment of academics by an elected official here. (Naomi Nix and Joseph Menn / The Washington Post)

- A profile of Google general counsel Halimah DeLaine Prado details the lawyer’s recent successes defending Section 230 and the looming fights over generative AI and content moderation on the horizon. (Emily Birnbaum / Bloomberg)

- Microsoft agreed to pay the Federal Trade Commission $20 million as part of a child privacy settlement after being charged with collecting and retaining information on Xbox users without proper consent. (Kanishka Singh / Reuters)

- Twitter admitted in a court filing that the insinuations of U.S. government interference on the platform found in the Twitter Files are largely unfounded as it tries to now defend against a Trump lawsuit. Trump’s legal team is trying to reopen a 2021 case by claiming the Twitter Files are evidence of a “widespread censorship campaign.” (Mike Masnick / Techdirt)

- Black soccer players, many of whom face racist abuse online, are turning to an AI-powered filtering tool from the company GoBubble to contend with the torrent of online hate speech. (Steve Douglas and Jerome Pugmire / Associated Press)

Industry

- The Vision Pro may not become a viable alternative to the iPhone for at least five more years as Apple strives to improve battery life, add cellular connectivity, and shrink the design. (Mark Gurman / Bloomberg)

- Stratechery’s Ben Thompson used the Apple Vision Pro and found the experience “extraordinary,” and argues that the potential for the device is larger than he previously imagined. (Ben Thompson / Stratechery)

- Apple acquired AR startup Mira, which has contracts with the U.S. military and supplied Nintendo with headsets for its Mario Kart theme park ride. (Zoe Schiffer and Alex Heath / The Verge)

- Instagram is testing a feature similar to Snapchat’s My AI bot that adds a generative AI assistant to its direct messaging tab. (Sabrina Ortiz / ZDNet)

- Marc Andreessen claims that AI ‘will save the world’ in a bullish blog post arguing against AI risks like automation and inequality and providing his framework for industry regulation. You can get excited about a lot of things if you convince yourself that every valid concern about a new technology is actually just a moral panic. (Marc Andreessen / a16z)

- Apple avoided saying “AI” during its WWDC keynote, and instead preferred to describe how it is using machine learning to improve its various software products. (Benj Edwards / Ars Technica)

- Apple software chief Craig Federighi said the rise of AI — and with it, increasingly sophisticated deepfakes — is informing the company’s approach to new privacy and security features. (Michael Grothaus / Fast Company)

- Publishing trade association Digital Content Next has drafted guidelines warning that generative AI systems trained on copyrighted works likely go beyond fair use and are violating copyright law. Can we just skip to the lawsuit already? (Ryan Barwick / Marketing Brew)

- Reddit is laying off 5% of the company, or about 90 employees, and slowing hiring as part of a restructuring effort designed to help the company break even next year. (Sarah E. Needleman / WSJ)

- Reddit defended its new API pricing policy for third-party apps, saying it needs to be “fairly paid” for high usage and that it determined pricing based on the costs it’s been incurring. (Priya Anand / Bloomberg)

Those good tweets

For more good tweets every day, follow Casey’s Instagram stories.


Talk to us

Send us tips, comments, questions, and stable tech policy: casey@platformer.news and zoe@platformer.news.