Why Platformer is leaving Substack

We’ve seen this movie before — and we won’t stick around to watch it play out

After much consideration, we have decided to move Platformer off of Substack. Over the next few days, the publication will migrate to a new website powered by the nonprofit, open-source publishing platform Ghost. If you already subscribe to Platformer and wish to continue receiving it, you don’t need to do anything: your account will be ported over to the new platform.

If all goes well, following the Martin Luther King Jr. holiday on Monday, you’ll receive the Tuesday edition of Platformer as normal. If you have any issues with your subscription after that, please let us know.

Today let’s talk about how we came to this decision, the debate over how platforms should moderate content, and why we think we’re better off elsewhere.

I.

When I launched Platformer on Substack in 2020, it was not in the belief that we would be here forever. Tech platforms come and go; in the meantime, they can also change in ways that make staying there impossible for the creators that rely on them. For this reason, I almost launched Platformer on a custom-built stack of services centered on WordPress, the way my inspiration Ben Thompson had done for Stratechery.

But Substack had some compelling advantages of its own. It was impressively fast and easy to set up. It paid to design Platformer’s logo. It offered me a year of healthcare subsidies, and ongoing legal support.

I also felt a personal connection to Substack’s co-founders, who believed that Platformer would succeed even before it had a name. They convinced me that I could thrive on their platform, and offered me a welcome boost in confidence as I considered leaving the best job I ever had to strike out on my own.

In the three years since, Substack has been a mostly happy home. Platformer has grown tremendously over that time, from around 24,000 free subscribers to more than 170,000 today. Our paid subscribers have allowed me to create new jobs in journalism. I’m proud of the work we do here.

Over that same period, Substack has faced occasional controversies over its laissez-faire approach to content moderation. The platform hosts a wide range of material I find distasteful and offensive. But for a time, the distribution of that material was limited to those who had signed up to receive it. In that respect, I did not view the decision to host Platformer on Substack as being substantially different from hosting it on, for example, GoDaddy.

But as I wrote earlier this week, Substack’s aspirations now go far beyond web hosting. It touts the value of its network of publications as a primary reason to use its product, and has built several tools to promote that network. It encourages writers to recommend other Substack publications. It sends out a weekly digest of publications for readers to consider subscribing to. And last year it launched a Twitter-like social network called Notes that highlights posts from around the network, regardless of whether you follow those writers or not.

Not all of you use these features. Some of you might not have seen them. But I can speak to their effectiveness: In 2023, we added more than 70,000 free subscribers. While I would love to credit that growth exclusively to our journalism and analysis, I believe we have seen firsthand how quickly and aggressively tools like these can grow a publication.

And if Substack can grow a publication like ours that quickly, it can grow other kinds of publications, too.

II.

In November, when Jonathan M. Katz published his article in The Atlantic about Nazis using Substack, it did not strike me as cause to immediately leave Substack. All platforms host problematic and harmful material; I assumed Substack would remove praise for Nazis under its existing policy that “Substack cannot be used to publish content or fund initiatives that incite violence based on protected classes.”

And so, after reading the open letter from 247 writers on the platform calling for clarity on the issue, I waited for a response.

The response, from Substack co-founder Hamish McKenzie, arrived on December 21. It stated that Substack would remove accounts if they made credible threats of violence but otherwise would not intervene. “We don't think that censorship (including through demonetizing publications) makes the problem go away — in fact, it makes it worse,” he wrote. “We believe that supporting individual rights and civil liberties while subjecting ideas to open discourse is the best way to strip bad ideas of their power.”

This was the moment when I started to think Platformer would need to leave Substack. I’m not aware of any major US consumer internet platform that does not explicitly ban Nazi hate speech and praise for Nazis, much less one that welcomes Nazis to set up shop and start selling subscriptions.

But suddenly, here we were.

I didn’t want to leave Substack without first getting my own sense of the problem. I reached out to journalists and experts in hate speech and asked them to share their own lists of Substack publications that, in their view, advanced extremist ideologies. With my colleagues Zoë Schiffer and Lindsey Choo, I reviewed them all and attempted to categorize them by size, ideology, and other characteristics.

In the end, we found seven that conveyed explicit support for 1930s German Nazis and called for violence against Jews, among other groups. Substack removed one before we sent it to them. The others we sent to the company in a spirit of inquiry: will you remove these clear-cut examples of pro-Nazi speech? The answer to that question was essential to helping us understand whether we could stay.

It was not, however, a comprehensive review of hate speech on the platform. And to my profound disappointment, before the company even acted on what we sent them, Substack shared the scope of our findings with another, friendlier publication on the platform, along with the information that these publications collectively had few subscribers and were not making money. (It later apologized to me for doing this.)

The point of this leak, I believe, was to make the entire discussion about Nazis and hate speech on Substack appear laughably small: a mountain made out of a molehill by bedwetting liberals.

To us, the six publications we had submitted had only ever been a question: would Substack, in the most clear-cut of all speech cases, do the bare minimum?

In the end, it did, in five out of six cases. As all of this unfolded, I spoke twice with Substack’s co-founders. And while they asked that those conversations be off the record, my understanding from our conversations — based on material they had shared with me in writing — was that in the future they would regard explicitly Nazi and pro-Holocaust material to be a violation of their existing policies.

But on Tuesday, when I wrote my story about the company’s decision to remove five publications, that language was missing from their statement. Instead, the company framed the entire discussion as having been about the handful of publications I had sent them for review.

I attempted to write a straightforward news story about all this, and wound up infuriating many readers. On the right, I faced criticism for making a fuss out of Substack hosting a handful of small Nazi publications. On the left, I faced even louder criticism for (in their view) appearing to celebrate and validate Substack’s removal of those same publications. (I wrote Tuesday that “Substack’s removal of Nazi publications resolves the primary concern we identified here last week.” I regret using that language. What I should have said was “Substack did the basic thing we asked it to,” and then emphasized that it did not address our larger concerns. Which I did go on to say, though not with the force that in hindsight I wish I had.)

I’m happy to take my lumps here. I just want to say again that to me, this was never about the fate of a few publications: it was about whether Substack would publicly commit to proactively removing pro-Nazi material. Up to the moment I published on Tuesday, I believed that the company planned to do this. But I no longer do.

From there, our next move seemed clear. But first I wanted to consult our readers, whose advice and support I have been so lucky to rely on over these past few years. Asking readers for their thoughts proved to be surprisingly controversial, especially in the Sidechannel Discord, where some of you wondered whether I was seeking a fig leaf of approval that we could use to justify staying here. But Platformer has as its readers some of the world’s smartest minds in content moderation and trust and safety — I sincerely wanted to get your thoughts before making a final decision.

Over the next 48 hours, the Platformer community raised a variety of sensible objections to how Substack had handled this issue. You pointed out that Substack had not changed its policy; that it did not commit explicitly to removing pro-Nazi material; that it seemed to be asking its own publications to serve as permanent volunteer moderators; and that in the meantime all of the hate speech on the platform remains eligible for promotion in Notes, its weekly email digest, and other algorithmically ranked surfaces.

In emails, comments, Substack Notes and callouts on social media, you’ve made your view clear: Platformer should leave Substack. We waited a day to announce our move as we finalized plans with Ghost and began our migration. But today we can say clearly that we agree with you.

Substack’s tools are designed to help publications grow quickly and make lots of money — money that is shared with Substack. That design demands responsible thinking about who will be promoted, and how.

The company’s defense boils down to the fact that nothing that bad has happened yet. But we have seen this movie before, from Alex Jones to anti-vaxxers to QAnon, and will not remain to watch it play out again.

III. Frequently asked questions about Substack and free speech

We’re still only talking about six newsletters. Aren’t you overreacting?

To be clear, there are a lot more than six bad publications on Substack: our analysis found dozens of far-right publications advocating for the great replacement theory and other violent ideologies.

But until Substack makes it clear that it will take proactive steps to remove hate speech and extremism, the current size of the problem isn’t relevant. The company’s edgelord branding ensures that the fringes will continue to arrive and set up shop, and its infrastructure creates the possibility that those publications will grow quickly. That’s what matters.

What about the fact that censorship will not make extremism go away, and might even make it worse?

We didn’t ask Substack to solve racism. We asked it to give us an easy, low-drama place to do business, and to commit to not funding and accelerating the growth of hate movements. Ultimately we did not get either.

Aren’t you actually helping Nazis here, making their ideology seem alluring by turning it into forbidden knowledge?

Genocidal anti-semitism is hardly forbidden knowledge; you can find it just about anywhere. The Nazi worldview is taught in schools, figures prominently in popular culture, and endures in forums all across the internet. I believe Nazi ideology is made more appealing by the people who spread it than it is by the people who choose not to host it.

OK fine, but aren’t calls to ban Nazis a slippery slope? If Substack caves in here, there will be no end to what people like you call for them to remove.

The slippery-slope argument here is based on the fantasy that if you simply draw the right line, you will never have to revisit it. The fact is that we are constantly renegotiating the boundaries of speech based on values, norms, and threats of persecution. The problem is that renegotiating those boundaries is exhausting, expensive, and makes everyone mad, which is why most people prefer to shout “slippery slope!” and then never think about it again.

Previously you said you would submit your findings to Stripe, Substack’s payments processor. Doesn’t it set a bad precedent to lobby for content moderation at the level of payments?

Certainly I would not endorse a move like that in most cases. But as with Substack, I approached Stripe in the spirit of journalistic inquiry. One of its customers appeared to have said Nazis are free to set up shop there. Stripe’s policies forbid the funding of violent movements. How did Stripe’s policy square with Substack’s?

In any case, Stripe never responded to us. But it felt like it was worth sending an email.

Aren’t you going to have this exact same problem on Ghost, or wherever else you host your website?

As open-source software, Ghost is almost certainly used to publish a bunch of things we disagree with and find offensive. But it differs from Substack in some important respects.

One, its terms of service ban content that “is violent or threatening or promotes violence or actions that are threatening to any other person.” Ghost founder and CEO John O’Nolan committed to us that Ghost’s hosted service will remove pro-Nazi content, full stop. If nothing else, that’s further than Substack will go, and makes Ghost a better intermediate home for Platformer than our current one.

Two, Ghost tells us it has no plans to build the recommendation infrastructure Substack has. It does not seek to be a social network. Instead, it seeks only to build good, solid infrastructure for internet businesses. So even if Nazis were able to set up shop on Ghost, they would be denied access to the growth infrastructure that Substack provides them. Among other benefits, that means there is nowhere on Ghost where their content will appear next to Platformer.

I actually reached these conclusions way before you did, and moved off Substack a long time ago. Is there anything you would like to say to me?

Good job! Thank you for your leadership.

IV.

I want to end on a note of gratitude.

We rarely see boom times in media, but these days the mood is particularly bleak. From smaller publications to giant ones, it can seem like almost everyone is laying people off and shrinking their ambitions. Against that backdrop, Substack has been a bright spot. Each week, some of the best things that I read are on the platform. It has made a meaningful, positive contribution to the culture, while also committing to allowing writers like me to leave when we want and take our customers with us when we do.

That takes talent, hard work, and principles. It counts for something. And I’m grateful to Substack for all the help it has given me and Platformer.

But over the past three years, another wonderful thing happened in media. Other publishing platforms sprang up featuring more robust content moderation policies and terms friendlier to business. (Platformer will save tens of thousands of dollars a year by no longer having to share 10 percent of its revenue with Substack.)

Meanwhile, as our paid readership grew into the thousands, we became less dependent on the goodwill of our host. So many times over the past two weeks, readers have written to say that they will follow Platformer anywhere it goes. Messages like yours have given us the confidence that, whatever challenges we might face as we leave this network, Platformer will endure.

Substack deserves credit for kicking off a revolution in independent publishing. But the world it helped to birth is now much bigger than its own platform. Next week we will move to a new home in that world. One where readers can feel confident their money is not going to accelerate the growth of hate movements. And one where we are no longer called upon constantly to defend an ideology we do not believe in.

I’m sad our time on Substack is ending in this way. But I’m also hopeful that, years from now, we will look back on today as a new beginning for our journalism.

And in the meantime, whatever else this decision turns out to mean for Platformer, I am certain in the knowledge that it is the right thing to do.

On the podcast this week: Kevin interrogates me about the decision to leave Substack. Then, the Wall Street Journal’s Kirsten Grind stops by to discuss her investigation into Elon Musk’s drug use. And finally, researcher Felix Wong joins us to explain how AI helped his team identify a new class of drugs that could potentially treat MRSA.

Apple | Spotify | Stitcher | Amazon | Google | YouTube

Governing

- On Wednesday, SEC chair Gary Gensler said the agency has reluctantly approved about a dozen spot bitcoin ETF proposals, after a loss in court. (Jesse Hamilton / CoinDesk)

- The move came after the SEC account on X was hacked to falsely say that bitcoin ETFs had already been approved. (Nikhilesh De, Krisztian Sandor / CoinDesk)

- X said the problem didn’t stem from a breach of its systems, but rather from the hacker obtaining a phone number associated with the account. (Joanna Tan / CNBC)

- The FBI is investigating the issue, the SEC says. (Sarah Wynn / The Block)

- OpenAI, Anthropic and Cohere have reportedly had secret back-channel talks with Chinese AI experts, with the knowledge of the White House, as well as the UK and Chinese governments. This is … probably good? (Madhumita Murgia / Financial Times)

- Pennsylvania is allowing some state employees to use ChatGPT Enterprise, in a pilot program with OpenAI. (Maxwell Zeff / Gizmodo)

- The US International Trade Commission wants to reinstate the Apple Watch ban, opposing Apple’s motion to pause the ban during its appeal. (Joe Rossignol / MacRumors)

- Non-consensual deepfake porn images of female celebrities reportedly appeared among the top image results on Google, Bing, and other search engines when users searched for those celebrities’ deepfakes with safe search turned off. (Kat Tenbarge / NBC News)

- 4chan users who are manipulating images and audio to spread hateful content are giving a preview into how dangerous AI can be in the hands of bad actors. (Stuart A. Thompson / The New York Times)

- Google is officially endorsing the right to repair and asked regulators to ban “parts pairing,” a practice that limits the ability of independent shops to repair devices by making necessary parts unavailable to them. The company is set to testify on the issue at a hearing in Oregon for a state bill. (Jason Koebler / 404 Media)

- Amazon did not offer any concessions in response to the European Commission’s antitrust concerns surrounding its planned $1.4 billion iRobot merger. (Josh Sisco and Aoife White / POLITICO)

- A Meta staffer says she was put under investigation for possible violations of employee policy after raising concerns over alleged pro-Palestinian censorship by the company. (Hannah Murphy and Cristina Criddle / Financial Times)

- Apple pulled at least nine crypto exchange apps from its India App Store, including Binance and Kraken, after authorities said they were operating illegally. (Manish Singh / TechCrunch)

- ByteDance is shutting down music streaming service Resso in India, following its removal from app stores under a government order. (Vikas Sn and Aihik Sur / Moneycontrol)

- The biggest risk in global elections is AI-derived misinformation and disinformation, according to a report by the World Economic Forum. (Karen Gilchrist / CNBC)

- Ilan Shor, a Moldovan oligarch who was sanctioned by the US, is once again pushing pro-Kremlin ads on Facebook, despite Meta’s previous promise to stop him. (David Gilbert / WIRED)

Industry

- The year has unfortunately begun with another big round of layoffs across the tech industry:

- Hundreds working on the Google Assistant software were laid off amidst the company’s focus on Bard. (Louise Matsakis / Semafor)

- Google also made cuts to its hardware division, with the majority of layoffs impacting a team that works on augmented reality. (Nico Grant / The New York Times)

- Google’s Devices and Services team in charge of Nest, Pixel and Fitbit hardware is being reorganized, with Fitbit co-founders James Park and Eric Friedman leaving. (Abner Li / 9to5Google)

- A union representing Alphabet workers said that the layoffs affected more than 1,000 employees. (Jon Victor / The Information)

- Amazon is cutting hundreds of jobs in its Prime Video and MGM Studios divisions, citing a reprioritization of investments. (Alex Weprin / The Hollywood Reporter)

- Twitch is laying off about 500 workers – 35 percent of its staff – in the latest round of job cuts. (Cecilia D’Anastasio / Bloomberg)

- Discord is reportedly laying off about 17 percent of its staff, about 170 workers, with CEO Jason Citron saying the company grew too quickly in previous years. (Alex Heath / The Verge)

- Meta is cutting many technical program manager positions at Instagram, telling workers they can reinterview for other jobs. (Kali Hays, Hugh Langley, and Sydney Bradley / Business Insider)

- X removed support for NFT profile pictures for Premium subscribers along with all descriptions of the feature. This story continues the trend of everything I ever wrote about crypto looking terrible in retrospect. (Ivan Mehta / TechCrunch)

- AI has become Mark Zuckerberg’s top priority over the metaverse, and his close involvement in Meta’s AI research group FAIR is paying dividends so far. An uncommonly dishy look inside Meta today; highly recommended. (Aisha Counts and Sarah Frier / Bloomberg)

- Google DeepMind’s drug discovery spinout, Isomorphic Labs, is aiming to halve average drug discovery times from five years to two through Big Pharma partnerships. (Cristina Criddle, Hannah Kuchler and Madhumita Murgia / Financial Times)

- First-aid tutorials from authoritative sources, including how to perform the Heimlich maneuver and CPR, will now be displayed at the top of YouTube search results. Would love to see this practice applied to other queries that would benefit from seeing high-quality sources up top! (Amrita Khalid / The Verge)

- 17 features with low usage will be removed from Google Assistant. (Abner Li / 9to5Google)

- Gboard now has a physical keyboard toolbar on Android tablets. (Abner Li / 9to5Google)

- Google is eliminating fees for customers switching to other cloud computing providers. Other providers may now feel pressure to do the same. (Dina Bass / Bloomberg)

- Google Cloud is introducing several generative AI tools for retailers to improve online shopping and other operations. (Alex Koller / CNBC)

- OpenAI launched its GPT store, for paid ChatGPT users to buy and share custom chatbots. (Rachel Metz / Bloomberg)

- A new ChatGPT subscription, ChatGPT Team, will let smaller, service-oriented teams have a workspace and tools for team management. (Kyle Wiggers / TechCrunch)

- OpenAI is reportedly in talks to license content from CNN, Fox Corp. and Time in order to train ChatGPT. (Shirin Ghaffary, Graham Starr and Brody Ford / Bloomberg)

- Snap CEO Evan Spiegel predicts that social media is dead and that Snap will “transcend” the smartphone, according to a leaked internal memo. He took a swing at rivals, noting they are “connecting pedophiles and fueling insurrection.” (Kali Hays / Business Insider)

- Faye Iosotaluno is the new chief executive officer of Tinder. She was previously the company’s chief operating officer. (Natalie Lung / Bloomberg)

- Walmart launched a new generative AI tool using Microsoft’s AI models that lets customers search by use case rather than by specific item: “Food for a family picnic,” for example. (Siddharth Cavale / Reuters)

- Startup Rabbit sold out its first launch of the R1 pocket AI companion, a device that’s a “universal controller for apps”. Lots of buzz about this cute little gadget this week, though the economics of a subscription-free service that talks to GPT-4 all the time seem unsustainable. (Emma Roth / The Verge)

- Consumer spending on apps increased by 3 percent year-over-year, according to Data.ai’s annual report, with non-game apps like TikTok seeing an increase in spending. But downloads remained flat. (Sarah Perez / TechCrunch)

- Software engineers are saying the job market has become extremely competitive over the past year, with many saying AI will lead to less hiring. (Maxwell Strachan / Vice)

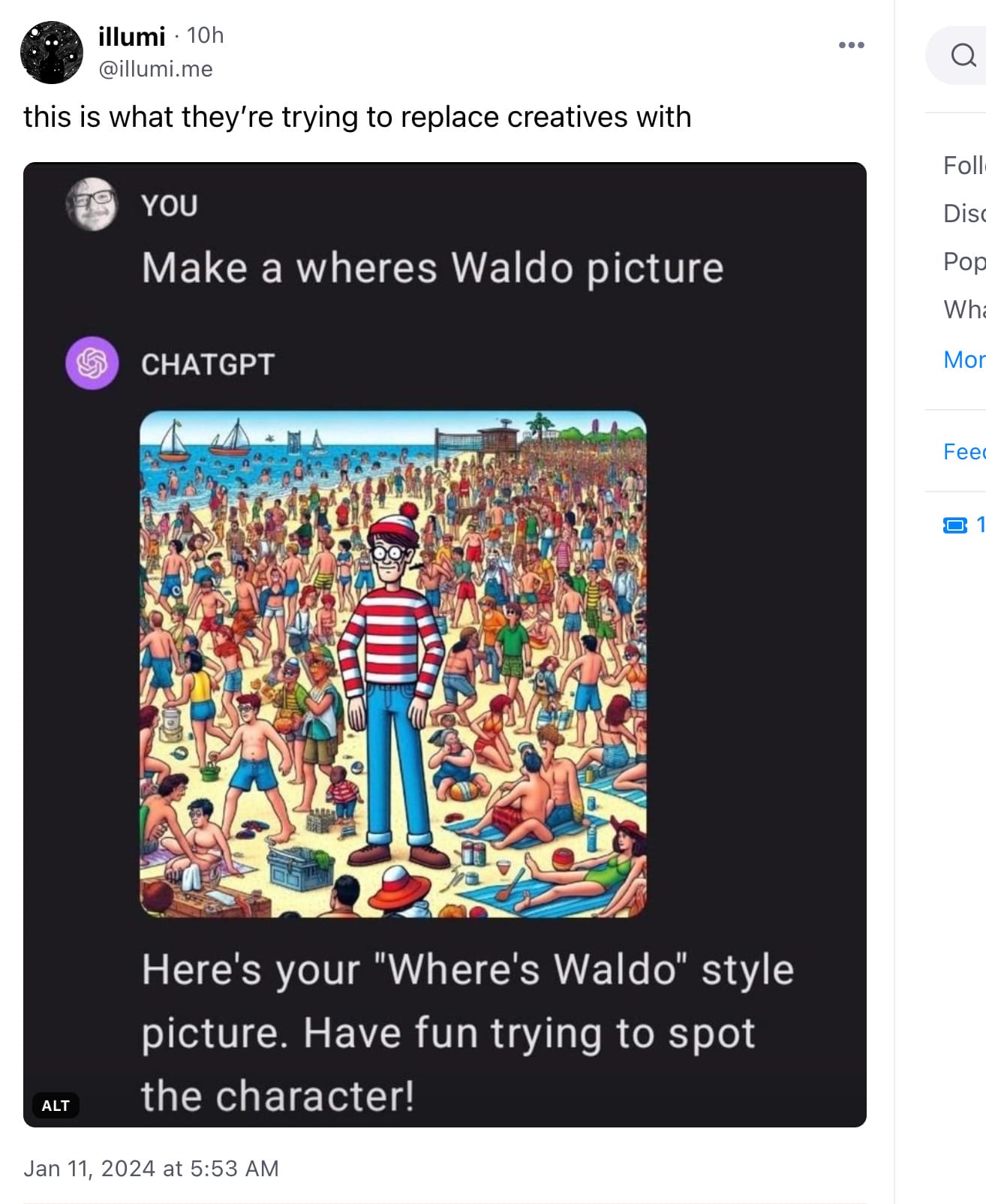

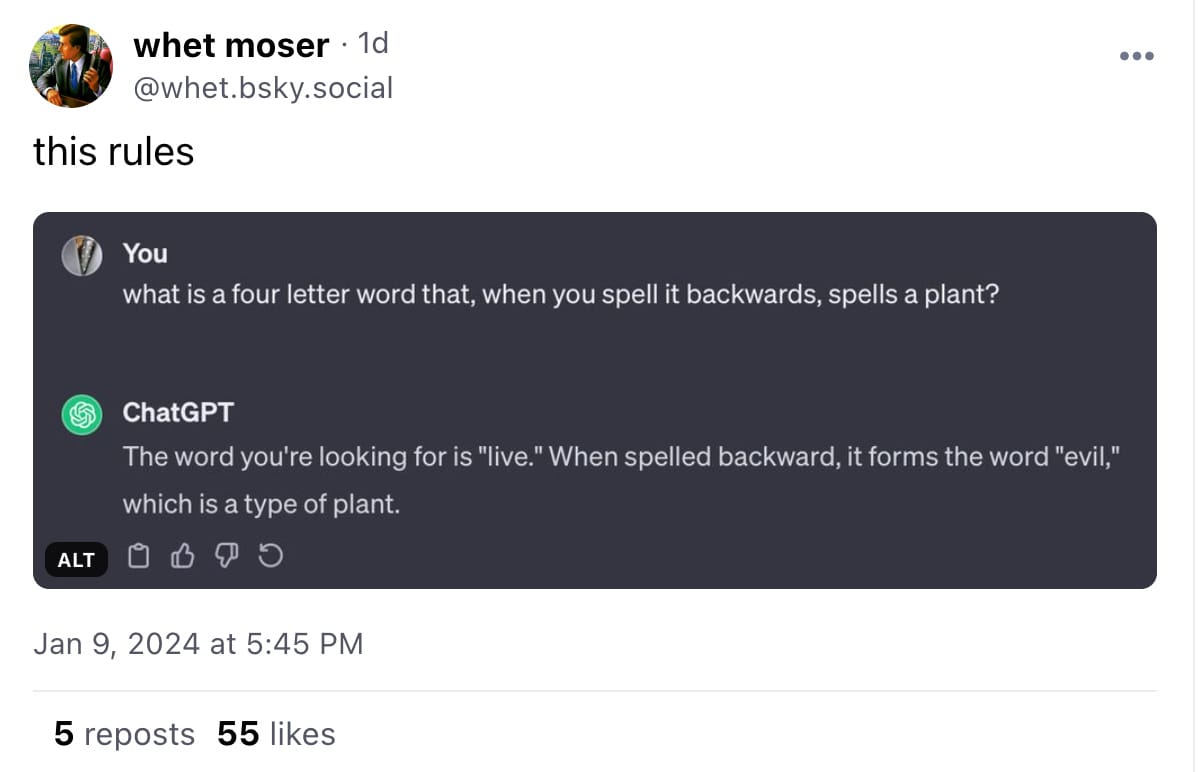

Those good posts

For more good posts every day, follow Casey’s Instagram stories.

Talk to us

Send us tips, comments, questions, and questions about Ghost: casey@platformer.news and zoe@platformer.news.