The AI is eating itself

Early notes on how generative AI is affecting the internet

Today let’s look at some early notes on the effect of generative artificial intelligence on the broader web, and think through what it means for platforms.

At The Verge, James Vincent surveys the landscape and finds a dizzying number of changes to the consumer internet over just the past few months. He writes:

Google is trying to kill the 10 blue links. Twitter is being abandoned to bots and blue ticks. There’s the junkification of Amazon and the enshittification of TikTok. Layoffs are gutting online media. A job posting looking for an “AI editor” expects “output of 200 to 250 articles per week.” ChatGPT is being used to generate whole spam sites. Etsy is flooded with “AI-generated junk.” Chatbots cite one another in a misinformation ouroboros. LinkedIn is using AI to stimulate tired users. Snapchat and Instagram hope bots will talk to you when your friends don’t. Redditors are staging blackouts. Stack Overflow mods are on strike. The Internet Archive is fighting off data scrapers, and “AI is tearing Wikipedia apart.” The old web is dying, and the new web struggles to be born.

The rapid diffusion of text generated by large language models around the web cannot be said to come as any real surprise. In December, when I first covered the promise and the perils of ChatGPT, I led with the story of Stack Overflow getting overwhelmed with the AI’s confident bullshit. From there, it was only a matter of time before platforms of every variety began to experience their own version of the problem.

To date, these issues have been covered mostly as annoyances. Moderators of various sites and forums are seeing their workloads increase, sometimes precipitously. Social feeds are becoming cluttered with ads for products generated by bots. Lawyers are getting in trouble for unwittingly citing case law that doesn’t actually exist.

For every paragraph that ChatGPT instantly generates, it seems, it also creates a to-do list of facts that need to be checked, plagiarism to be considered, and policy questions for tech executives and site administrators.

When GPT-4 came out in March, OpenAI CEO Sam Altman tweeted: “it is still flawed, still limited, and it still seems more impressive on first use than it does after you spend more time with it.” The more we all use chatbots like his, the more this statement rings true. For all of the impressive things it can do (and if nothing else, ChatGPT is a champion writer of first drafts), there also seems to be little doubt that it is corroding the web.

On that point, two new studies offered some cause for alarm. (I discovered both in the latest edition of Import AI, the indispensable weekly newsletter from Anthropic co-founder and former journalist Jack Clark.)

The first study, which had an admittedly small sample size, found that crowd-sourced workers on Amazon’s Mechanical Turk platform increasingly admit to using LLMs to perform text-based tasks. Studying the output of 44 workers using “a combination of keystroke detection and synthetic text classification,” researchers from EPFL write that they “estimate that 33–46% of crowd workers used LLMs when completing the task.” (The task here was to summarize the abstracts of medical research papers, one of the things today’s LLMs are supposed to be relatively good at.)

Academic researchers often use platforms like Mechanical Turk to conduct research in social science and other fields. The promise of the service is that it gives researchers access to a large, willing, and cheap body of potential research participants.

Until now, the assumption has been that they will answer truthfully based on their own experiences. In a post-ChatGPT world, though, academics can no longer make that assumption. Given the mostly anonymous, transactional nature of the assignment, it’s easy to imagine a worker signing up to participate in a large number of studies and outsourcing all their answers to a bot. This “raises serious concerns about the gradual dilution of the ‘human factor’ in crowdsourced text data,” the researchers write.

“This, if true, has big implications,” Clark writes. “It suggests the proverbial mines from which companies gather the supposed raw material of human insights are now instead being filled up with counterfeit human intelligence.”

He adds that one solution here would be to “build new authenticated layers of trust to guarantee the work is predominantly human generated rather than machine generated.” But surely those systems will be some time in coming.

A second, more worrisome study comes from researchers at the University of Oxford, University of Cambridge, University of Toronto, and Imperial College London. It found that training AI systems on data generated by other AI systems — synthetic data, to use the industry’s term — causes models to degrade and ultimately collapse.

While the decay can be managed by using synthetic data sparingly, researchers write, the idea that models can be “poisoned” by feeding them their own outputs raises real risks for the web.
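To get a feel for why a model fed its own output might degrade, here is a toy sketch in Python. It is not the researchers' method: it assumes the "model" is just a bell curve fitted to a small sample, and the function name `simulate` and the parameters `sample_size` and `real_fraction` are made up for illustration. Each generation is trained only on data sampled from the previous generation, optionally mixed with some fresh "human" data.

```python
import random
import statistics


def simulate(generations=500, sample_size=20, real_fraction=0.0, seed=0):
    """Toy loop: fit a Gaussian, sample from it, refit on the samples.

    real_fraction is the (hypothetical) share of each generation's
    training data drawn from the original "human" distribution N(0, 1).
    Returns the fitted standard deviation after each generation.
    """
    rng = random.Random(seed)
    mu, sigma = 0.0, 1.0  # generation 0 matches the real data exactly
    n_real = round(sample_size * real_fraction)
    history = [sigma]
    for _ in range(generations):
        # Synthetic data: sampled from the previous generation's model.
        synthetic = [rng.gauss(mu, sigma) for _ in range(sample_size - n_real)]
        # Real data: sampled from the original distribution.
        real = [rng.gauss(0.0, 1.0) for _ in range(n_real)]
        data = synthetic + real
        # "Train" the next model by re-estimating mean and spread.
        mu = statistics.mean(data)
        sigma = statistics.pstdev(data)
        history.append(sigma)
    return history


closed_loop = simulate(real_fraction=0.0)  # trained only on its own output
mixed = simulate(real_fraction=0.5)        # half of each batch is fresh data
print(f"closed loop spread after 500 generations: {closed_loop[-1]:.3g}")
print(f"mixed spread after 500 generations: {mixed[-1]:.3g}")
```

In the closed loop, small estimation errors compound and the fitted spread shrinks toward zero, so the toy model gradually forgets the tails of the original distribution. Mixing in fresh data each round keeps the spread from vanishing, which loosely mirrors the point about using synthetic data sparingly.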

And that’s a problem, because — to bring together the threads of today’s newsletter so far — AI output is spreading to encompass more of the web every day.

“The obvious larger question,” Clark writes, “is what this does to competition among AI developers as the internet fills up with a greater percentage of generated versus real content.”

When tech companies were building the first chatbots, they could be certain that the vast majority of the data they were scraping was human-generated. Going forward, though, they’ll be ever less certain of that — and until they figure out reliable ways to identify chatbot-generated text, they’re at risk of breaking their own models.

What we’ve learned about chatbots so far, then, is that they make writing easier to do — while also generating text that is annoying and potentially disruptive for humans to read. Meanwhile, AI output can be dangerous for other AIs to consume — and, the second group of researchers predict, will eventually create a robust market for data sets that were created before chatbots came along and began to pollute the models.

In The Verge, Vincent argues that the current wave of disruption will ultimately bring some benefits, even if it’s only to unsettle the monoliths that have dominated the web for so long. “Even if the web is flooded with AI junk, it could prove to be beneficial, spurring the development of better-funded platforms,” he writes. “If Google consistently gives you garbage results in search, for example, you might be more inclined to pay for sources you trust and visit them directly.”

Perhaps. But I also worry the glut of AI text will leave us with a web where the signal is ever harder to find in the noise. Early results suggest that these fears are justified, and that everyone on the internet, no matter their job, may soon find themselves having to exert ever more effort seeking signs of intelligent life.

Talk about this edition with us in Discord: This link will get you in for the next week.

Governing

- The Supreme Court made it more difficult to prosecute cyberstalking by ruling that threatening messages are protected under the First Amendment unless they rise to the level of “true threats.” The liberal justices were concerned the case might chill legal speech if the law disregarded someone’s intent. (Issie Lapowsky / Fast Company)

- The group of TikTok users who filed a lawsuit arguing that Montana’s ban on the app violates their First Amendment rights is being financed by TikTok. (Sapna Maheshwari / New York Times)

- Apple joined Signal and WhatsApp in criticizing the U.K.’s Online Safety Bill and calling it a threat to end-to-end encryption. (Jon Porter / The Verge)

- A Twitter video playback feature copied from TikTok generated controversy over the weekend for serving people violent videos and misinformation. Elon Musk encouraged users to try the feature and inadvertently brought attention to the platform’s algorithmic shortcomings. (Khadijah Khogeer / NBC News)

- Meta launched new child safety features for Messenger that include stricter parental controls, the ability to view a child’s contacts and the option to disable messages from strangers. (Tatum Hunter / The Washington Post)

Industry

- OpenAI plans to build a ChatGPT-powered AI assistant for the workplace, putting it in conflict with partners and customers like Microsoft and Salesforce that are trying to do the same. This was always the obvious risk of white-labeling OpenAI’s tech. (Aaron Holmes / The Information)

- Medical professionals are cautiously optimistic about the benefits of generative AI, specifically around reducing burnout from paperwork and other documentation duties. There are concerns that AI software might introduce errors or fabrications in medical records, though. (Steve Lohr / The New York Times)

- Amazon’s warehouse robots are increasingly automating human-level work, in part by using a new device named Proteus that works alongside humans and a picking bot named Sparrow that can sort products. (Will Knight / Wired)

- TikTok is discontinuing its BeReal clone, called TikTok Now, less than a year after it was announced, in yet another sign of BeReal’s waning relevance. (Jon Porter / The Verge)

- TikTok introduced a new monetization feature that will let creators submit video ads under a brand “challenge,” which might require using a certain prompt or sound. Making ads on spec for big brands and hoping you get enough views to make it worthwhile feels like a grim turn in the creator marketplace! (Aisha Malik / TechCrunch)

- Google often violates its own standards when placing video ads on third-party sites, an outside analysis found, leading some advertisers to demand refunds. Google disputed the claims. (Patience Haggin / Wall Street Journal)

- Google is abandoning its Iris augmented-reality glasses project and will instead focus on making AR software. (Hugh Langley / Insider)

- Amazon-owned Goodreads has become a popular avenue for review bombing campaigns from outraged readers, many of whom try to derail new books before they’re even published. (Alexandra Alter and Elizabeth A. Harris / The New York Times)

- Elon Musk and Mark Zuckerberg’s long-running conflict involves mutual jealousy, according to this report, with Musk envious of Zuckerberg’s wealth and the Meta CEO wishing he had Musk’s (former!) reputation as an innovator. (Tim Higgins and Deepa Seetharaman / WSJ)

- Damus, a decentralized social media app backed by Jack Dorsey, will be removed from the App Store over a cryptocurrency tipping feature that Apple says should qualify for its 30% cut. Damus disagreed, and plans to appeal the removal. (Aisha Malik / TechCrunch)

- Telegram is launching an ephemeral Stories feature next month, responding to years of user requests that the company add it. Finally, a way to ensure your crypto scams disappear from the public record within 24 hours. (Aisha Malik / TechCrunch)

- WhatsApp revealed that its small business-focused app has quadrupled in monthly active users, to more than 200 million, over the past three years. (Ivan Mehta / TechCrunch)

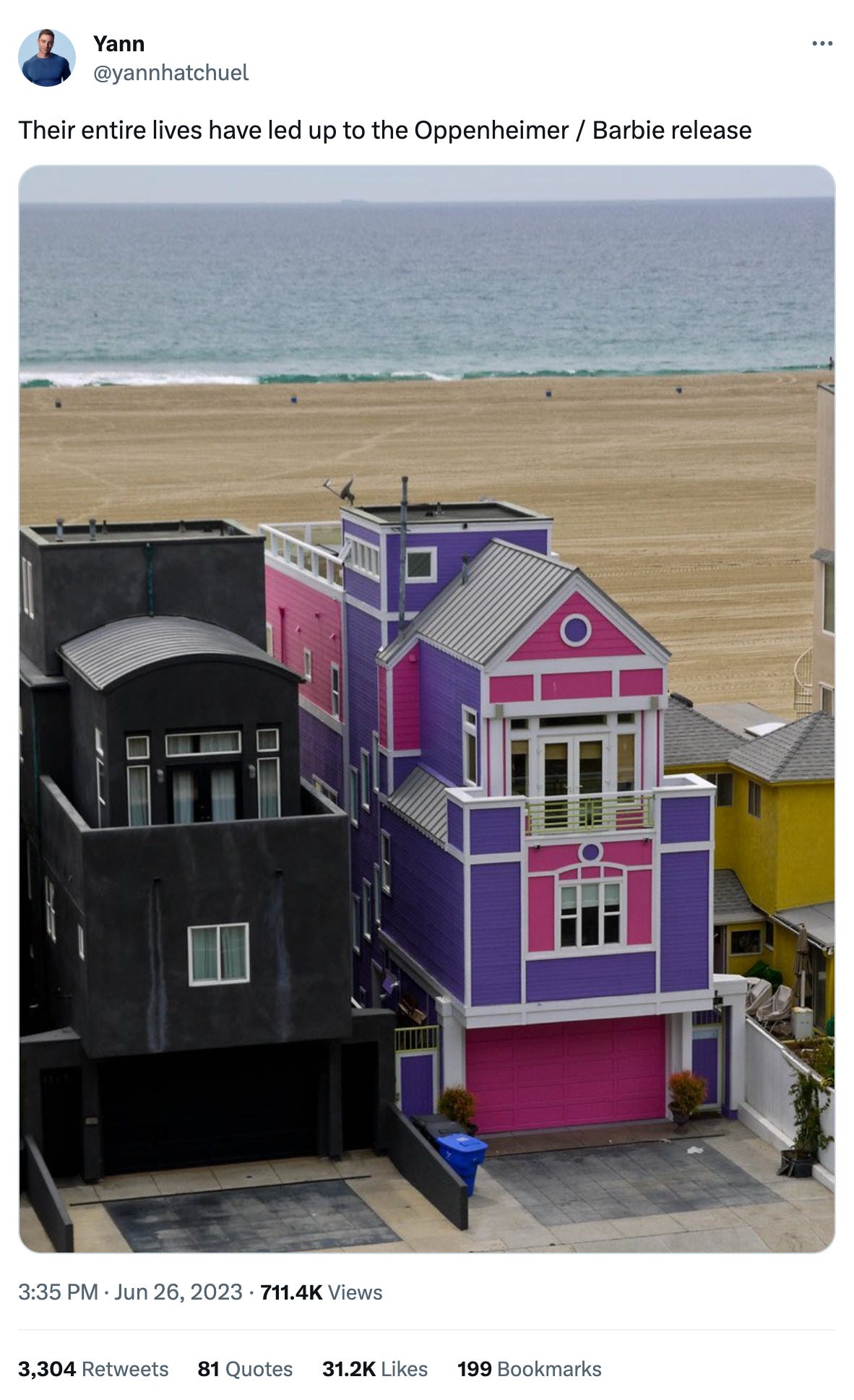

Those good tweets

For more good tweets every day, follow Casey’s Instagram stories.

Talk to us

Send us tips, comments, questions, and AI-free copy: casey@platformer.news and zoe@platformer.news.